AI-driven search is changing how content ranks as well as how it gets discovered, interpreted, and reused in answers.

For years, SEO has been largely a discovery problem: Can users and crawlers find your content? Today, it’s increasingly an interpretability problem: Can AI systems understand what your content means, connect it to the right entities, and confidently use it in generated responses?

This shift doesn’t replace traditional SEO foundations, but it does change what makes content visible. Structure, clarity, and contextual signals now play a bigger role in whether your content is surfaced or overlooked.

The traditional content hierarchy was built to serve two audiences: human visitors navigating by intent and Googlebot following links.

The hierarchy AI systems rely on is built on structured signals from schema markup, entity associations, internal linking patterns, and information density. If your architecture was designed for the old model, it may be less visible or harder to interpret in this new context.

Rather than being a purely technical rebuild, this shift requires aligning content strategy and technical SEO so that systems can both understand and trust what they find.

In this article, we’ll explore a content optimization hierarchy designed for AI-driven search, working from the foundation up. Each layer builds on the previous one and highlights both strategic and structural considerations.

The model is structured into four layers:

- Layer 1: Machine interpretability

- Layer 2: Strategic topic clusters

- Layer 3: Information architecture & density

- Layer 4: Experience signals & E-E-A-T (the credibility infrastructure AI systems trust)

Layer 1: Machine interpretability

Schema as structural language

Schema has always mattered for structured results: rich snippets, knowledge panels, breadcrumb trails. What’s changed is its role in AI Overviews and generative responses.

Structured data is not required for AI systems to understand content, but it can improve how reliably and accurately your content is interpreted, retrieved, and attributed.

A Search Engine Land study explored this by testing three otherwise identical pages with different schema implementations. Only the page with complete, well-structured schema appeared in AI Overviews.

The winning implementation wasn’t complex, but it was complete: Article schema with all required fields, FAQ markup, breadcrumb navigation, correct date formatting, and clear author and publisher attribution.

While schema alone doesn’t determine visibility, it reduces ambiguity and helps systems interpret your content more consistently.
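As an illustration of what "complete" can look like, here is a minimal Python sketch that builds Article markup as JSON-LD and checks it for gaps. The function names, the example values, and the `required` field list are assumptions made for this example; they are not the markup used in the study, and the fields you treat as required should come from the documentation of the search feature you are targeting.

```python
import json
from datetime import date


def build_article_jsonld(headline, url, author_name, publisher_name, published, modified):
    """Return Article markup as a dict, with ISO 8601 dates and explicit attribution."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": published.isoformat(),  # e.g. "2024-01-15"
        "dateModified": modified.isoformat(),
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
    }


def missing_fields(doc, required=("headline", "datePublished", "author", "publisher")):
    """List the fields from `required` (an assumed set) that are absent or empty."""
    return [field for field in required if not doc.get(field)]


# Hypothetical page values, for illustration only.
article = build_article_jsonld(
    headline="A content hierarchy for AI-driven search",
    url="https://example.com/blog/ai-content-hierarchy",
    author_name="Jane Doe",
    publisher_name="Example Media",
    published=date(2024, 1, 15),
    modified=date(2024, 2, 1),
)
print(json.dumps(article, indent=2))
print("Missing fields:", missing_fields(article))
```

The resulting JSON would typically be embedded in the page inside a `<script type="application/ld+json">` element.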

For technical teams managing large sites, this shifts schema from a “nice-to-have” to a clarity layer that supports downstream interpretation.

Entity markup and relationships

Beyond page-level schema, AI systems build understanding through relationships between entities: people, organizations, topics, and concepts.

Making these relationships explicit helps systems connect content across your site more effectively.

From a practical standpoint, this means ensuring that authors, topics, and related content are consistently connected, both structurally and contextually.
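One way to make these connections explicit is to give each author a stable `@id` and have every article point at it, so the same person is never described as a new, unrelated entity on each page. The sketch below assumes a hypothetical site (`example.com`), hypothetical URL patterns, and a hypothetical author; it shows the general JSON-LD pattern rather than a prescribed implementation.

```python
import json

SITE = "https://example.com"  # hypothetical site root


def person_node(slug, name, same_as=()):
    """Describe an author once, with a stable @id that other nodes can reference."""
    return {
        "@type": "Person",
        "@id": f"{SITE}/authors/{slug}#person",
        "name": name,
        "sameAs": list(same_as),  # external profiles that identify the same person
    }


def article_node(path, headline, author, topics):
    """Describe an article and link it to its author and topics."""
    return {
        "@type": "Article",
        "@id": f"{SITE}{path}#article",
        "headline": headline,
        "author": {"@id": author["@id"]},  # reference the author, do not redefine them
        "about": [{"@type": "Thing", "name": topic} for topic in topics],
    }


def build_graph(author, articles):
    """Combine the nodes into a single JSON-LD graph."""
    return {"@context": "https://schema.org", "@graph": [author, *articles]}


author = person_node("jane-doe", "Jane Doe", ["https://www.linkedin.com/in/jane-doe"])
articles = [
    article_node("/blog/log-file-analysis", "Log file analysis basics", author, ["log file analysis"]),
    article_node("/blog/crawl-budget", "Managing crawl budget", author, ["crawl budget"]),
]
print(json.dumps(build_graph(author, articles), indent=2))
```

Because both articles reference the same `@id`, a consumer of the markup can tell they share one author without having to match on the name string.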

For audit purposes, crawl data can help identify gaps at scale:

- Pages missing key schema types (Article, BreadcrumbList, Person)

- Inconsistent author attribution

- Disconnected entity relationships across templates
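The first of these checks can be sketched with the Python standard library alone. The example below assumes you already have each page's HTML (for instance, from a crawl export) and that the markup is embedded as JSON-LD; the class and function names are hypothetical, and Microdata or RDFa markup would need a different parser.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def schema_types(html):
    """Return every @type value found in the page's JSON-LD, including nested nodes."""
    parser = JsonLdExtractor()
    parser.feed(html)
    found = set()
    for block in parser.blocks:
        try:
            stack = [json.loads(block)]
        except json.JSONDecodeError:
            continue  # malformed markup is skipped here; a real audit should report it
        while stack:
            node = stack.pop()
            if isinstance(node, dict):
                declared = node.get("@type")
                if isinstance(declared, str):
                    found.add(declared)
                elif isinstance(declared, list):
                    found.update(t for t in declared if isinstance(t, str))
                stack.extend(node.values())
            elif isinstance(node, list):
                stack.extend(node)
    return found


def missing_types(html, expected=("Article", "BreadcrumbList", "Person")):
    """List the expected schema types that the page does not declare."""
    present = schema_types(html)
    return [t for t in expected if t not in present]
```

Running `missing_types` over every URL in a crawl and grouping the results by template is one way to see whether a gap is a one-off or a template-level problem.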

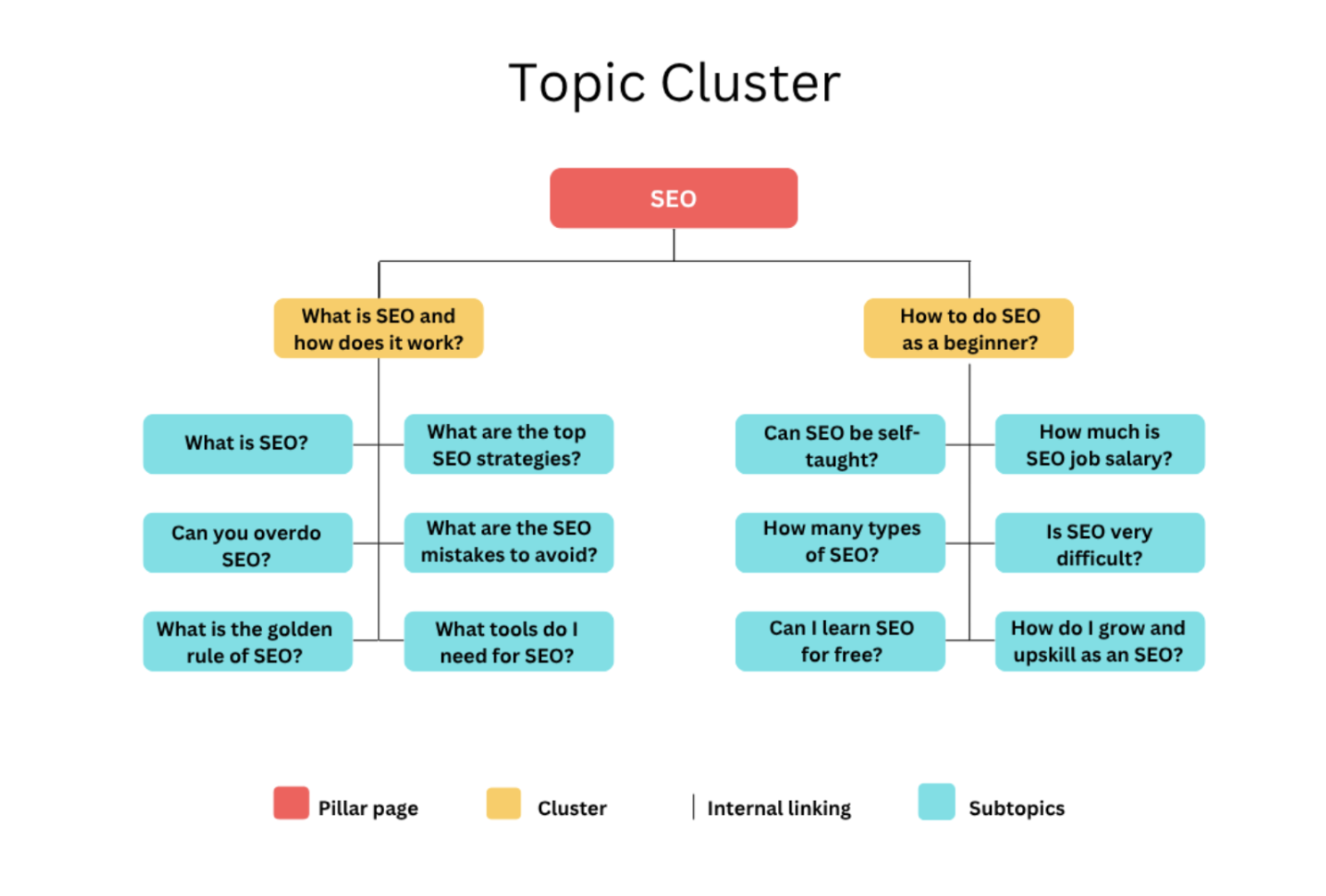

Layer 2: Strategic topic clusters

Topic clusters have been a content strategy staple for years. What’s changed is what they signal.

A well-linked cluster aids in navigation and also serves as evidence of topical authority.

AI systems likely evaluate patterns such as:

- Whether pillar pages link to supporting content

- Whether supporting pages reinforce each other

- Whether linking remains consistent across the cluster

The pillar-cluster model still holds. Pillar pages are high-level resources that cover a broad theme in depth – “technical SEO” or “log file analysis” for example – while cluster content addresses specific questions, use cases, and subtopics.

The key linking requirement hasn’t changed: subtopics should link to the pillar, and the pillar should link to subtopics.

What has changed is the diagnostic bar, the standard required to demonstrate authority. For traditional search, a cluster could function reasonably well with inconsistent internal linking. For AI-driven search, gaps in the linking pattern are more consequential. They interrupt the chain of authority signals the system uses to assess topical depth.

When auditing cluster strength, look for pillar pages with thin outbound internal linking, cluster pages that only link to the pillar but not to related cluster content, and orphaned pages sitting within a topic domain but structurally disconnected from it.
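These checks can be expressed as a few set operations over an internal link map. The sketch below assumes the map has already been built from crawl data as a dictionary of page URL to the set of internal URLs it links to; the function name, the example URLs, and the decision to define "orphaned" relative to the cluster (rather than the whole site) are assumptions for illustration.

```python
def audit_cluster(links, pillar, cluster_pages):
    """Flag structural gaps in one topic cluster.

    links: dict mapping a page URL to the set of internal URLs it links to.
    """
    cluster = set(cluster_pages)
    members = cluster | {pillar}

    def outlinks(page):
        return links.get(page, set())

    return {
        # Cluster pages the pillar never links to (thin outbound linking).
        "not_linked_from_pillar": sorted(cluster - outlinks(pillar)),
        # Cluster pages that do not link back to the pillar.
        "no_link_to_pillar": sorted(p for p in cluster if pillar not in outlinks(p)),
        # Cluster pages that link to no sibling cluster page.
        "no_sibling_links": sorted(
            p for p in cluster if not outlinks(p) & (cluster - {p})
        ),
        # Cluster pages with no inbound link from the pillar or any sibling.
        "orphaned_within_cluster": sorted(
            p for p in cluster
            if not any(p in outlinks(src) for src in members - {p})
        ),
    }


# Hypothetical link map for a "technical SEO" cluster.
links = {
    "/technical-seo": {"/crawl-budget", "/log-files"},
    "/crawl-budget": {"/technical-seo", "/log-files"},
    "/log-files": {"/technical-seo"},
    "/js-rendering": {"/technical-seo"},
}
report = audit_cluster(
    links,
    pillar="/technical-seo",
    cluster_pages=["/crawl-budget", "/log-files", "/js-rendering"],
)
for check, pages in report.items():
    print(check, pages)
```

In this made-up cluster, `/js-rendering` links up to the pillar but receives no links from it or from its siblings, so it is reported as structurally disconnected even though it sits within the topic.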

At scale, identifying these structural gaps manually becomes difficult, and this is where crawl-based analysis can support content strategy.

By analyzing internal linking patterns across templates and sections, platforms like Oncrawl make it possible to surface weak cluster connections, orphaned pages, and inconsistencies in how topics are structured.

For content teams, this creates a bridge between strategy and execution: instead of defining topic clusters in theory, you can validate whether they actually exist and function as intended across the site.

Layer 3: Information architecture & density

AI systems don’t read content the way humans do, and they don’t process it the way traditional crawlers do. They extract, chunk, and reconstruct it, synthesizing across sources to produce answers.

Content that’s structured to support that process is content that gets used.

In this context, information density becomes very important. Information density refers to how much specific, usable, and verifiable information a piece of content contains relative to its length.

High-density content includes:

- concrete data points

- named entities (people, tools, concepts)

- clear definitions and explanations

- original insights or examples

Low-density content tends to rely on generalizations, repetition, or high-level summaries without adding extractable value.

Thin content that describes concepts without providing concrete information gives AI systems little to work with. In contrast, content with specific, verifiable details provides material that can be extracted and attributed.
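There is no standard formula for information density, but a rough proxy can help triage pages for manual review. The sketch below is a deliberately crude heuristic of our own: it counts tokens containing digits (a stand-in for data points) and capitalized words that do not start a sentence (a stand-in for named entities), then normalizes per 100 words. It cannot judge whether a detail is accurate, verifiable, or original.

```python
def density_signals(text):
    """Count rough 'specificity' signals in a text and normalize them per 100 words."""
    tokens = text.split()
    numeric = 0       # tokens containing a digit, e.g. "1200" or "35%"
    capitalized = 0   # capitalized words that are not the first word of a sentence
    sentence_start = True
    for token in tokens:
        word = token.strip(".,;:!?()\"'")
        if any(ch.isdigit() for ch in word):
            numeric += 1
        elif word[:1].isupper() and not sentence_start:
            capitalized += 1
        # The next token starts a sentence if this one ends with terminal punctuation.
        sentence_start = token.endswith((".", "!", "?"))
    scale = 100 / len(tokens) if tokens else 0.0
    return {
        "words": len(tokens),
        "numeric": numeric,
        "capitalized": capitalized,
        "numeric_per_100": round(numeric * scale, 1),
        "capitalized_per_100": round(capitalized * scale, 1),
    }


# Two made-up sentences on the same subject, one specific and one generic.
specific = "The audit covered 1200 pages. Only 35% carried Article markup."
generic = "The audit covered many pages. Only some carried the right markup."
print(density_signals(specific))
print(density_signals(generic))
```

A score like this is best used comparatively, for example to rank pages within one template, rather than as an absolute threshold.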

Content chunking (short paragraphs, clear subheadings, modular sections that stand alone) has always improved readability.

No one wants to plough through 2,000 words of unbroken prose to find the one answer they need.

However, the structural argument now goes beyond readability. A well-chunked article is also easier for AI systems to extract from.

From a structural perspective, this translates into clear HTML hierarchy, well-defined sections, and content blocks that can stand alone when extracted.
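To see how a page might look once it is broken into extractable blocks, the sketch below splits HTML into sections at each heading and flags headings that skip a level (for example, an h3 directly after an h1). It is an approximation of chunking built on Python's standard `html.parser`, not a description of how any particular AI system segments content; the class and function names are hypothetical, and script or style text is not filtered out.

```python
from html.parser import HTMLParser

HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class SectionSplitter(HTMLParser):
    """Start a new section at every heading and collect the text that follows it."""

    def __init__(self):
        super().__init__()
        self.sections = []  # each item: {"level": int, "heading": str, "text": str}
        self._in_heading = False

    def handle_starttag(self, tag, attrs):
        if tag in HEADINGS:
            self.sections.append({"level": int(tag[1]), "heading": "", "text": ""})
            self._in_heading = True

    def handle_endtag(self, tag):
        if tag in HEADINGS:
            self._in_heading = False

    def handle_data(self, data):
        if not self.sections:
            return  # text before the first heading is ignored in this sketch
        key = "heading" if self._in_heading else "text"
        self.sections[-1][key] += data


def split_sections(html):
    """Return the page as a list of heading-delimited sections with tidy whitespace."""
    parser = SectionSplitter()
    parser.feed(html)
    parser.close()
    for section in parser.sections:
        section["heading"] = " ".join(section["heading"].split())
        section["text"] = " ".join(section["text"].split())
    return parser.sections


def skipped_levels(sections):
    """Return headings that jump more than one level below the previous heading."""
    flagged = []
    previous = 0
    for section in sections:
        if previous and section["level"] > previous + 1:
            flagged.append(section["heading"])
        previous = section["level"]
    return flagged
```

Reading each section's `text` on its own is a quick test of whether it still makes sense without the paragraphs around it.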

The multimodal dimension is increasingly relevant as AI systems now also process images, video, and audio alongside text. Alt text, video captions, and audio transcripts, which were initially integrated to meet accessibility requirements, also serve as the structured text layer that makes non-text content interpretable.

A page with a well-captioned video and a transcript is richer to an AI system than a page with the same video and no supporting text.
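A simple audit for this text layer is to look for images without alt text and videos without a caption or subtitle track. The sketch below uses Python's standard `html.parser`; the class name is hypothetical, and it only detects `<track>` elements inside `<video>`, so transcripts published as ordinary page text or captions delivered by a third-party player would not be recognized.

```python
from html.parser import HTMLParser


class MediaTextAudit(HTMLParser):
    """Find images without alt text and videos without a captions/subtitles track."""

    def __init__(self):
        super().__init__()
        self.images_missing_alt = []   # src values of images with no alt text
        self.videos_missing_track = 0  # count of videos with no text track
        self._video_has_track = None   # None while outside a <video> element

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "img":
            # Note: an empty alt is valid for decorative images; review hits manually.
            if not (attributes.get("alt") or "").strip():
                self.images_missing_alt.append(attributes.get("src") or "")
        elif tag == "video":
            self._video_has_track = False
        elif tag == "track" and self._video_has_track is not None:
            # In HTML, a <track> without a kind attribute defaults to subtitles.
            kind = attributes.get("kind") or "subtitles"
            if kind in ("captions", "subtitles"):
                self._video_has_track = True

    def handle_endtag(self, tag):
        if tag == "video" and self._video_has_track is not None:
            if not self._video_has_track:
                self.videos_missing_track += 1
            self._video_has_track = None


def audit_media(html):
    """Return the audit results for one page of HTML."""
    parser = MediaTextAudit()
    parser.feed(html)
    parser.close()
    return {
        "images_missing_alt": parser.images_missing_alt,
        "videos_missing_track": parser.videos_missing_track,
    }
```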

Layer 4: Experience signals & E-E-A-T

E-E-A-T is often treated as a content quality checklist, but that’s a simplification.

It’s better understood as a conceptual framework that reflects how search systems evaluate credibility.

Rather than being a set of direct signals, E-E-A-T reflects the types of signals systems attempt to approximate through ranking, retrieval, and entity understanding.

This becomes more visible in AI-driven search.

Systems like Google’s AI Overviews, ChatGPT’s browsing features, and platforms like Perplexity assemble answers from content that ranks highly and is consistently reinforced across trusted sources. The underlying signals remain largely the same.

Much of this credibility is inferred from the broader web ecosystem: links, mentions, reputation, and consistency across sources.

Technical elements, such as structured data, can support interpretation, but they do not replace these external signals.

Breaking down E-E-A-T in practice

Experience

AI systems favor content that demonstrates firsthand knowledge. Case studies, original data, and detailed documentation provide verifiable signals of real-world experience. Content that merely summarizes or rephrases existing information tends to be weaker on this dimension.

Expertise

Expertise is reflected in the depth, accuracy, and consistency of your content. Structured author information can support disambiguation, but expertise is primarily inferred from the content itself.

Authoritativeness

Authority emerges from external validation. Being cited, referenced, or linked to by other trusted sources in the same domain remains one of the strongest signals. This is both a content outcome and the result of visibility, reputation, and relationships within an ecosystem.

Trustworthiness

Trust is built through factual accuracy, transparency, and alignment with widely accepted information. Systems are more likely to surface content that is consistent with other reliable sources and less likely to rely on content that contradicts established knowledge without strong supporting evidence.

Structured data and attribution help reinforce clarity, but they function as supporting signals rather than foundational ones.

Build for humans, optimize for AI

The hierarchy isn’t a checklist to work through once. It’s an architectural model where each layer enables the next one.

Machine interpretability enables AI systems to read your content. Topic clusters establish topical authority. Information density gives AI systems something worth extracting. E-E-A-T signals give them a reason to trust it.

The brands that will lead in AI-driven search are already doing both: creating original, authoritative content grounded in real-world experience and structuring it in ways AI can understand and cite.

In this new era, relevance isn’t just about ranking; it’s about becoming the source AI wants to quote and users want to trust.

Build for people. Optimize for AI. Do both well and you future-proof your content.