Your site's technical SEO determines what gets seen

Audit and optimize how traditional and AI search engines view your site

Get the data to support your technical SEO decisions

What Oncrawl offers

Crawl data

Run comprehensive audits of your site's structure, crawlability, indexing signals, and internal linking.

Log data

Analyze server logs to see how Googlebot, Bingbot, and AI bots interact with your site.

Technical monitoring

Monitor the technical factors that influence crawl efficiency and indexing.

Put your technical SEO data to work

Audit and monitor your site's technical health

Your team shouldn’t have to find out about technical problems from a traffic drop.

- Run technical SEO audits across complex sites.

- Surface crawlability, indexing, and rendering issues systematically.

- Monitor technical health over time and detect regressions early.

- Use custom data points alongside standard metrics to match your workflow.

See how bots actually crawl your site

Your log files show what search engines and AI systems actually do on your site.

- Monitor how Googlebot, Bingbot, and other crawlers navigate your site.

- Track AI bot activity on your site.

- Identify wasted crawl budget and inefficient crawl paths.

- See which pages search engines prioritize and which they ignore.
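To make the idea of reading bot behavior from log files concrete, here is a minimal sketch in Python. It is not Oncrawl's implementation. It assumes logs in the common "combined" access log format, and the `BOT_TOKENS` mapping, the function names, and the sample log lines are all hypothetical. It also matches on user-agent strings only, which can be spoofed, so a real analysis would likely need to verify bot identity as well.

```python
import re
from collections import Counter

# Hypothetical mapping from lowercase user-agent substrings to bot labels.
BOT_TOKENS = {
    "googlebot": "Googlebot",
    "bingbot": "Bingbot",
    "gptbot": "GPTBot",
}

# Matches the request, status, size, referrer, and user-agent fields
# of a combined-format access log line (an assumption about the log format).
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def classify_bot(user_agent):
    """Return a bot label if the user agent contains a known token, else None."""
    ua = user_agent.lower()
    for token, label in BOT_TOKENS.items():
        if token in ua:
            return label
    return None


def count_bot_hits(lines):
    """Count hits per (bot, path), skipping unparseable lines and non-bot traffic."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        label = classify_bot(match.group("agent"))
        if label:
            hits[(label, match.group("path"))] += 1
    return hits


# Made-up sample lines for illustration only.
SAMPLE_LOG = [
    '66.249.66.1 - - [10/Jan/2025:10:00:00 +0000] "GET /products HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Jan/2025:10:05:00 +0000] "GET /products HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '157.55.39.1 - - [10/Jan/2025:10:06:00 +0000] "GET /about HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"',
    '20.15.240.1 - - [10/Jan/2025:10:07:00 +0000] "GET /blog/post HTTP/1.1" 200 4096 "-" "Mozilla/5.0; compatible; GPTBot/1.0"',
    '203.0.113.5 - - [10/Jan/2025:10:08:00 +0000] "GET /products HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

if __name__ == "__main__":
    for (bot, path), count in sorted(count_bot_hits(SAMPLE_LOG).items()):
        print(f"{bot:10} {path:15} {count}")
```

Comparing these counts with the list of pages you want indexed is one simple way to see which pages crawlers favor and which they never request.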

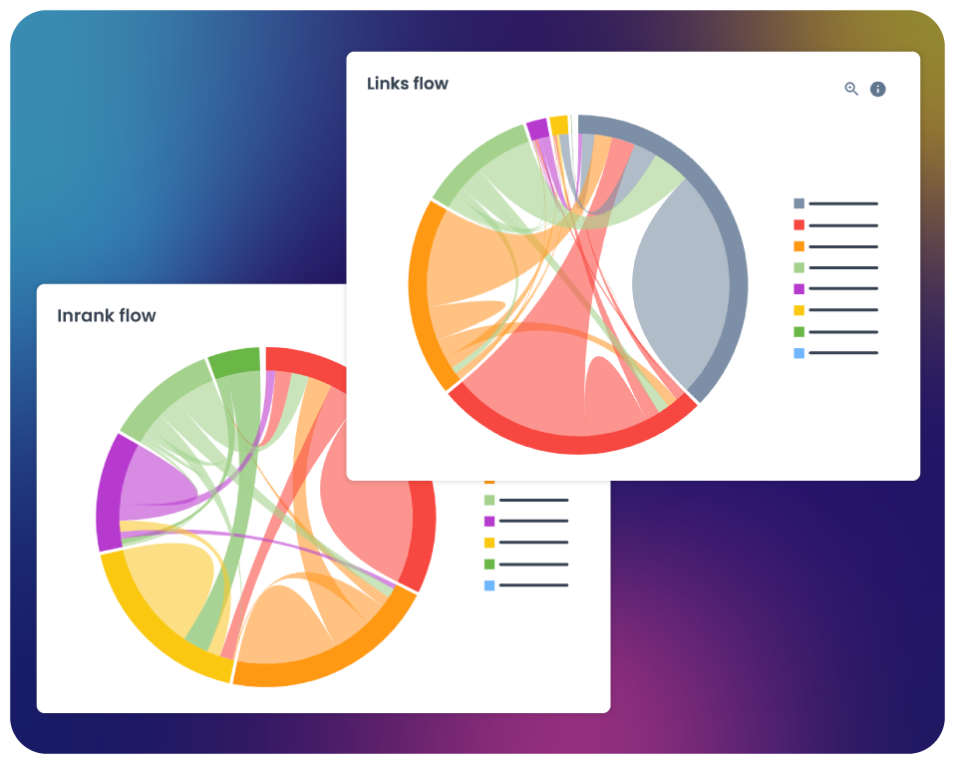

Strengthen your site architecture and internal linking

Spot architectural weaknesses that limit how effectively search engines navigate your site.

- Find orphan pages and sections with weak or broken internal links.

- Analyze how link equity is distributed across your site’s architecture.

- Identify structural bottlenecks where important pages are buried too deep.

- Ensure your internal linking strategy supports both crawl efficiency and content prioritization.
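The checks for orphan pages and deeply buried pages can be illustrated with a short Python sketch. It is an illustration under stated assumptions, not a description of how Oncrawl computes these metrics. It assumes the internal link graph is already available as a dictionary, treats a page as an orphan when it cannot be reached by following links from the home page, and measures depth as the minimum number of clicks from the home page. The `LINKS` and `ALL_PAGES` data, the function names, and the depth threshold are hypothetical.

```python
from collections import deque


def click_depths(links, start):
    """Breadth-first search: minimum number of clicks from start to each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def find_orphans(all_pages, depths):
    """Known pages that were never reached from the start page."""
    return sorted(set(all_pages) - set(depths))


def find_deep_pages(depths, max_depth):
    """Reachable pages buried deeper than max_depth clicks."""
    return sorted(page for page, depth in depths.items() if depth > max_depth)


# Hypothetical internal link graph: page -> pages it links to.
LINKS = {
    "/": ["/products", "/blog"],
    "/products": ["/products/shoes"],
    "/products/shoes": ["/products/shoes/red"],
    "/blog": ["/"],
    "/old-landing": ["/products"],
}

# Hypothetical list of all known URLs, for example from a sitemap.
ALL_PAGES = [
    "/",
    "/products",
    "/blog",
    "/products/shoes",
    "/products/shoes/red",
    "/old-landing",
]

if __name__ == "__main__":
    depths = click_depths(LINKS, "/")
    print("Orphans:", find_orphans(ALL_PAGES, depths))
    print("Deeper than 2 clicks:", find_deep_pages(depths, max_depth=2))
```

In this sample graph, `/old-landing` links out to other pages but nothing links to it, so it is never reached from the home page.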