Pubcon is one of the key search events in the US every year, and hosts the US Search Awards ceremonies. Pubcon 2019 in Las Vegas drew excellent speakers and talented audiences.

In case you couldn’t attend, or if you missed a session you wanted to see, we’ve collected some of the top reactions and best takeaways from a selection of sessions.

Google Keynote – Gary Illyes

Mining server log data for SEO benefits – Jackie Chu

International content and multilingual keyword research – Motoko Hunt, Michael Bonfils, Keith Goode

On-site search optimization – JP Sherman

Keynote: Deep Learning at Microsoft’s Bing – Maria Kang and Frederic Dubut

International SEO – Aleyda Solis and Patrick Stox

Advanced SEO Site Auditing – Bill Hartzer

Managing global SEO performance – Bill Hunt and David Iwanow

Keynote: Best Practices in SEO – Nathan Johns

Merging Psychology and Content Creation – Michael Bonfils, Rebecca Murtagh

Keynote: Crawling Best Practices and New Abilities from Bing – Fabrice Canel, David Schachter

SERP Analysis and Content that Ranks – Viola Eva, Roger Montti

In-house Content Strategy – Keith Goode, Topher Kohan

Man vs Machine: How to Remain Relevant in an Increasingly Automated World – Navah Hopkins

In-house SEO: Managing Teams and Departments – Peter Leshaw, Matt Cullen

Google Keynote – Gary Illyes

Gary Illyes’ keynote touched on a lot of the subjects that have marked the SEO world in 2019, from the recent noindex changes to the series of indexing issues between April and June. Here’s what Twitter thought were the best takeaways.

Noindex in robots.txt:

- The vast majority (99.99%+) of websites that were using noindex in robots.txt were using it incorrectly, and many were ‘mom and pop’ businesses accidentally noindexing important content. One of the reasons they deprecated noindex in robots.txt. (source)

- “Percentage of sites that were shooting themselves in the foot with noindex directives when parsed from robots.txt: >99.999%” (source)

- Open source robots.txt parser available on Github (source)
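A quick way to see the difference between a supported rule and an unsupported one is to run a robots.txt file through a parser. The sketch below uses Python's standard-library `urllib.robotparser` as a stand-in. It is not Google's open source parser, and the robots.txt content and example.com URLs are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt mixing a standard rule with a "Noindex" line.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Noindex: /old-page/
"""

def build_parser(text):
    """Parse robots.txt content from a string instead of fetching it."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser

parser = build_parser(ROBOTS_TXT)

# Disallow is a standard directive, so this URL is blocked from crawling.
private_allowed = parser.can_fetch("Googlebot", "https://example.com/private/report.html")

# "Noindex" is not a directive this parser knows, so the line is skipped
# and the URL stays crawlable.
old_page_allowed = parser.can_fetch("Googlebot", "https://example.com/old-page/")

print(private_allowed, old_page_allowed)
```

In this sketch the Noindex line has no effect at all, which is one way a rule like this can quietly fail to do what its author intended.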

Google’s indexing problems:

- In April of 2019, Google lost parts of their index. Shortly after that they had a completely unrelated rendering failure. Some of the highest profile URLs in the index were swapped out for random lower quality ones. (source)

Hidden content and featured snippets:

- Gary Illyes says the content in tabs or other hidden sections of the site can be pulled into snippets but the keyword searched may not be bolded in the SERP. (source)

- If you hide content with CSS and users tap to reveal it: previously, Google was not using that content for retrieval. The web has since evolved, and if you hide something with JS that is executed during rendering, it’s fine: the hidden content is indexable. (source)

Links: rel=sponsored and rel=ugc:

- Q: Do rel=sponsored and rel=ugc change the flow of PageRank?

A: No. They carry extra information and that’s the only difference. (source) (source)

- Rel=sponsored and rel=ugc are optional and you don’t have to use them. Google would like it if you implement them to help them understand linking patterns and how people are linking across the web. (source)

- People will always find a way to abuse things (source)

- Google introduced a few new #link tags to better understand linking and linking patterns. (source)

- Links are very important for Google to understand what’s happening on the web. Say, CNN is linking fake news with nofollow. Then Google can’t see what’s there if they obey nofollow. (source)

Javascript:

- React and other frameworks — go light with them and prerender because they are heavy for search engines. (source)

Core updates:

- Core update tips:

– don’t focus on core updates

– Google is just fine tuning algorithms to offer more relevant & higher quality content

– doesn’t mean your content sucks

– another page/site whose content is higher quality & more relevant to users’ queries (source)

- Core updates are about content. A core update rankings drop just means somebody else’s content is more relevant than yours for the query. There’s no one issue to fix. (source) (source)

- Core updates are not punishing domains (like old algorithm updates) and therefore there is no concept of “winners” or “losers” in core updates (source)

Header tags vs formatting:

- H tags. Headings are headings to Google, whether styled with CSS or marked up with semantic HTML. But they are necessary for accessibility / screen readers for sure! (source) (source)

Snippet text:

- Google now recognizes code that lets you control which data from your site it is allowed to use for snippets. (source)

- Limiting snippets could cause negative impact on site if it influences clicks. Snippets use click data to know if it’s helping users. (source)

- “Our snippeting algorithm is optimized for clicks” so sometimes (but not always) the Google-generated snippet may generate higher clicks. (source)

Webmaster conferences:

- In 2019, Google’s Webmaster Conference reached:

– 12 languages

– 35 locations

– over 5000 people

(source)

- Google’s new webmaster conferences will be in cities where there are not currently good SEO conferences. (source)

Mining server log data for SEO benefits – Jackie Chu

Jackie Chu gave a high-level introduction to using server log data for SEO. She showed concrete examples of SEO insights you can draw from log files, gave a demo of using Bing Webmaster Tools for SEO tricks, and shared her enthusiasm for log analysis in general. Here are some of the best tips from her presentation:

.@jackiecchu dropping log file analysis knowledge bombs today and I’m in heaven. #womenintech #pubcon pic.twitter.com/rgdxl5fkGn

— Jennifer Wright (@loveduke) October 8, 2019

- Server log analysis and subsequent visualization can help the 65% of people who are visual learners. (source)

Pruning:

Great tips for removing pages from your site to benefit crawl budget. #pubcon pic.twitter.com/XFpJBXjWVY

— Candice Lyna (@candicelyna) October 8, 2019

- Pruning pages to improve crawl efficiency (source)

- Do you really need all those topic pages? Quick way to cut low value pages (source)

- When pruning, if the link has value, 301 the link to the closest page. (source)

- “I love getting rid of pages” pruning useless content chaff is critical to optimizing crawl budget & rankings (source)

Crawl budget:

- Google spent 5 BILLION on crawling…in 2014!!! That could be up to 14 Billion now. But no worries…your CSR website is totally fine… (source)

- Use server logs to identify rate limiting. (source)

- Server log data can be used to determine how quickly Google is able to crawl pages. You can see the time stamp difference between crawls. (source)

- “When I first look at an enterprise site, creating new content is rarely at the top of my priority list.” (source)

- Reduce avg. request time to improve crawl budget (source)

- Compelling data on country/language variations of pages vs language-only pages from @jackiecchu – consolidated 6 different versions of EN pages (/us/en; /ca/en; /in/en, etc) into one /en/ subfolder to improve crawl rate, saw immediate step-up improvements in rankings (source)

- Reevaluate use of Locale / Lang to improve crawl budget (source)
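The timestamp and rate-limiting tips above can be sketched in a few lines. This is a minimal example, assuming access logs in the common "combined" format. The sample lines, IP addresses, and function names are invented for illustration, and the bot is matched on its user-agent string only, which can be spoofed.

```python
import re
from datetime import datetime

# Hypothetical access-log lines in the "combined" log format.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE_LOG = [
    f'66.249.66.1 - - [08/Oct/2019:10:00:00 +0000] "GET /a HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [08/Oct/2019:10:00:01 +0000] "GET /a HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    f'66.249.66.1 - - [08/Oct/2019:10:00:04 +0000] "GET /b HTTP/1.1" 200 734 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [08/Oct/2019:10:00:10 +0000] "GET /c HTTP/1.1" 429 0 "-" "{GOOGLEBOT_UA}"',
]

LINE_RE = re.compile(
    r'\[(?P<ts>[^\]]+)\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)

def googlebot_hits(lines):
    """Return (timestamp, path, status) for requests whose user agent mentions Googlebot."""
    hits = []
    for line in lines:
        match = LINE_RE.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        ts = datetime.strptime(match.group("ts"), "%d/%b/%Y:%H:%M:%S %z")
        hits.append((ts, match.group("path"), int(match.group("status"))))
    return sorted(hits)

def crawl_gaps(hits):
    """Seconds between consecutive Googlebot requests."""
    return [(b[0] - a[0]).total_seconds() for a, b in zip(hits, hits[1:])]

hits = googlebot_hits(SAMPLE_LOG)
gaps = crawl_gaps(hits)

# 429 and 503 responses served to the bot are a possible sign of rate limiting.
rate_limited = [path for _, path, status in hits if status in (429, 503)]

print(gaps, rate_limited)
```

On real logs, the same gaps could be grouped by site section or by locale folder to compare how quickly each part of the site is being crawled.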

Bing’s Webmaster Tools:

- Walk-through on subfolder index reporting (source)

- Use Bing index explorer (under reports & data) for some crawl data. See all the folders it’s discovered. Useful if you don’t have any log data access (source)

International content and multilingual keyword research – Motoko Hunt, Michael Bonfils, Keith Goode

Motoko Hunt, Michael Bonfils, and Keith Goode spoke about international content and keyword research.

@motokohunt Pro Tip for International SEO – Baidu does not render JavaScript!!! So if you are competing in the mainland China market, you need to keep important info in the html #pubcon

— Callum Scott (@mrcallumscott) October 8, 2019

On-site search optimization – JP Sherman

JP Sherman presented an overview of on-site search: why it matters and how to optimize it. On Twitter, there was a lot of agreement on his observations:

How can you improve your onsite search? These are the five ways. @jpsherman #pubcon pic.twitter.com/iwNnoifbI4

— Casie Gillette (@Casieg) October 8, 2019

Five ways to improve on-site search:

- Change the snippet

- Change the SERP

- Measure

- Add context

- If it doesn’t work on mobile don’t do it… (source)

Failure is fun! Always be experimenting @jpsherman #Pubcon pic.twitter.com/tvZYguV30I

— Brian McDowell (@brian_mcdowell) October 8, 2019

Addressing content:

- Measure content efficacy to figure out which content you want to kill… (source)

- As SEOs, you know how to improve search results already. These things we already do improve site search (descriptions, keywords, etc.). (source)

User intent and behavior:

- “Users are weird and not super predictable.” (source)

- Location can influence user intent for the same keyword. For example, “bike tires” can mean “road bike tires” in one place and “mountain bike tires” in another location. A curated knowledge graph can disambiguate user intent when they’re searching (source)

Keynote: Deep Learning at Microsoft’s Bing – Maria Kang and Frederic Dubut

Deep learning is a topic that comes up regularly when discussing search engine technology related to understanding what humans want and how they search. We heard about it from Google a few years ago; at Pubcon, we heard the latest from Bing.

Maria Kang and @CoperniX great presentation at #Pubcon of usage of Machine Learning at @bing …. and yes the internet is made of cats. pic.twitter.com/kwRSgjafsJ

— Fabrice Canel (@facan) October 8, 2019

SEO fundamentals at Bing:

- Less keywords more intent… SEO fundamentals are here to stay but search engines are made by humans for humans (source)

- Bing has its own set of what Google calls E-A-T called QC: quality and credibility – which is used by human raters to help Bing’s algorithm learn from their feedback. (source)

- “Search is made by humans for humans” (source)

Deep learning:

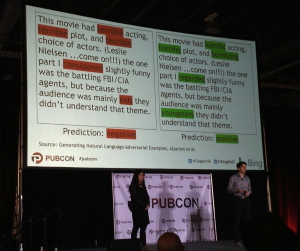

- Lots of engineering is still at play to avoid fooling the algorithms with intent stuffing the way search engines were fooled by keyword stuffing. Just a slight variation in copy fooled the system’s prediction output in this example (source) (source)

- Reference to Hamlet Batista’s article “Automated intent classification using deep learning” (source)

- Machine learning to generate “intelligent answers” on Bing, similar to “featured snippets” on Google… (source)

- 7 tools to help you play with NLP (source)

International SEO – Aleyda Solis and Patrick Stox

You can find Patrick Stox’s slides for “International SEO: The Weird Technical Parts including misconceptions, things you can get away with, and how to troubleshoot” and Aleyda Solis’s slides for “International SEO: Top Do’s and Dont’s” on Slideshare.

I always learn something new from @patrickstox 👏 so knowledgeable and experienced in complex enterprise level scenarios #pubcon pic.twitter.com/PlCTs7Wf62

— Aleyda Solis 🕊️ (@aleyda) October 8, 2019

Advanced SEO Site Auditing – Bill Hartzer

Bill Hartzer does more than 100 SEO audits annually, on everything from mini-sites to sites with over 75 million pages. He shared some of the takeaways from this experience at Pubcon:

Common mistakes (source) (source):

- http/s mismatches

- Internal linking in mega menus

- Duplicate content

- Poor site structure

- Orphan pages

- Bad links

- Over-optimization
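Of the mistakes listed above, orphan pages lend themselves to a simple check: compare the URLs a site is known to serve against the URLs that internal links actually point to. The sketch below is one rough way to do that, assuming you already have both datasets (for example from a sitemap or log files and from a crawl). The function name and sample URLs are hypothetical.

```python
def find_orphans(known_urls, internal_links):
    """Return known URLs that no other page links to.

    known_urls: URLs the site is known to serve (e.g. from a sitemap or logs).
    internal_links: dict mapping a page URL to the set of URLs it links to.
    """
    linked = set()
    for source, targets in internal_links.items():
        # A page linking to itself does not make it reachable from elsewhere.
        linked.update(target for target in targets if target != source)
    return sorted(set(known_urls) - linked)

# Hypothetical data for a four-page site.
known = ["/", "/products", "/blog", "/old-landing"]
links = {
    "/": {"/products", "/blog"},
    "/products": {"/"},
    "/blog": {"/", "/blog"},
}

orphans = find_orphans(known, links)
print(orphans)
```

Here `/old-landing` is served by the site but never linked internally, so it is the only page reported.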

#Pubcon looks like @OnCrawl is a popular tool @bhartzer uses for his seo audits to find old orphaned content left on the server that are still receiving traffic from Google and Googlebot pic.twitter.com/RWDjfhWmZM

— iwanow@aus.social (@davidiwanow) October 8, 2019

Recommendations:

https://twitter.com/SimonHeseltine/status/1181693805021413377?s=20

- Focus on making an impact when looking at an audit. It’s OK to ignore some issues! (source)

- Scale & prioritization of issues certainly depends on size of site and potential impact of issues and potential lift in fixes. (source)

- What do title tags look like? What’s on the last page, is anything excluded? (source)

The Advanced SEO Site Auditing presentation from Bill Hartzer (@bhartzer) at Pubcon Las Vegas 2019 really reaches out and grabs you #BillHartzer #pubcon #seoaudit #seoauditing #pubconlasvegas #pubconlv #pubconconference pic.twitter.com/UVx8kmEsq9

— Ken Vitto (@Ken_Vitto) October 8, 2019

Managing global SEO performance – Bill Hunt and David Iwanow

Bill Hunt and David Iwanow talked about localization for SEO, and steps to avoid international SEO errors we commonly see. They used examples from different regions to cover both single- and multi-language international SEO strategies.

International SEO can get crazy local and granular when it come to usage of some terms. These nuances are ones that Google translate misses! #Pubcon @davidiwanow pic.twitter.com/hZcdzBZjhf

— John Morabito (@JohnMorabitoSEO) October 8, 2019

Things to consider when expanding into international markets (source):

- Don’t refuse to translate it because most of your audience understands it

- If you’re just Google translating it, you are doomed to fail

- If you are not localising your content, you are likely missing the mark

- Consider using data from internal sources such as Google Analytics or GSC

- Google Trends can also be useful for highlighting regional bias

- Engage with your local team for insights you can’t find in SEO tools

- Doing localised content is harder, but doing it right is worth it

- Localised content has a better chance of success in SEO

- Localised content has higher engagement and conversion rates

- Make sure you have suitable budget/investment to support you

Recommendations:

- Sometimes an opportunity for international is around secondary languages spoken in a given geographic region. (source)

- One great option for hreflang is to add the HTTP headers to each page if you have a great development team. Bill Hunt has a preference for putting them into the XML sitemap (source)

- Broken XML sitemaps won’t be indexed (source)
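For readers who haven't seen hreflang in a sitemap, here is a minimal sketch of generating one with Python's standard library. It follows the usual pattern of `xhtml:link` alternates inside each `url` entry. The function name and the example.com URLs are assumptions for illustration, not something shown in the session.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"

def build_hreflang_sitemap(alternates):
    """Build a sitemap string for one piece of content.

    alternates: dict mapping an hreflang code to the URL of that version.
    """
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("xhtml", XHTML_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for url in alternates.values():
        entry = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(entry, f"{{{SITEMAP_NS}}}loc").text = url
        # Every version lists all versions, itself included.
        for lang, href in alternates.items():
            ET.SubElement(
                entry,
                f"{{{XHTML_NS}}}link",
                {"rel": "alternate", "hreflang": lang, "href": href},
            )
    return ET.tostring(urlset, encoding="unicode")

# Hypothetical English and German versions of the same page.
sitemap_xml = build_hreflang_sitemap({
    "en": "https://example.com/en/pricing",
    "de": "https://example.com/de/preise",
})
print(sitemap_xml)
```

Building the file with an XML library rather than string concatenation also helps keep the output well-formed.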

Great survey data from @davidiwanow about what happens when US users see https://t.co/eSA2UHbuJ7 in a search. #Pubcon pic.twitter.com/sp5mILkNiP

— John Morabito (@JohnMorabitoSEO) October 8, 2019

Keynote: Best Practices in SEO – Nathan Johns

Include SEO early.

– website design and dev

– site content and structure

– biz dev

– market research

Think of SEO early and often. @nathanjohns #Pubcon

— Jennifer Slegg (@jenstar) October 9, 2019

Advice from Google:

- How to get #SEO help from Google: (source)

– YouTube channel: Google Search News (new) and Webmaster Office Hours (live Q&A)

– #AskGoogleWebmasters on Twitter

– Webmaster Help Forums – given on slide as g.co/webmasterforums

– Google Webmaster Conference

- Nathan encourages everyone to take as many codelabs as possible. You can learn a ton from them. (source)

- Submit spam reports. They are always looking at new ways of spam. Every spam report gets looked at. (source)

- Q. Do we need to worry about links not reported in GSC?

A: Treat the links in GSC as canonical. You don’t need to worry about links that aren’t showing up in GSC, and you don’t need third-party tools.

(source)

Site speed:

- Average mobile page speed last year… 15 seconds, down from 22, but still terrible (source)

The importance of content:

- Unique and compelling content. Content is king. Content is super important. Content helps users understand what your website is about and helps search engines serve that site to users. (source)

Schema.org and structured data:

- FAQ Schema might not be great for conversion rate, but it helps satisfy the user and help keep them coming back. It’s a win for the user and they’re going to remember that you helped them. (source)

- Don’t use FAQ structured data on pages where questions or answers can be user generated or modified. (source)

- Structured data shouldn’t be focused on converting users; it should be about satisfying them… How-to schema has 2 kinds: standard, and rich results with images. Don’t use it for recipes; it is only available on mobile (source)

Ways to replace noindex directives in robots.txt (source):

- Noindex in HTML or header

- 404 or 410

- Password protection

- Disallow in robots.txt

- URL removal tool in GSC
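Several of the replacements above come down to what the server sends back for a URL. As a rough sketch, the first three options might look like this. The option names and the `removal_response` helper are hypothetical and not tied to any particular web framework. Disallow rules and the URL removal tool are left out because they live in robots.txt and in GSC rather than in the response.

```python
# Noindex in HTML: a robots meta tag placed in the page's <head>.
NOINDEX_META = '<meta name="robots" content="noindex">'

def removal_response(option):
    """Return (status_code, extra_headers) for a hypothetical removal option."""
    responses = {
        # Noindex in the HTTP header: the page still loads for users.
        "noindex_header": (200, {"X-Robots-Tag": "noindex"}),
        # 404 or 410: the page is no longer served at all.
        "not_found": (404, {}),
        "gone": (410, {}),
        # Password protection: crawlers cannot access the content.
        "password": (401, {"WWW-Authenticate": 'Basic realm="private"'}),
    }
    if option not in responses:
        raise ValueError(f"unknown option: {option}")
    return responses[option]

status, headers = removal_response("noindex_header")
print(status, headers)
```

Which option fits depends on whether users still need to reach the page: a noindex header keeps it available, while a 404 or 410 removes it for everyone.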

Darn, @nathanjohns isn't at liberty to say what new features are coming to search console, including ones from old GSC. @nathanjohns #pubcon

— Jennifer Slegg (@jenstar) October 9, 2019

Merging Psychology and Content Creation – Michael Bonfils, Rebecca Murtagh

Understanding your audience:

- Michael Bonfils recommends using https://crystalknows.com for user profiling and getting to know your audience. (source)

- Get great character traits for your audience. Kinda like Cambridge Analytica but less creepy. (source)

@michaelbonfils Showing us different typical personas and how different they are. Red, blue, green and yellow. Which one are you #Pubcon? Can't wait to put my hands on those slides, the content is amazing!

— Pascal Côté (@Axxurge) October 9, 2019

Creating content:

- “If you’re able to create content that answers to a specific MOTIVE, you’re most probably going to drive people to it.” (source)

Keynote: Crawling Best Practices and New Abilities from Bing – Fabrice Canel, David Schachter

The keynote by Bing was a great reminder of the importance of other search engines in the world of SEO today. Bing’s usage, as well as its abilities and tools, has grown in recent years.

URL and content submission API:

- Bing is testing out giving very large websites access to a content submission API, which would let those sites get their content indexed immediately without Bingbot needing to be pinged to come crawl the URL (source)

- Huge news from @bing their brand new real time content API they are piloting bypasses Bingbot when publishing new content. (source)

- One of the sites using this is LinkedIn, and new content can be indexed within minutes (instead of days). The result is that Bing now has the most up to date version of hundreds of thousands of LinkedIn profiles (source)

Direction of growth at Bing:

- What Bing is hoping to do, and how it aligns with what SEOs want to do (source)

– Maximize search index comprehensiveness

– Maximize search index freshness

– Maximize content intelligence

– Clean the index

- Bing is growing, now with over 1/3 of the market share in the US (source)

Bing has more search engine share than most think.@facan explains how the goals of his team @BingWMC align with the goals brands have for #SEO

@Pubcon Pro Las Vegas pic.twitter.com/cgWZg5AoI3

— Rebecca Murtagh 💡 (@VirtualMarketer) October 9, 2019

Support for Javascript with a new evergreen bot:

- JS, a major announcement from @Bing New evergreen Bingbot is Microsoft Edge (source)

- Bing announces major overhaul of Bingbot to handle JS better than before, increasing compatibility significantly. (source, with before and after photos)

SERP Analysis and Content that Ranks – Viola Eva, Roger Montti

Viola Eva and Roger Montti talked about content and keywords for SERP success. Their clever turns of phrase impressed the Twitter audience:

When you play cards with Google, Google is already showing their hand (just look at the SERPs) Viola Eva #pubcon944

— Bill Slawski ⚓ 🇺🇦 (@bill_slawski) October 9, 2019

Your old content is not all washed up, fresh content is important, but freshening old content may drown you @martinibuster says at the 2019 Las Vegas Pubcon#pubcon #rogermontti #seo #SearchEngineOptimization #pubcon2019 #pubconlasvegas #seoranking pic.twitter.com/MgtYrMDCBh

— Ken Vitto (@Ken_Vitto) October 9, 2019

#pubcon SEJ writer Viola Eva urges optimizing for contextual keywords. What are they? “words that naturally appear in a conversation” pic.twitter.com/qx8BiUAKa1

— SearchEngineJournal® (@sejournal) October 9, 2019

In-house Content Strategy – Keith Goode, Topher Kohan

Keith Goode and Topher Kohan gave a ton of amazing advice on content strategy.

I guess @Topheratl is SEO Santa Claus? #Pubcon pic.twitter.com/ak8A2ZagGd

— Joe Youngblood (@YoungbloodJoe) October 9, 2019

Recommendations from Topher Kohan:

- Identify what content Google is showing in the search results for your query and then fill that gap. (source)

- Don’t push on content creators too hard. Burn out sets in, quality drops off, it’s no longer fun for them. (source)

- “Track all your data, and show your content creators that people are actually viewing their stuff, they love that” (source)

- “Always be marketing your product internally” (source)

- “Be ruthless, try to destroy your competitors. If you’re not thinking that way, I guarantee they are.” (source)

- Your site can give context to what a user wants and that’s important. (source)

The example of the [weather] search:

- 75% of people who check the weather check the second source because they don’t trust the first source (source)

- Most of the traffic to http://weather.com is weather + (modifier/location). It’s in the results but people still click because they want more context. Is it going to be hot, cold, or is it going to rain/snow. (source)

- The #1 search query for The Weather Company is “weather + {modifier}” but Google shows the weather, so why would they click? (source)

Insights from Keith Goode:

- Match your site experience with the user journey. (source)

- “Businesses should obsess over the journey and the story and not the keyword data” (source)

- The more specific the search, the lower the search volume, but the more likely they are to purchase. (source)

Man vs Machine: How to Remain Relevant in an Increasingly Automated World – Navah Hopkins

Watching @navahf break down what to own and what to automate— probably one of the best sessions on this topic! 👏🏼👏🏼👏🏼 #Pubcon pic.twitter.com/tGqHDQT488

— Purna Virji (@purnavirji) October 9, 2019

Some of Navah Hopkins’ insights included:

- Think about local variations in keywords using tools like Google Trends (source)

- PPC insights: “I highly recommend opting into DSAs at the campaign level, not the ad group level.” (source)

- TV ads have a greater influence on consumer intent as compared to print. (source)

- Use original, entertaining and relevant content to differentiate your brand, not technical optimizations. (source)

- Match types in Google Ads are now more of a signal (source)

How do we stay relevant as PPC service providers with the rise of automation with @navahf #Pubcon pic.twitter.com/dA20z9Gan7

— Robyn Johnson (@AMZRobynJohnson) October 9, 2019

In-house SEO: Managing Teams and Departments – Peter Leshaw, Matt Cullen

Here are their recommendations.

Hiring:

- Make sure you ask for specifics when interviewing SEO candidates. What are the exact steps they take to complete tasks? Don’t let them generalise (source)

- Hiring good talent can take 6 months to over a year! (source)

- “Don’t settle for general answers when you’re asking specific questions.” Dodging questions is never a good way to perform an interview. (source)

- SEOs should be aware of legal terms such as “Fair Use” and other licenses related to content distribution and publication. (source)

- When hiring SEOs for in-house roles, make sure they have the skills to work with other teams such as Devs, Legal, UX, etc. (source)

- When hiring a good SEO, make sure they’re able to talk to IT specialists, either on the client-side or internally. Things like log analysis and site security may require specific technical knowledge. (source)

- A good SEO should also have some knowledge of reputation management and social media. Often more used when doing linkbuilding, this knowledge can be used to catch things that may have slipped past the PR team. (source)

Creating culture and nurturing teams:

- “Culture of Testing” – Always be testing, whatever you’re doing, it can be optimized, automated or improved. (source)

- Getting the ‘suits’ on your side as an SEO is paramount! (source)

- Stay on top of the industry. Read the blogs/books published by industry experts for their POV and bring your team to conferences for continued education (paraphrased) (source)

This is the book @peterleshaw referenced – GREAT READ Hiring Talent: Decoding Levels of Work in the Behavioral Interview

by Tom Foster #Pubcon https://t.co/e5Gs7KOErE

— Brian McDowell (@brian_mcdowell) March 7, 2019