Are you a seasoned Oncrawl user who knows the platform like the back of your hand? Or have you recently partnered with us and are looking for the top insider tips to get you started?

As a member of the CSM team here at Oncrawl, I have a particular view on the features that I’ve noticed are underused but provide tremendous value.

Regardless of your situation, here is a round-up of my top 5 underrated Oncrawl features that you may have overlooked or just don’t know about yet!

Data scraping & custom fields

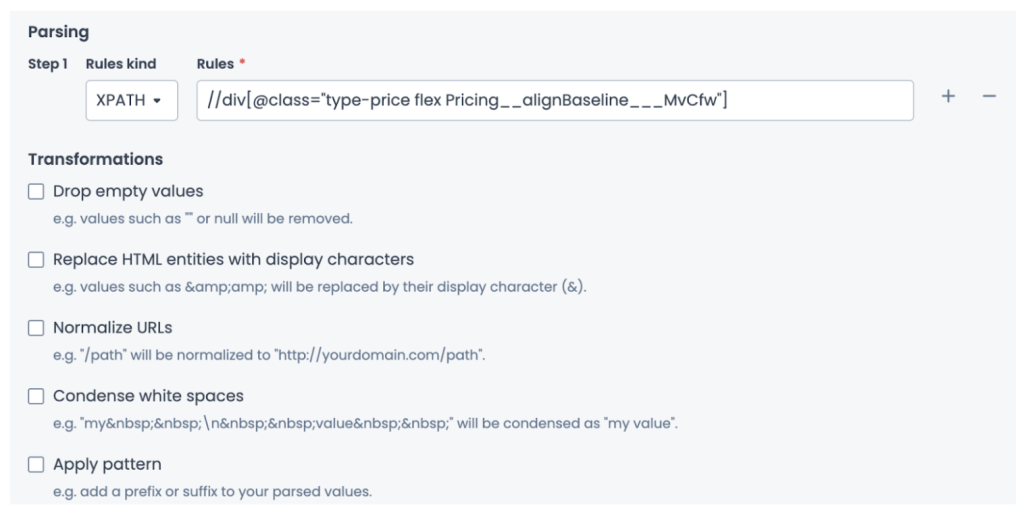

A brief introduction to custom fields at Oncrawl: we offer two methods for scraping, XPath and Regex. Before going any further, if you need a deeper look at web scraping (that is, data extraction from websites), check out our resource on scraping, which reviews its useful functions with relevant examples.

Now, the custom fields feature may not necessarily be groundbreaking, but what is incredibly useful is the option to mix both Regex and XPath rather than being limited to only one method.

To put it simply, Regex and XPath are methods to find specific things in a whole lot of data. Rather than doing individual searches for text, you can do a search based on a pattern.

Let’s say you want to identify the month in this text: “August 5”. Rather than searching for “August”, with Regex you can use \w+ to pick up a run of word characters, which matches whatever month name appears. XPath, on the other hand, lets you display text elements from an XML or HTML document.
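To see what \w+ does with that text, here is a minimal sketch using Python’s built-in re module. It only illustrates the pattern itself; how Oncrawl applies a Regex rule inside a custom field may differ.

```python
import re

text = "August 5"

# \w+ matches one or more consecutive word characters
# (letters, digits, or underscore).
# re.search returns the first match, which here is the month name.
month = re.search(r"\w+", text).group()

# re.findall returns every run of word characters in the text.
tokens = re.findall(r"\w+", text)

print(month)   # August
print(tokens)  # ['August', '5']
```

Because the pattern describes a shape rather than a literal word, the same rule would pick up “March” in “March 12” without any change.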

Or, let’s say you want to display the content within a div element. You’ll start off with //div[@class= and finish with the class name and a closing bracket, for example //div[@class="your-class-name"]. In some cases you just want to display the content, in others you want to identify a specific pattern, but sometimes you need a mix of both.
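As a rough illustration of that kind of XPath expression, the sketch below runs one against a small, hypothetical page fragment using Python’s standard library. The markup and the class name "breadcrumb" are assumptions made up for this example. ElementTree supports only a subset of XPath and expects well-formed markup, so treat this as a way to reason about the expression rather than a copy of how the crawler evaluates it.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed page fragment used only for illustration.
html = """
<html>
  <body>
    <div class="breadcrumb">Home / Shoes / Sneakers</div>
    <div class="description">Lightweight running shoe.</div>
  </body>
</html>
""".strip()

root = ET.fromstring(html)

# ".//" searches all descendants of the root element;
# the predicate keeps only div elements with the given class attribute.
matches = root.findall(".//div[@class='breadcrumb']")
breadcrumb = [div.text for div in matches]

print(breadcrumb)  # ['Home / Shoes / Sneakers']
```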

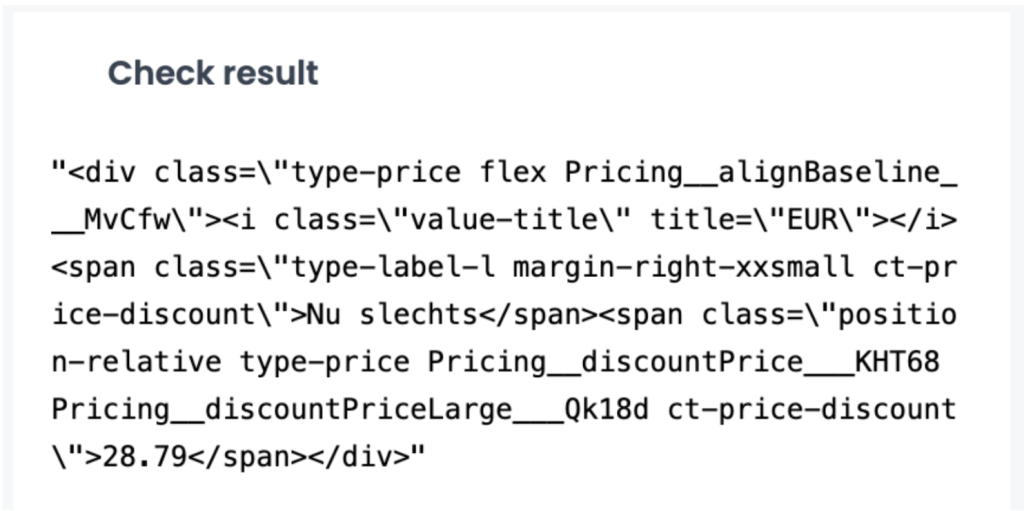

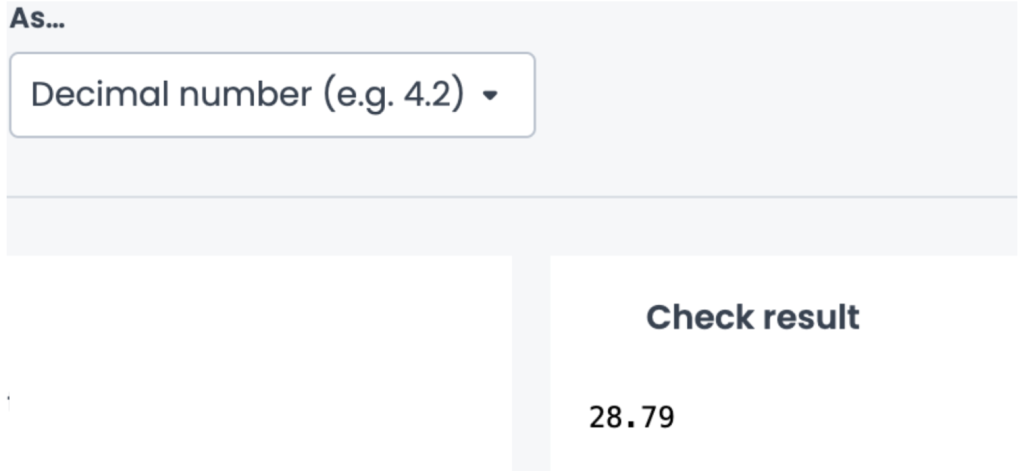

Let’s look at a specific example to make things a bit more concrete. Imagine you need to scrape the price for all product items on a site. In my first rule, I’m using XPath and you can see that I’m pulling the raw data to verify that I’m picking up the pricing element:

I’ll add a second rule to clean the export so it focuses only on the price value, then use Regex as my third rule to capture only digits, a non-digit separator, and more digits, which in this case is the price: (\d+\D+\d+).
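The three-rule chain can be sketched outside the platform like this. The markup, the class name "price", and the clean-up step (trimming whitespace) are assumptions for illustration; only the final pattern, (\d+\D+\d+), comes from the example above.

```python
import re
import xml.etree.ElementTree as ET

# Hypothetical product markup; the class name "price" is an assumption.
page = '<div><span class="price">  Price: 19.99 EUR  </span></div>'
root = ET.fromstring(page)

# Rule 1 (XPath): pull the raw text to confirm the pricing element is found.
raw = root.find(".//span[@class='price']").text

# Rule 2 (clean-up, assumed here to be simple whitespace trimming).
cleaned = raw.strip()

# Rule 3 (Regex): digits, then one or more non-digits, then digits.
price = re.search(r"(\d+\D+\d+)", cleaned).group(1)

print(cleaned)  # Price: 19.99 EUR
print(price)    # 19.99
```

Note that this pattern captures exactly two groups of digits, so a value written as 1,299.00 would come back as 1,299. If your prices use thousands separators, the pattern would need adjusting.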

The flexibility to use both methods makes adding scraping rules more efficient and gives you more options on data to scrape and combine in order to find more insights depending on your industry.

I’m using price for this example, but you can consider scraping the publication and modified dates for articles, the stock availability on product detail pages (PDPs), or the breadcrumbs to better visualize your crawled pages with segmentations.

Instant filters with a keyboard shortcut

One of the smallest yet most powerful time-savers in the platform is the instant filter feature, which uses a simple keyboard shortcut. Whether you’re deep into a crawl report or casually browsing a weekly crawl, the instant filter helps you focus your analysis on what’s caught your eye.

Picture this: you’re reviewing a crawl report and spot a spike in non-indexable pages due to a meta robots tag. You want to investigate further and see how this reflects on the other charts.

Instead of manually applying filters in the data explorer, you can simply hold Option (Mac) or Alt (Windows) and click on the segment you want to highlight. Instantly, every chart in the dashboard updates to reflect that exact filter.

Why is this useful?

- Faster insights: Explore patterns across multiple charts without leaving the report.

- Focused topic tracking: Highlight issues like indexability, performance, or crawl frequency with one simple click.

- Consistent filtering: No need to manually build the filter, so nothing gets missed.

Log alerts / notifications

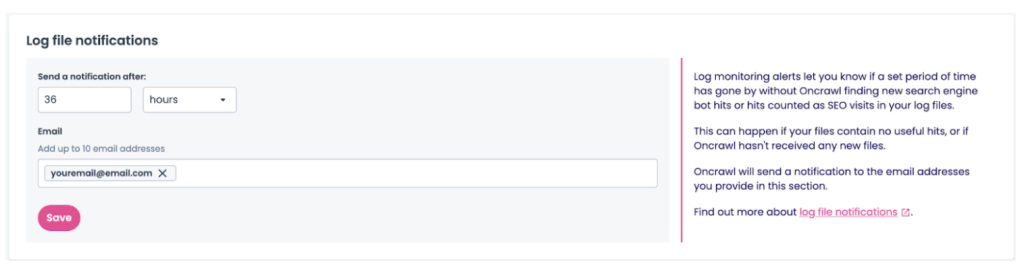

Log alerting is easily overlooked but crucial when monitoring your log files. Enabling it gives you the option to add up to 10 email addresses to be notified if no recent or usable log files have been deposited into your project.

Stumbling upon an issue with your log files can be a painful discovery and result in a long turnaround before it’s resolved. With log file notifications, you can set it and forget it. Set the amount of time after an issue arises when you should be notified, and you’ll be emailed if there haven’t been recent deposits or if what has been deposited isn’t considered useful.

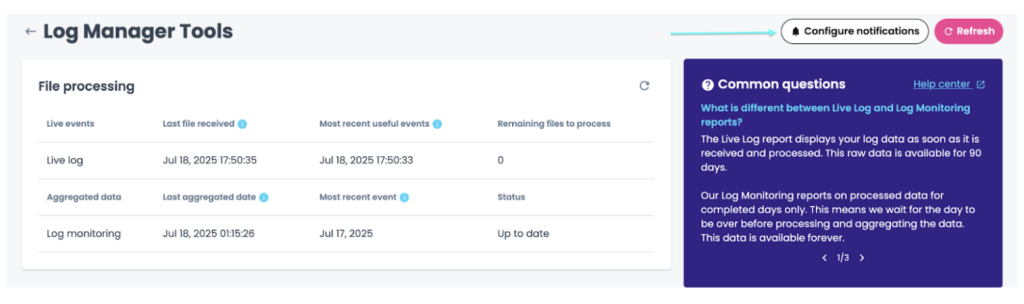

To set it up, head into a project that has the log monitoring feature enabled and click on Log Manager Tools. From there, click on Configure notifications.

At this point, you’ll be able to enable the notifications and define a threshold for when you should be contacted if your logs need attention.
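To reason about which threshold to pick, it can help to think of the alert as a simple age check on the last useful deposit. The sketch below is only a mental model: the function name, the 48-hour threshold, and the dates are hypothetical, and it is not a description of how Oncrawl implements notifications.

```python
from datetime import datetime, timedelta


def needs_alert(last_useful_deposit: datetime, now: datetime,
                threshold: timedelta) -> bool:
    """Return True when the last useful deposit is older than the threshold."""
    return now - last_useful_deposit > threshold


# Hypothetical values for illustration.
now = datetime(2024, 5, 10, 12, 0)
threshold = timedelta(hours=48)

recent = needs_alert(datetime(2024, 5, 9, 12, 0), now, threshold)  # 24h old
stale = needs_alert(datetime(2024, 5, 7, 12, 0), now, threshold)   # 72h old

print(recent)  # False
print(stale)   # True
```

A shorter threshold surfaces problems sooner but may trigger on a deposit that is merely late, so it is worth matching the threshold to how often your logs are normally deposited.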

This can save time in uncovering what exactly has gone wrong with some deposits, which gets you that much closer to having up-to-date log reports.

Log manager tools

While on the topic of logs, it seems fitting to mention that the Log Manager Tools report is another great area of the platform that isn’t utilized as often as it could be. It’s a nice little hub tucked away at the project level that contains the details related to each log file deposited.

Not only are you going to see the exact files that have been deposited, you’ll also see the exact date and time of each deposit, the breakdown of each type of log line (OK, filtered, erroneous), a graph that monitors the number of fake bot hits detected per date of deposit, and a breakdown of the quality of the logs deposited and the distribution of useful lines.

It’s a great place to check on the quality of the file deposits. For example, this could mean making sure the files are compressed, verifying that what is being deposited is SEO related (as in organic visits and bot hits), and checking the frequency of the deposits.

If you start to notice anything odd with your log reports, a great place to start your investigation is in the Log Manager Tools. You might discover that the log line format has changed, the parser needs to be updated, or maybe that the bucket name has changed and you need to send us new credentials. Whatever the case may be, you can always take a look and reach out to us if you need help diving a bit deeper.

OQL filter on dashboards

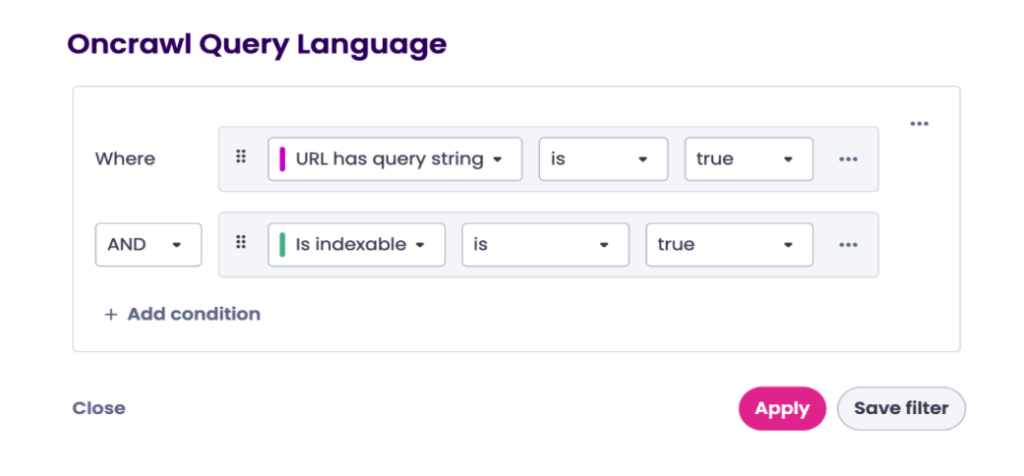

Nestled next to the segmentation drop-down lives the ever-convenient OQL filter, which deserves a highlight. When used, it adds a level of convenience to your crawl report checks. OQL, which stands for Oncrawl Query Language, is a simple way of filtering through your Oncrawl data.

For example, if you’re trying to find every link pointing to 404s, you can use this OQL filter in Oncrawl.

It’s how you unlock all the hidden gems while deep diving through your crawl data. While you can directly use the OQL in the data explorer, you actually have the option to use it and apply it directly on all the dashboards within your project.

Similar to how segmentation lets you bucket the crawled pages into groups to visualize on a graph, the OQL filter is a dynamic feature that lets you focus only on the URLs that you’re prioritizing within that group.

Let’s take a look at a use case to see how it can be useful. If you’re looking at a recent crawl report and you want to check only the pages that are parameterized but are indexable, you’ll first want to click on Filter.

Then select the OQL filter to visualize URLs that have a query string and are considered indexable.

This will show you the number of pages that have a query string and are also indexable, so you can see whether the management strategy for parameterized URLs is correct or if something needs to be adjusted.
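If it helps to picture what that filter selects, here is a plain-Python sketch of the same logic applied to a few made-up rows. This is not OQL syntax, and the "indexable" key and the URLs are hypothetical; it only mirrors the two conditions combined in the filter.

```python
from urllib.parse import urlsplit

# Hypothetical crawl rows; the "indexable" key is an illustrative name.
pages = [
    {"url": "https://example.com/shoes", "indexable": True},
    {"url": "https://example.com/shoes?color=red", "indexable": True},
    {"url": "https://example.com/shoes?sort=price", "indexable": False},
]


def is_parameterized(url: str) -> bool:
    """Return True when the URL carries a non-empty query string."""
    return bool(urlsplit(url).query)


# Keep only URLs that have a query string AND are indexable.
selected = [
    p["url"] for p in pages
    if is_parameterized(p["url"]) and p["indexable"]
]

print(selected)  # ['https://example.com/shoes?color=red']
```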

Applying OQL filters directly to dashboards gives you:

- Faster troubleshooting, narrowing down specific URLs.

- A more targeted analysis, filtering out URLs that might be creating noise.

- A custom option when reviewing the dashboards.

Whether you’re looking at the big picture or digging into the details, these underrated features might become your new favorites.