Why use advanced segmentation?

Segmenting your data lets you highlight specific issues and focus on specific samples of pages. It is often used as an additional angle for analyzing your data, alongside the classic view based on URL structure. Erlé wrote an excellent article about using advanced segmentations.

Today, we will focus on the most relevant segmentations, intended to illustrate the current state of your website and to highlight optimizations you’ve implemented. These are ideal segmentations to use when creating reports for C-Level readers. Although results vary widely with your industry and your volume of URLs, SEO needs are universal: crystal-clear explanations of the actions undertaken, in terms that others can easily understand and apply.

Using accurate data to build strong and transparent reports

Applying segmentation to your log data is the best way to create a benchmark of your current SEO strategy. In terms of reporting, it allows you to easily demonstrate Google’s behavior on your website.

Note: the screenshots used in this article come from websites in different industries. However, all of these websites contain several million URLs.

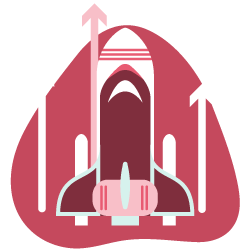

The first use case consists of identifying URLs that can easily be established as useful and necessary for Google to crawl (“Crawl efficient” in the image below) as well as URLs with little added value, outside the scope of your actions (“Crawl waste” in the image).

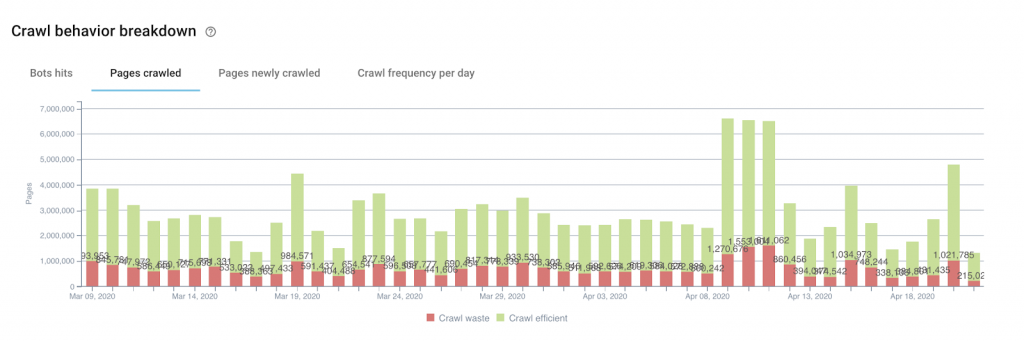

We can also apply the same segmentation logic to the number of bot hits, to illustrate the same issue in terms of daily crawl hits (the same page can receive several visits from Googlebot).

How to build this type of segmentation

Each website has its own particularities, but the first and easiest way to segment URLs is based on URL patterns. Some URLs, identifiable by their structure, represent your money pages, while others are of little value, or are even counterproductive, for your performance.

The crawl waste can be identified using patterns for the following types of URLs:

- Pagination

- URLs with parameters

- Internal search results

- Incorrectly structured URLs / wrong encoding

- Non-canonical URLs

- Single or multiple facets

- URLs with specific IDs
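As a minimal sketch of pattern-based segmentation, the snippet below tags a URL as crawl waste as soon as it matches one of a few regular expressions. The patterns, the facet parameter names (`color`, `size`, `brand`) and the function name `segment_url` are hypothetical examples, not rules taken from any particular website; you would need to replace them with patterns that reflect your own URL structure.

```python
import re

# Hypothetical patterns: adapt each one to your own URL structure.
# Order matters: the first matching pattern wins.
CRAWL_WASTE_PATTERNS = {
    "pagination": re.compile(r"[?&]page=\d+|/page/\d+"),
    "internal_search": re.compile(r"/search(/|\?|$)"),
    "facets": re.compile(r"[?&](color|size|brand)="),
    "parameters": re.compile(r"\?"),
}


def segment_url(url: str) -> str:
    """Return 'crawl waste (<reason>)' for the first matching pattern,
    or 'crawl efficient' if no pattern matches."""
    for reason, pattern in CRAWL_WASTE_PATTERNS.items():
        if pattern.search(url):
            return f"crawl waste ({reason})"
    return "crawl efficient"
```

Listing the most specific patterns first keeps the generic "parameters" rule as a fallback, so that a paginated or faceted URL is reported under its more precise reason.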

Building this kind of segmentation often takes a lot of time and effort, especially when the number of URLs is huge. Take this into account and set up this sort of segmentation as early as possible, so that you can draw up an initial benchmark of the situation on your website based on real data. This will allow you to compare apples to apples later on.

The advantage of this type of segmentation is that the data comes directly from Google’s actions, as recorded in the log files. The data does not lie: it is the perfect reflection of reality. (…if, of course, your log files are complete. But that’s another subject! We’ll talk about it later.)
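To illustrate how log data can feed such a segmentation, here is a rough sketch that counts Googlebot hits per day and per segment. It assumes that your server writes the common "combined" access log format and that bots are identified by the user agent string alone, which is a simplification. The function name `daily_bot_hits` and the rule "any URL with a query string is crawl waste" are placeholders for illustration only.

```python
import re
from collections import Counter

# Assumes the "combined" access log format; adjust the regex to your server.
LOG_LINE = re.compile(
    r'\[(?P<day>[^:]+):[^\]]+\] "(?:GET|HEAD) (?P<url>\S+) [^"]*" '
    r'\d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def daily_bot_hits(lines):
    """Count Googlebot hits per (day, segment) from raw access log lines."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        # Skip unparsable lines and hits from other user agents.
        if not match or "Googlebot" not in match["agent"]:
            continue
        # Placeholder rule: treat any URL with a query string as crawl waste.
        segment = "crawl waste" if "?" in match["url"] else "crawl efficient"
        hits[(match["day"], segment)] += 1
    return hits
```

Because every hit is counted, a page that Googlebot visits several times on the same day contributes several hits to its segment.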

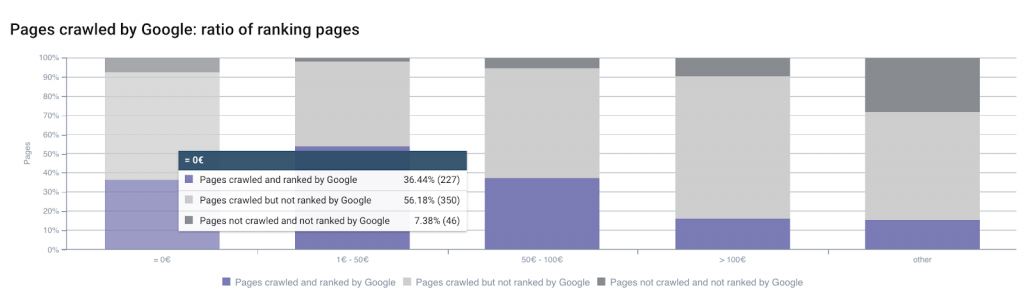

We also use another type of segmentation, based on the value of the page. You can calculate page value as: (e-commerce revenue + total goal value) / number of unique pageviews for the page in question. This segmentation lets you easily demonstrate the following two elements:

- The level of criticality of an SEO issue.

- The importance of a batch of optimizations to be made on a specific sample of pages.
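The page value calculation described above can be written as a small helper. This is only a sketch: the function and parameter names are my own labels for the three quantities in the formula, and returning zero for a page with no visits is an assumption made to avoid a division by zero.

```python
def page_value(ecommerce_revenue: float,
               total_goal_value: float,
               unique_pageviews: int) -> float:
    """(e-commerce revenue + total goal value) / unique pageviews of the page."""
    if unique_pageviews == 0:
        # Assumption: a page that was never viewed is given a value of 0.
        return 0.0
    return (ecommerce_revenue + total_goal_value) / unique_pageviews
```

For example, a page with 1,200 in e-commerce revenue, 300 in goal value and 500 unique pageviews has a page value of 3.0.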

Which data to use

The first thing to do is to import the data from Analytics by retrieving the page value of each URL that is displayed after you apply a filter on organic traffic (traffic from natural search results).

To do this, navigate to:

- Behavior

- All pages

- Apply the filter: organic traffic

This allows you to obtain an export of URLs for the period you are analyzing.
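Once you have the export, a short script can turn page values into segments. The sketch below assumes a CSV export with two columns named `Page` and `Page Value` containing plain numbers (no currency symbol); these column names, the `high` threshold and the three segment labels are hypothetical and should be adapted to your own export and business context.

```python
import csv
import io


def segment_by_page_value(csv_text: str, high: float = 1.0) -> dict:
    """Map each URL to 'no value', 'low value' or 'high value'."""
    segments = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        value = float(row["Page Value"])  # hypothetical column name
        if value == 0:
            segments[row["Page"]] = "no value"
        elif value < high:
            segments[row["Page"]] = "low value"
        else:
            segments[row["Page"]] = "high value"
    return segments
```

The resulting mapping can then be used to group pages by segment when you look at a given SEO issue or a planned batch of optimizations.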

This segmentation has the advantage of relying on business data, which is perfect for reporting. Even though SEO has deservedly been called an inexact science, you can still rely on this data to support your case when arguing for an optimization strategy.

Simplify your reporting with segmentation

These segmentations, though they sometimes take a long time to set up, have the power to simplify your reporting:

- The first segmentation we looked at is based on a simple idea: is Google’s visit to this page beneficial to the goal of acquiring traffic?

- The second allows you to answer the following question: can I hope to increase the value of my page by correcting this kind of problem?*

These segmentations are also easy to explain because they are based on tangible and concrete elements.

During a first audit, for example, they will allow you to draw up an initial picture of the state of things in just a few minutes!

* Of course, there is no guarantee that the value of a page will be increased by correcting a particular technical element, but the analysis of the ranking factors (in Oncrawl’s Ranking report) allows a better understanding of how the different positioning signals are weighted in relation to the historical data for your site.