One of the biggest challenges for many sites today is managing their massive quantity of content.

Unlike in the early years of the web, most “big” websites have now reached a certain digital maturity and often have an increasingly extensive collection of content. It is not uncommon to deal with sites that have several hundred or even several thousand pages… and that means a lot of content!

The goal here is to find out which of the existing pages are the best candidates for content optimization. Among this mass of content, how should you prioritize? How should you direct your efforts (and your budget) to obtain the greatest advantage?

That’s where Oncrawl comes in: Oncrawl will make it easier for us to segment, sort and prioritize our content library to make strategic choices based on tangible data.

Optimize your existing content with Oncrawl

Let’s take a concrete example: we have a site with several thousand pages and have been publishing articles regularly for several years, but we have no clear idea of how many pages there are or how they perform. The company’s goal is to get an understanding of the current SEO performance of the existing articles and to generate more organic traffic with them.

In order for this analysis to be relevant, an important first step is to develop a segmentation strategy in line with the company’s goals and the situation.

Here is the crawl of a site:

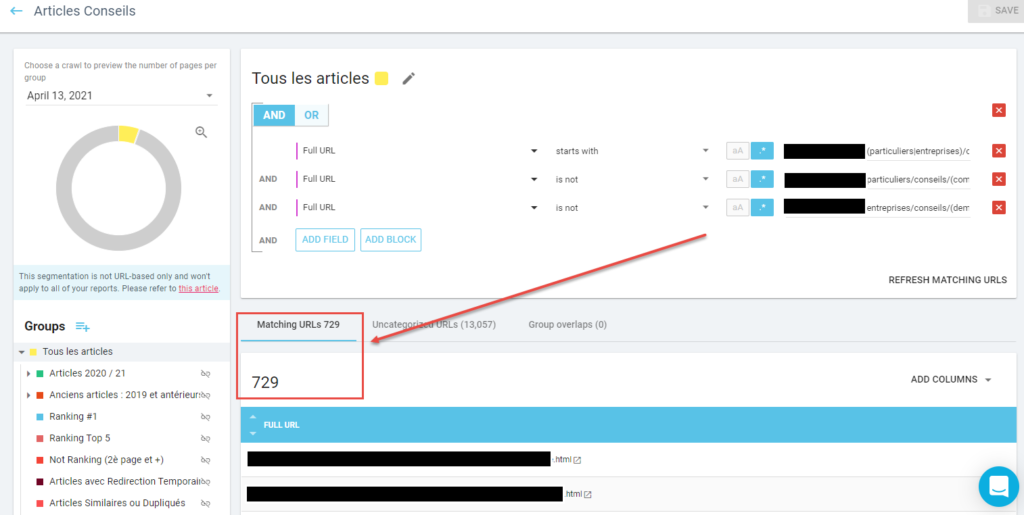

In this set of pages, we are going to isolate our “Articles” section in order to only concentrate on data related to this section. To do this, we’ll select the “Tous les articles” (“All articles”) segmentation we created for this purpose in Oncrawl:

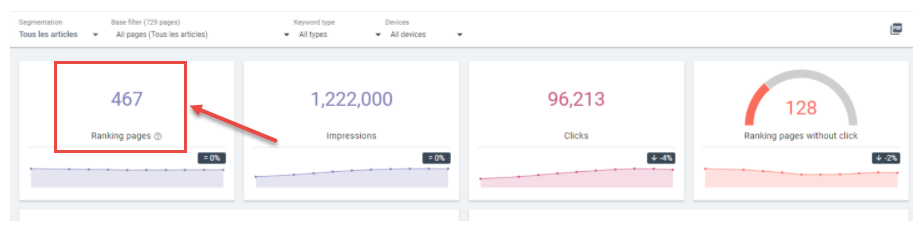

The “Ranking Report” in the crawl results will allow us to get an initial overview of the performance of our 729 articles:

At first sight, only 467 articles are ranked by search engines:

We can also create subgroups in our segmentations to refine our analysis. In this case, we’ve grouped articles by publication date:

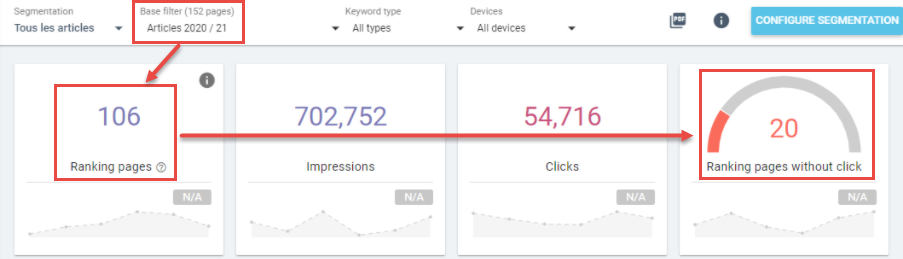

- Recent articles (2021 and 2020):

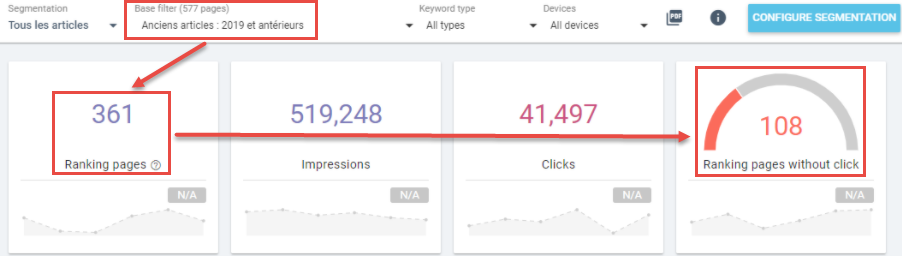

- Older articles (2019 and earlier):

We already have some significant observations:

- Of the 729 articles, we can see that 128 (20 + 108) do not receive any clicks from search engine results pages.

- We can also see that the articles in the “2020/21” group generate more traffic, although they are clearly less numerous than those in the “2019 and earlier” group.

We can therefore assume that it is better to concentrate our efforts on the older articles.
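
As a rough illustration of this kind of grouping outside the platform, here is a minimal Python sketch. It assumes a hypothetical export with one row per article (URL, publication year, clicks); the sample rows and the `period` and `summarize` helpers are invented for the example and are not part of Oncrawl.

```python
from collections import defaultdict

# Hypothetical export rows: (url, publication_year, clicks). Illustrative values only.
articles = [
    ("/blog/a", 2021, 120),
    ("/blog/b", 2020, 0),
    ("/blog/c", 2019, 35),
    ("/blog/d", 2017, 0),
    ("/blog/e", 2018, 0),
]


def period(year):
    """Assign an article to one of the two publication-date groups."""
    return "2020/21" if year >= 2020 else "2019 and earlier"


def summarize(rows):
    """Count articles, zero-click articles and total clicks per group."""
    summary = defaultdict(lambda: {"articles": 0, "no_clicks": 0, "clicks": 0})
    for _url, year, clicks in rows:
        group = summary[period(year)]
        group["articles"] += 1
        group["clicks"] += clicks
        if clicks == 0:
            group["no_clicks"] += 1
    return dict(summary)


summary = summarize(articles)
for name, stats in summary.items():
    print(name, stats)
```

Comparing the number of articles with the clicks they generate, group by group, is what lets us decide where the effort is most likely to pay off.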

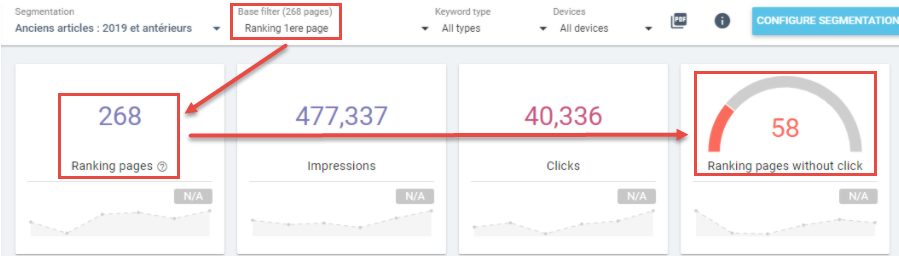

Oncrawl will allow us to create multiple filters that can be applied to our segmentation to refine our analysis, such as isolating the articles that are on the 1st page of results:

We now know that of the 358 articles published in 2019 or earlier, 268 ranked on the 1st page. Of these, 58 received no organic visits.

Here, multiple options are available, but if we want to be cost- and time-efficient, we could:

- remove and redirect the articles that don’t receive visits

- dig deeper into the optimization of articles that are already receiving clicks.
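
The split between these two options can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the field names `avg_position` and `visits` are hypothetical, and "first page" is approximated here as an average position of 10 or better.

```python
# Hypothetical rows; "avg_position" and "visits" are assumed field names.
pages = [
    {"url": "/blog/a", "avg_position": 2.4, "visits": 310},
    {"url": "/blog/b", "avg_position": 7.9, "visits": 0},
    {"url": "/blog/c", "avg_position": 14.0, "visits": 12},
    {"url": "/blog/d", "avg_position": 9.1, "visits": 45},
    {"url": "/blog/e", "avg_position": 5.5, "visits": 0},
]

# Assumption: first page of results = average position of 10 or better.
FIRST_PAGE_MAX_POSITION = 10


def split_first_page(rows, max_position=FIRST_PAGE_MAX_POSITION):
    """Keep first-page articles, then separate those with and without visits."""
    first_page = [r for r in rows if r["avg_position"] <= max_position]
    no_visits = [r["url"] for r in first_page if r["visits"] == 0]
    with_visits = [r["url"] for r in first_page if r["visits"] > 0]
    return no_visits, with_visits


redirect_candidates, optimize_candidates = split_first_page(pages)
print("Candidates for removal and redirection:", redirect_candidates)
print("Candidates for deeper optimization:", optimize_candidates)
```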

This example shows us the importance of having a clear picture of the current SEO performance of pages in the site section we are interested in: this allows us to draw relevant and actionable insights.

Your brain is your best asset for this and Oncrawl is your best ally because it allows you to define one or more precise segmentations that can be customized to each particular case!

Analyze the content-based factors influencing your site’s performance

For this example, we are going to focus on the segmentation that we created for the example above, namely the 268 articles published in 2019 or earlier that rank on the 1st page of Google.

The goal here is to analyze the factors that influence the SEO performance of these articles in order to determine priority pages later on.

It is important to note that each industry is different: even if some of these factors appear to be the same, they come with nuances specific to each industry. It is essential to adapt the factors you examine based on your industry and your site. Thanks to Oncrawl, we will be able to base our analysis on data specific to our site.

1. The factors related to visible content on the site (on-page)

The content on a page is obviously an essential factor, both in terms of quality and quantity.

In order to analyze the role these factors play for the content on the pages, we will take a closer look at the “Ranking factors” report of Oncrawl.

Quantity:

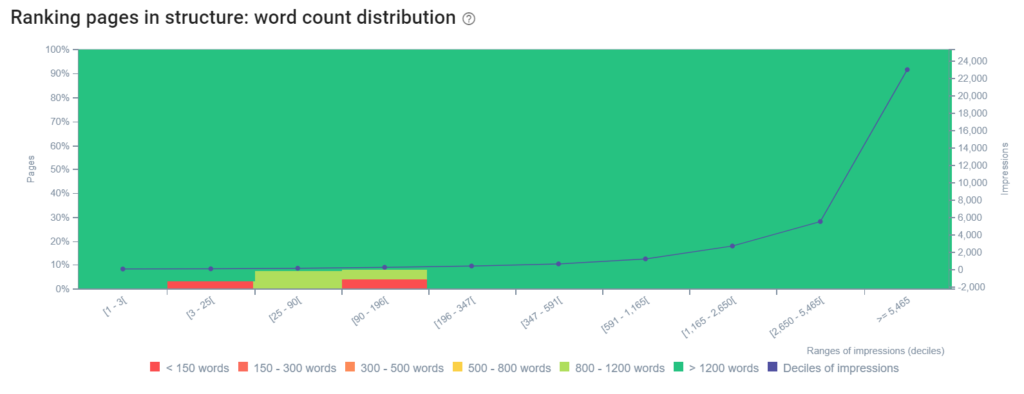

To rank in the Google search results pages for these different queries, the content of your article needs to be sufficiently long. There is no single optimal length, but there is a correlation between the length of a text and the performance of a page.

If we take a closer look at the data on our site, we can see that the vast majority of the articles on the site are made up of more than 1,200 words, but that a few pages have fewer words. These pages all generate few impressions. By creating more content with long-tail keywords and providing more context, we could possibly improve the impressions of these articles, and therefore, generate more organic traffic.
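
To make the idea concrete, here is a minimal Python sketch that flags short articles. The 1,200-word threshold comes from the observation above; the page texts are placeholders, and splitting on whitespace is only a rough approximation of how a crawler counts words.

```python
WORD_THRESHOLD = 1200  # taken from the observation on our site, not a universal rule


def word_count(text):
    """Rough word count: split on whitespace."""
    return len(text.split())


# Hypothetical page texts; real content would come from a crawl or an export.
page_texts = {
    "/blog/a": "seo " * 1500,
    "/blog/b": "seo " * 300,
}

short_pages = [
    url for url, text in page_texts.items() if word_count(text) < WORD_THRESHOLD
]
print("Articles below the threshold:", short_pages)
```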

Quality:

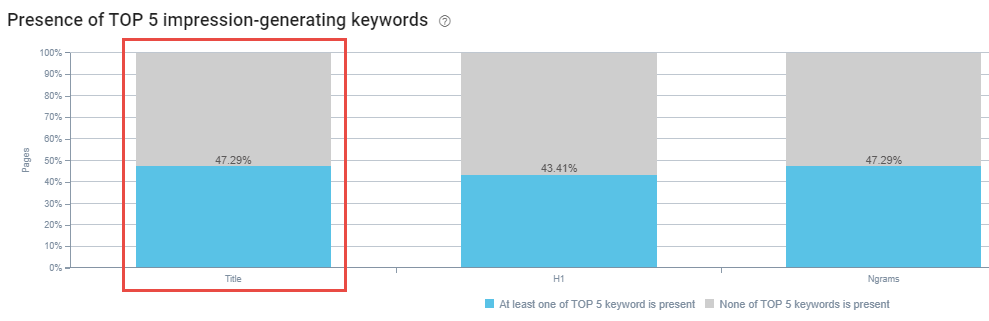

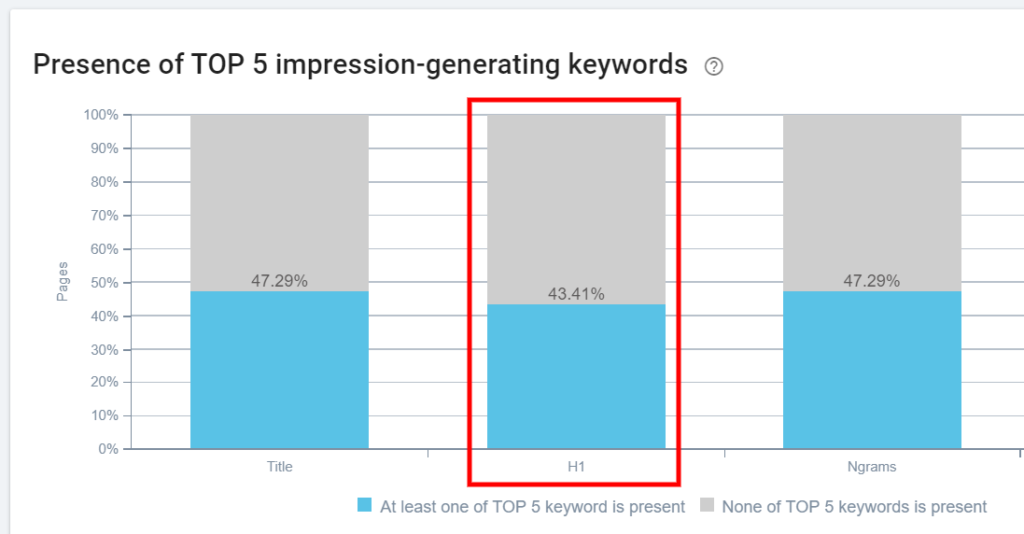

Hn tags are important to help provide context to Google with regard to the subject matter. They are what will structure the content of your page, like a table of contents in a way. The most important is the H1 tag, which should be the title of your page. It is essential to optimize it accordingly by including one or more keywords for which your article ranks.

We can see on our site that 43.41% of our H1 tags do not contain at least one of the top 5 keywords in terms of impressions for the page. This gives us a significant number of pages on which the H1 tag could be optimized.
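
The check behind this figure can be approximated as follows. This is a simplified sketch, not Oncrawl's actual computation: it uses plain case-insensitive substring matching, and the pages, H1 texts and keyword impressions are invented for the example.

```python
def top_keywords(keyword_impressions, n=5):
    """Return the n keywords with the most impressions for a page."""
    ranked = sorted(keyword_impressions.items(), key=lambda kv: kv[1], reverse=True)
    return [keyword for keyword, _ in ranked[:n]]


def h1_contains_top_keyword(h1, keyword_impressions, n=5):
    """True if the H1 contains at least one of the page's top n keywords."""
    h1_lower = h1.lower()
    return any(kw.lower() in h1_lower for kw in top_keywords(keyword_impressions, n))


# Hypothetical data: one H1 and a {keyword: impressions} mapping per page.
pages = [
    {"url": "/blog/a", "h1": "How to Bake Sourdough Bread",
     "keywords": {"sourdough bread": 900, "bake bread": 400}},
    {"url": "/blog/b", "h1": "Our Thoughts on Flour",
     "keywords": {"best bread flour": 700, "flour types": 300}},
]

missing = [
    p["url"] for p in pages
    if not h1_contains_top_keyword(p["h1"], p["keywords"])
]
share_missing = 100 * len(missing) / len(pages)
print(f"{share_missing:.2f}% of H1 tags lack a top-5 keyword: {missing}")
```

Exact substring matching is strict: a real analysis might also want to account for plurals or word order.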

2. The factors related to content visible on Google SERPs (meta tags)

Apart from factors purely related to the visible content on a page, some of the main (and oldest!) SEO elements for a page are in the head section of the page’s source code, namely the meta title and meta description tags.

While the meta title tag will have a direct impact on the ranking of a page in relation to a given query, the meta description tag will impact performance indirectly: it acts as an ‘incentive to click’, and will allow you to capitalize on your ranking by ‘making the user want’ to click on your link, and then transform this visibility into a visit… or even a lead!

It is in this context that the “Ranking factors” report from Oncrawl will be useful to help you easily discover different optimization options for articles on the 1st page of the SERPs. These are generally quick wins to improve traffic without having to rewrite the article.

- Optimize meta titles to improve organic visibility:

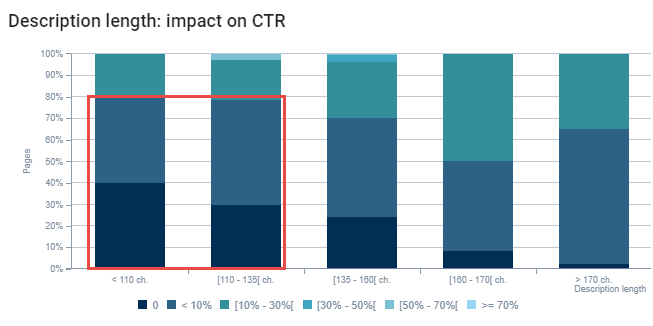

The first bar of the graph shows us that more than 47% of the title tags of our articles do not contain one of the 5 main keywords for which the page is ranked.

- Optimize the meta description to improve the CTR (click-through rate):

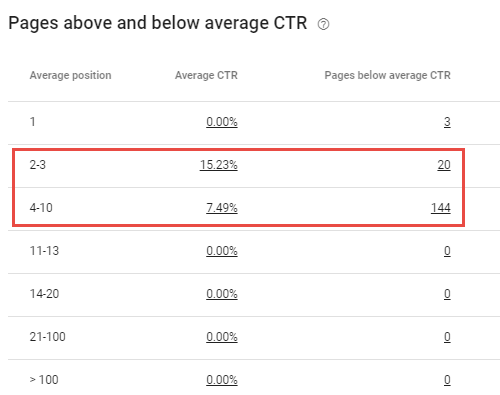

In this second optimization step, we will focus on the pages visible on the first page of the SERPs whose click through rate (CTR) is lower than the average of other similar pages:

In our example (URLs on the first page), the graph clearly shows a correlation between the length of the meta descriptions and the click-through rate.
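
One simple way to look for this kind of relationship in your own data is to group pages by meta description length and compare the CTR of each group. The sketch below is an assumption-laden illustration: the bucket boundaries (70 and 155 characters) and the sample rows are arbitrary choices made for the example.

```python
def length_bucket(length):
    """Assign a meta description length (in characters) to a bucket.
    The boundaries are arbitrary assumptions for this example."""
    if length < 70:
        return "short"
    if length <= 155:
        return "medium"
    return "long"


def ctr_by_bucket(rows):
    """Aggregate impressions and clicks per bucket, then compute CTR = clicks / impressions."""
    totals = {}
    for length, impressions, clicks in rows:
        bucket = totals.setdefault(length_bucket(length), [0, 0])
        bucket[0] += impressions
        bucket[1] += clicks
    return {
        name: clicks / impressions
        for name, (impressions, clicks) in totals.items()
        if impressions
    }


# Hypothetical rows: (meta description length, impressions, clicks).
rows = [
    (40, 1000, 10),
    (60, 500, 5),
    (120, 1000, 50),
    (150, 1000, 30),
    (200, 400, 8),
]

ctr = ctr_by_bucket(rows)
for name, value in ctr.items():
    print(f"{name}: {value:.2%}")
```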

Retrieving a list of relevant URLs by combining data using the Data Explorer

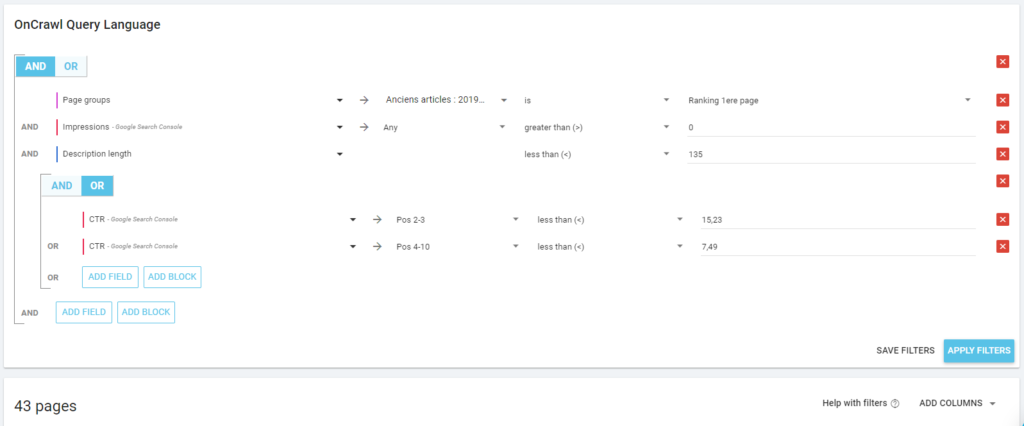

Thanks to Oncrawl’s “Ranking factors” report, we were able to identify SEO performance factors specific to our site. In order to combine certain correlating factors, we will use the Oncrawl Data Explorer, which will allow us to identify the most relevant pages.

As an example, let’s consider the optimization of meta descriptions to improve the CTR. We have two criteria to take into account: their length and their CTR according to their ranking.

By establishing the criteria following our analysis, we can deduce that there are 43 pages whose meta descriptions should be optimized to potentially improve their CTR.

However, let’s imagine that we can only optimize about 10 meta descriptions due to lack of time. In this case, we can continue to add criteria to zero in on the pages that interest us the most. Here, for example, we can choose the pages that generate more than 500 impressions, that is, the pages likely to offer the greatest return on investment if the CTR is improved.

So we just need to add the impression criterion to get a list of 12 URLs.
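
The logic of stacking criteria can be sketched in Python as shown below. The 500-impression threshold comes from the example above; the length threshold, the field names and the sample pages are hypothetical, and the average CTR is computed over the sample itself rather than over similar rankings.

```python
# Hypothetical pages; field names and values are assumptions for this sketch.
pages = [
    {"url": "/blog/a", "meta_desc_length": 60, "ctr": 0.010, "impressions": 1200},
    {"url": "/blog/b", "meta_desc_length": 150, "ctr": 0.050, "impressions": 3000},
    {"url": "/blog/c", "meta_desc_length": 50, "ctr": 0.015, "impressions": 300},
    {"url": "/blog/d", "meta_desc_length": 80, "ctr": 0.012, "impressions": 800},
]

MAX_LENGTH = 110       # assumed cut-off for a "too short" meta description
MIN_IMPRESSIONS = 500  # threshold used in the example above

average_ctr = sum(p["ctr"] for p in pages) / len(pages)

# Step 1: the two initial criteria (length and below-average CTR).
candidates = [
    p for p in pages
    if p["meta_desc_length"] < MAX_LENGTH and p["ctr"] < average_ctr
]

# Step 2: add the impression criterion, then sort by impressions (highest first).
shortlist = sorted(
    (p for p in candidates if p["impressions"] > MIN_IMPRESSIONS),
    key=lambda p: p["impressions"],
    reverse=True,
)
shortlist_urls = [p["url"] for p in shortlist]

print(len(candidates), "candidates before the impression filter")
print("Shortlist:", shortlist_urls)
```

Each added criterion narrows the list, so the order in which you add them matters less than choosing thresholds that reflect your own analysis.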

Of course, this is only an example. It is up to you to decide on the relevant criteria based on your general analysis. Interpreting Oncrawl’s “Ranking factors” report in light of your own needs and criteria will allow you to highlight the pages to optimize and to prioritize them according to the available time or budget.

Going further: Giving value to selected pages

The criteria you will use to focus on pages are diverse and will vary depending on each project/client: time, resources and effort required to carry out the implementation.

In addition to these purely SEO criteria, giving a dollar value to these pages and actions is often the argument that will allow management to green-light a project.

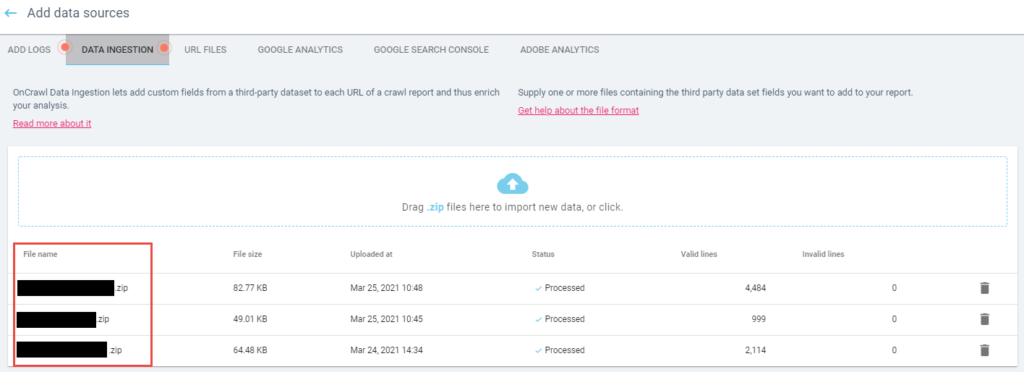

For this, the “Data Ingest” function – which you will need to activate during crawls – allows you to integrate and cross-reference data from your favorite SEO tools to improve insights: search volume and CPC of keywords, traffic value, number of backlinks and authority…

Depending on the type of site (e-commerce, informational, etc.) and its objective (transactional, lead generation, etc.) integrating additional metrics such as time spent per page or conversion rate can also be relevant.
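
As a hedged illustration of how a dollar value could be attached to an optimization, the sketch below estimates the value of the extra clicks a page would receive if its CTR reached a target. It assumes that CPC is an acceptable proxy for the value of an organic click, which is a simplification; the target CTR, the field names and the sample figures are invented for the example.

```python
TARGET_CTR = 0.05  # hypothetical CTR we hope to reach after optimization


def extra_click_value(impressions, current_ctr, target_ctr, cpc):
    """Estimated value of additional clicks: impressions x CTR gain x CPC.
    Pages already at or above the target get a value of 0."""
    extra_clicks = impressions * max(target_ctr - current_ctr, 0)
    return round(extra_clicks * cpc, 2)


# Hypothetical pages enriched with ingested CPC data.
pages = [
    {"url": "/blog/a", "impressions": 1000, "ctr": 0.02, "cpc": 1.50},
    {"url": "/blog/b", "impressions": 4000, "ctr": 0.04, "cpc": 0.50},
    {"url": "/blog/c", "impressions": 800, "ctr": 0.06, "cpc": 2.00},
]

values = {
    p["url"]: extra_click_value(p["impressions"], p["ctr"], TARGET_CTR, p["cpc"])
    for p in pages
}
ranking = sorted(values, key=values.get, reverse=True)

for url in ranking:
    print(f"{url}: ${values[url]:.2f}")
```

A ranking like this is only as good as its assumptions, but it gives management an order of magnitude to weigh against the cost of the work.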

The benefits of an SEO platform for content projects on large sites

In the world of SEO tools, Oncrawl is a real Swiss army knife that allows you to make precise decisions that are adapted to the context of each site/client, which is particularly useful when considering large projects… such as optimizing a content strategy!

Rewriting an article, deleting a page, or simply reviewing a title or meta tags?

One of the strengths of the platform is its “all in one” aspect: by allowing you to centralize and combine on- or off-site, third-party, technical, quantitative and qualitative metrics, Oncrawl keeps you from having to manipulate and export multiple spreadsheets. This limits the risk of human error, and it is easier to understand the data and choose the actions to take.

This gain in efficiency is an additional weapon for SEO: rather than applying a global and therefore costly strategy, being able to choose targeted actions will usually offer a much better return on investment … with growth that will be easy to track, crawl after crawl.

Written by:

Fabian Neuville – SEO specialist at Adviso

Pierre Jean Bertrand – SEO specialist at Adviso