What is Searchdexing (or Search Indexing)?

Searchdexing is a technique that allows pages of an internal search engine to be indexed. Usually, this should not be done unless the process is well-understood and has been planned as a means to improve SEO.

Indexing the result pages of an internal search engine can have major consequences for your SEO, just as indexing pages from a faceted filter engine can. If this is not done properly, you end up with a huge number of indexed pages that have thin content or that duplicate other pages in your site structure which were designed to convert.

If it is done well, however, this method will allow you to rank for long-tail queries.

How to set up Searchdexing

First of all, it is important to remember that it takes a relatively large website (e-commerce or media sites are often good candidates) for searchdexing to be effective. If you have a showcase site of 20 pages, this strategy may not be very relevant.

In addition, you need an internal search engine that your visitors actually use.

Search bar (Fnac.com)

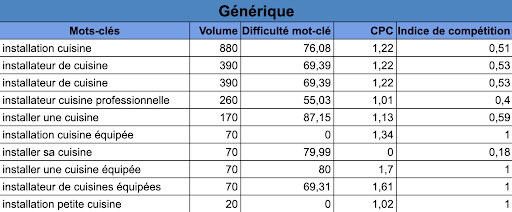

The first thing to do is to extract the queries entered by users on your search engine. Then, perform an analysis to eliminate duplicates and syntax errors.
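
As a minimal sketch of this cleaning step, the snippet below merges variants of the same query (case, accents, punctuation, extra spaces) and counts how often each one was searched. It assumes queries written in the Latin alphabet, and the `export` list is a hypothetical sample rather than real data. It does not correct misspellings, which would need an additional step such as a spell checker or manual review.

```python
import re
import unicodedata
from collections import Counter


def normalize_query(raw: str) -> str:
    """Lowercase, strip accents and punctuation, and collapse whitespace."""
    text = unicodedata.normalize("NFKD", raw.lower())
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = re.sub(r"[^a-z0-9\s]", " ", text)
    return re.sub(r"\s+", " ", text).strip()


def count_queries(raw_queries: list[str]) -> Counter:
    """Merge variants of the same query and count how often each was searched."""
    counts = Counter()
    for raw in raw_queries:
        query = normalize_query(raw)
        if query:  # skip entries that are empty after cleaning
            counts[query] += 1
    return counts


# Hypothetical export from the internal search engine
export = ["Canapé d'angle", "canape d angle ", "CANAPE D'ANGLE", "lampe de chevet", "??"]
print(count_queries(export).most_common())
# [('canape d angle', 3), ('lampe de chevet', 1)]
```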

As a second step, it is worth combining this extraction with a lexical and/or business analysis. The important thing is to focus on the queries that generate either searches or business for you. Be careful not to cannibalize the existing pages of your site structure!
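
One possible way to shortlist queries is sketched below. The `QueryStats` fields, the thresholds, and the `existing_targets` set (the queries your existing pages already target) are all assumptions made for illustration; adapt them to the data you actually have.

```python
from dataclasses import dataclass


@dataclass
class QueryStats:
    query: str      # normalized internal search query
    searches: int   # number of internal searches over the period
    revenue: float  # revenue attributed to sessions that used this query


def select_candidates(stats, existing_targets, min_searches=50, min_revenue=0.0):
    """Keep queries with enough demand or revenue that no existing page targets."""
    candidates = []
    for item in stats:
        if item.query in existing_targets:
            continue  # an existing page targets this query: avoid cannibalization
        if item.searches >= min_searches or item.revenue > min_revenue:
            candidates.append(item)
    # Most searched first, so a small manual test starts with the strongest queries
    return sorted(candidates, key=lambda s: s.searches, reverse=True)


# Hypothetical figures
stats = [
    QueryStats("canape d angle convertible", 320, 5400.0),
    QueryStats("canape", 2100, 30000.0),
    QueryStats("lampe licorne", 12, 0.0),
    QueryStats("table basse marbre", 8, 450.0),
]
for candidate in select_candidates(stats, existing_targets={"canape"}):
    print(candidate.query)
```

Here "canape" is skipped because a category page already targets it, and "lampe licorne" is dropped because it generates neither enough searches nor any revenue.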

Lexical study following an export of the internal search engine

Start with a test on a dozen or a hundred pages, no more. The important thing is to set up a first version that works, even if it is not automatic and requires manual set-up.

Internal linking and linking blocks

The goal of searchdexing is, of course, to create pages that can respond to a real demand from your internet users, but you should also take advantage of searchdexing to optimize your internal linking structure!

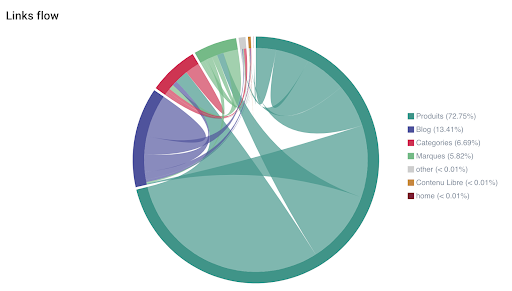

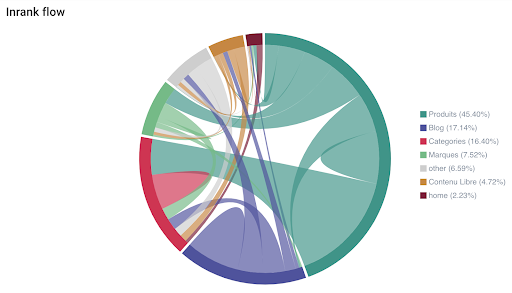

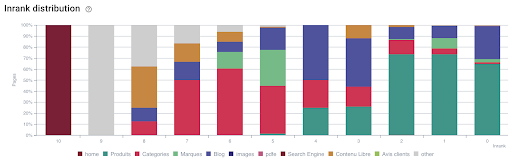

A first analysis of your internal linking should be carried out. For this, Oncrawl offers two important tools to keep in mind: the Inrank metric, and the Links flow and Inrank flow graphs. They allow a precise analysis of internal linking and of how popularity is passed between the different categories of your site. Moreover, since segmentations can be created, modified and applied to both future and pre-existing crawls, you can analyze this from any point of view (site categories, pages that convert, pages that generate sales, pages visited by Googlebot, etc.).

Once you have understood this notion of internal linking and popularity, you can start building searchdexing pages. There is no established way to do this, but here’s a suggestion.

Start by creating a page listing all your searchdexing pages. This will allow Google to crawl and index them faster. You can place this page in the footer or in a part of your menu.

Footer block searchdexing (Fnac.com)

As far as the construction of your URLs is concerned, it is worth making them easy to identify by adding, for example, a /sd/ directory to the URL. This will allow you to isolate these pages and analyze their impact on SEO.
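
A minimal sketch of such a URL scheme, assuming the /sd/ directory mentioned above and a simple slug built from the query. The function names are hypothetical and the slug rules are only one possible convention.

```python
import re
import unicodedata

SD_PREFIX = "/sd/"  # dedicated directory; any prefix works as long as it is unique


def searchdexing_path(query: str) -> str:
    """Turn a search query into a URL path under the searchdexing directory."""
    text = unicodedata.normalize("NFKD", query.lower())
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    slug = re.sub(r"[^a-z0-9]+", "-", text).strip("-")
    if not slug:
        raise ValueError(f"query {query!r} has no URL-safe characters")
    return f"{SD_PREFIX}{slug}"


def is_searchdexing_url(path: str) -> bool:
    """Identify searchdexing pages in crawl or log data by their prefix."""
    return path.startswith(SD_PREFIX)


print(searchdexing_path("Canapé d'angle convertible"))
# /sd/canape-d-angle-convertible
```

The same prefix can then be used as a rule in a segmentation or as a filter in your log analysis.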

Also, set up a “Tags” system if possible. It allows you to insert blocks of Searchdexing links in category pages that cover a closely related semantic field. For example, do not put a link to a page of decorative items on pages that talk about mattresses!

Searchdexing linking blocks

The block of searchdexing links can be placed on pages with identical or compatible tags.
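
As an illustration, the sketch below treats tags as "compatible" when a category page and a searchdexing page share at least one tag, and ranks pages by the number of shared tags. This rule, the tag names, and the URLs are assumptions; a real tag system may need a stricter definition.

```python
def pages_for_block(category_tags: set[str], sd_pages: dict[str, set[str]],
                    max_links: int = 5) -> list[str]:
    """Return searchdexing URLs sharing at least one tag with the category."""
    scored = []
    for url, tags in sd_pages.items():
        shared = len(category_tags & tags)
        if shared:
            scored.append((shared, url))
    # Most shared tags first, then alphabetical by URL for a stable order
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [url for _, url in scored[:max_links]]


# Hypothetical searchdexing pages and their tags
sd_pages = {
    "/sd/matelas-memoire-de-forme": {"bedding", "mattress"},
    "/sd/sommier-140x190": {"bedding", "bed-base"},
    "/sd/vase-en-ceramique": {"decoration"},
}
print(pages_for_block({"bedding", "mattress"}, sd_pages))
# ['/sd/matelas-memoire-de-forme', '/sd/sommier-140x190']
```

The decoration page is never linked from the mattress category because the two share no tag.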

This block of links can be shown in a simple form for a first version. For example, an H2 title and links with anchors in H3.
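
A first version of this block could be generated along these lines. The `sd-links` class name and the function name are hypothetical; only the H2 and H3 structure comes from the suggestion above.

```python
from html import escape


def render_link_block(title: str, links: list[tuple[str, str]]) -> str:
    """Render an H2 heading followed by one H3-wrapped link per page."""
    lines = ['<div class="sd-links">', f"  <h2>{escape(title)}</h2>"]
    for url, anchor in links:
        # Escape both the URL and the anchor text, since they come from user queries
        lines.append(f'  <h3><a href="{escape(url)}">{escape(anchor)}</a></h3>')
    lines.append("</div>")
    return "\n".join(lines)


print(render_link_block(
    "Popular searches",
    [("/sd/matelas-memoire-de-forme", "matelas mémoire de forme"),
     ("/sd/sommier-140x190", "sommier 140x190")],
))
```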

As Inrank and internal linking analysis can be done upstream, it’s best to target pages with a good Inrank. For that, I suggest that you use the Inrank distribution graph on Oncrawl, which cross-analyzes Inrank data with the active segmentation:

Inrank distribution by page group (Oncrawl)

Once again, Data Ingestion makes it possible to cross-analyze Inrank data with any external data, such as conversions, turnover, margin or keywords.

If you use a dynamic footer (a footer that adapts to the current category), it is also possible to place a block of Searchdexing links in this location.

I encourage you to read this article by Guillaume Giraudet-Bacchiolelli (209 Agency) about dynamic footers.

Content optimization

The content of these new Searchdexing pages should be processed similarly to the content of your category pages. You should have an optimized block of content, ideally at the top of the page, though it shouldn’t be too large. It is also possible to separate this content into two sections: a first part at the top of the page, and another, larger part at the bottom of the page.

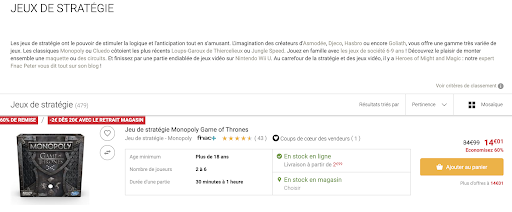

SEO content block on a Searchdexing page (Fnac.com)

Try to automate the writing of your title and meta description tags. Initially, if you do a test on a few dozen pages, it is quite possible to write them manually. But to make the system scalable, consider automation.
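
A simple template is often enough to start with. The sketch below is one possible approach: the wording of the templates, the brand name, and the length limits (common rules of thumb, not official limits) are all assumptions to adjust for your site.

```python
TITLE_LIMIT = 60         # rule-of-thumb length, not an official limit
DESCRIPTION_LIMIT = 155  # rule-of-thumb length, not an official limit


def truncate(text: str, limit: int) -> str:
    """Cut text at a word boundary so it fits within limit characters."""
    if len(text) <= limit:
        return text
    cut = text[: limit - 1].rsplit(" ", 1)[0]
    return cut.rstrip(" ,.;:") + "…"


def build_meta(query: str, result_count: int, brand: str) -> dict[str, str]:
    """Generate a title and meta description from a query and its result count."""
    title = truncate(f"{query.capitalize()} | {brand}", TITLE_LIMIT)
    description = truncate(
        f"Discover our selection of {result_count} products for "
        f'"{query}" at {brand}.',
        DESCRIPTION_LIMIT,
    )
    return {"title": title, "description": description}


meta = build_meta("corner sofa bed", 42, "Example Shop")
print(meta["title"])        # Corner sofa bed | Example Shop
print(meta["description"])
```

Generated tags are worth reviewing by hand on the first batch of pages before you rely on the template at scale.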

For link anchor text, keep it simple and reuse the queries entered in the internal search engine.

Tracking indexing

Before production launch, results must be tested in preprod. Don’t forget to launch a crawl of your preprod and then of your production website. By using the Crawl over Crawl feature, it is possible to see the benefits in terms of internal linking and Inrank.

Once everything is validated in preprod, you can switch to production. Use the same method: launch a crawl before and a crawl just after going online.

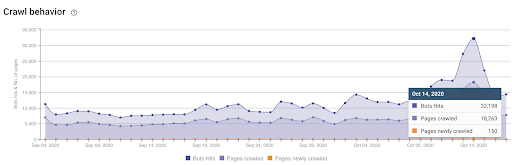

Monitoring the crawl is important to see if Oncrawl is discovering the new searchdexing pages.

Remember to update or create a new segmentation to add the searchdexing pages.

On the crawl analysis side, there are several things to monitor:

- Did Oncrawl discover these pages?

- How deep are these pages? What is the Inrank of these new pages?

- Which pages point to these pages? Are there 4xx or 3xx errors?
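
If you export crawl data, these checks can be scripted. The sketch below is tool-agnostic and does not reflect Oncrawl's export format: the `CrawledPage` fields and the sample rows are hypothetical, so map them to whatever columns your crawler provides.

```python
from dataclasses import dataclass


@dataclass
class CrawledPage:
    url: str
    status: int  # HTTP status code returned to the crawler
    depth: int   # number of clicks from the home page


def review_crawl(expected_urls: set[str], crawled: list[CrawledPage]) -> dict:
    """Report which searchdexing pages are missing, in error, or too deep."""
    by_url = {page.url: page for page in crawled}
    found = [by_url[url] for url in sorted(expected_urls) if url in by_url]
    return {
        "missing": sorted(expected_urls - set(by_url)),
        "not_200": {page.url: page.status for page in found if page.status != 200},
        "max_depth": max((page.depth for page in found), default=None),
    }


# Hypothetical crawl export
crawled = [
    CrawledPage("/sd/canape-d-angle", 200, 2),
    CrawledPage("/sd/lampe-de-chevet", 301, 3),
    CrawledPage("/category/sofas", 200, 1),
]
expected = {"/sd/canape-d-angle", "/sd/lampe-de-chevet", "/sd/table-basse"}
print(review_crawl(expected, crawled))
```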

On the log analysis side, here are the key points:

- Analyze Googlebot hits on this page/segment template

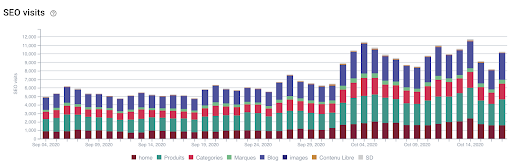

- Analyze SEO visits in logs

- Monitor the Freshrank (number of days between a Googlebot hit and an SEO visit)

- Check the crawl frequency for these pages
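
If you want to check crawl frequency directly in raw server logs, a sketch like the following can help. It assumes logs in the Apache/Nginx "combined" format and the /sd/ prefix discussed earlier, and the sample lines are invented. It only looks at the user-agent string, which can be spoofed, so it counts hits that claim to be Googlebot rather than verified ones.

```python
import re
from collections import Counter
from datetime import datetime

LOG_PATTERN = re.compile(
    r'\S+ \S+ \S+ \[(?P<time>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_per_day(lines, prefix="/sd/"):
    """Count daily hits on searchdexing URLs by agents claiming to be Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if not match:
            continue  # line is not in the expected format
        if "Googlebot" not in match["agent"] or not match["path"].startswith(prefix):
            continue
        day = datetime.strptime(match["time"], "%d/%b/%Y:%H:%M:%S %z").date()
        hits[day.isoformat()] += 1
    return hits


# Invented sample lines
sample = [
    '66.249.66.1 - - [12/Mar/2024:06:25:14 +0000] "GET /sd/canape-d-angle HTTP/1.1" '
    '200 5123 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [12/Mar/2024:08:02:41 +0000] "GET /sd/canape-d-angle HTTP/1.1" '
    '200 5123 "https://www.google.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
print(googlebot_hits_per_day(sample))
```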

Googlebot monitoring (Log files)

Monitoring of SEO visits (Log files)

Of course, don’t forget to track indexing in Google Search Console, traffic in your analytics suite, and keyword rankings with a tool like SEMRush.

If you find that Google is visiting these pages correctly and that they are generating their first SEO visits and first conversions, then you can consider a wider deployment, automating as much of the process as possible, to make your internal search engine a fully active part of your SEO strategy.

In summary

Searchdexing should be considered for your website under certain conditions.

Indexing and ranking an internal results page can be useful if it is related to a long-tail query that generates business for your website. Indexing this page will allow it to appear in the SERPs for the query that matches the optimized content and metadata you set up specifically for this page.

This is not about massively indexing all of your search results pages: analysis of the search intents, the results from your internal search engine, a lexical study of these results, as well as the analysis of your internal linking structure will be key elements to help you choose the pages to be indexed.

In order to amplify traffic and gain additional conversions brought by the indexing of internal search pages, it will then be necessary to optimize their content.