Limited stock? News? Time-sensitive information in a saturated or competitive niche? Events? There are many cases when getting your pages ranked quickly is key. Alice Roussel, Customer Success Manager at Oncrawl, suggests looking at the amount of crawl waste in order to find out whether there are SEO actions you can take to improve how quickly your priority pages get crawled.

How to measure crawl waste with Oncrawl

Alice works with our users to identify the types of pages on a given website that are crawled but shouldn't be, or that aren't priority pages. To do this, you'll need a crawl that includes enough log data to show how bots actually visit the site.

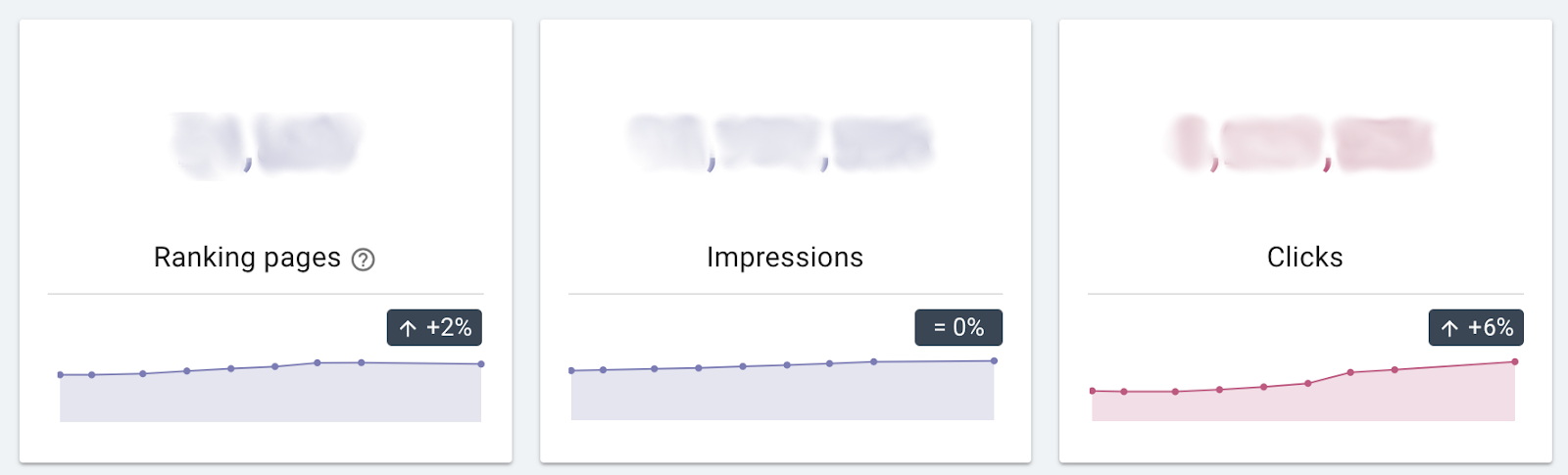

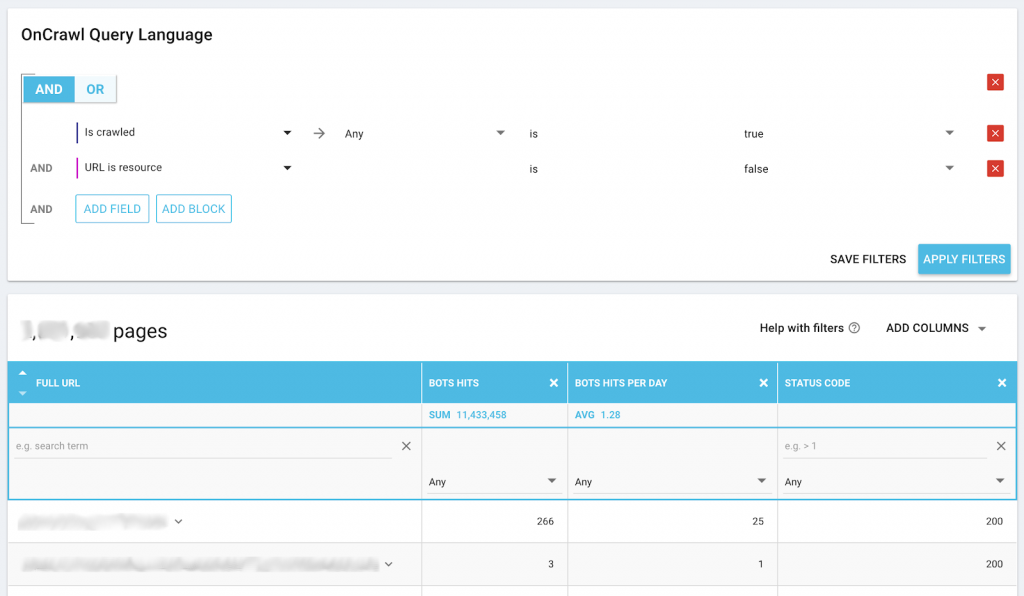

One place to start is the list of pages that have received at least one Googlebot hit in the past 45 days.
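If you want to reproduce this list outside Oncrawl, for example to sanity-check it against raw server logs, here is a minimal sketch in Python. It assumes logs in the Apache/Nginx "combined" format with English month abbreviations, and it identifies Googlebot by user-agent string only. Google recommends confirming genuine Googlebot traffic with a reverse DNS lookup, because the user agent can be spoofed. The function name `pages_hit_by_googlebot` and the sample log lines are hypothetical.

```python
import re
from datetime import datetime, timedelta, timezone

# Assumption: Apache/Nginx "combined" log format, e.g.
# IP - - [10/Oct/2023:13:55:36 +0000] "GET /path HTTP/1.1" 200 512 "referer" "user agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'\d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def pages_hit_by_googlebot(lines, now, window_days=45):
    """Count hits per path from user agents claiming to be Googlebot
    within the last `window_days` days. `now` must be timezone-aware."""
    cutoff = now - timedelta(days=window_days)
    hits = {}
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue  # unparseable line or not a Googlebot request
        # %b expects English month abbreviations (C locale)
        timestamp = datetime.strptime(match.group("time"), "%d/%b/%Y:%H:%M:%S %z")
        if timestamp >= cutoff:
            path = match.group("path")
            hits[path] = hits.get(path, 0) + 1
    return hits


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [10/Oct/2023:13:55:36 +0000] "GET /shoes HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [01/Jul/2023:08:00:00 +0000] "GET /old-sale HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [12/Oct/2023:09:30:00 +0000] "GET /cart HTTP/1.1" 200 128 "-" "Mozilla/5.0"',
]
print(pages_hit_by_googlebot(sample, now=datetime(2023, 10, 20, tzinfo=timezone.utc)))
# {'/shoes': 1}
```

In the sample, the `/old-sale` hit is older than 45 days and is dropped, and the `/cart` request is not from Googlebot.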

Oncrawl Trick: you don't have to take our word for the relationship between crawl behavior and revenue. You can use data ingestion to add revenue-related data from another source, such as a list of pages with leftover stock after sales deadlines, and cross it with your crawl and log data. This helps confirm hypotheses such as:

- Were product pages with leftover stock after deadlines crawled less often?

- Were they first crawled, on average, closer to the deadline than pages that sold out?
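Once the external data is ingested, checking these hypotheses comes down to comparing averages between the two groups of pages. The sketch below assumes each page has already been joined to three hypothetical fields (`leftover_stock`, `bot_hits`, `first_crawl_days_before_deadline`); these names and the sample values are made up for illustration and are not an Oncrawl export schema.

```python
from statistics import mean


def compare_stock_groups(pages):
    """Average bot hits and first-crawl lead time for pages with leftover
    stock versus pages that sold out. Empty groups map to None."""
    result = {}
    for label, leftover in (("leftover", True), ("sold_out", False)):
        group = [p for p in pages if bool(p["leftover_stock"]) == leftover]
        if not group:
            result[label] = None
            continue
        result[label] = {
            # Lower means the pages were crawled less often
            "avg_bot_hits": mean(p["bot_hits"] for p in group),
            # Lower means the first crawl happened closer to the deadline
            "avg_first_crawl_days_before_deadline": mean(
                p["first_crawl_days_before_deadline"] for p in group
            ),
        }
    return result


# Hypothetical joined records (illustrative values only)
pages = [
    {"url": "/p/1", "leftover_stock": True, "bot_hits": 2, "first_crawl_days_before_deadline": 3},
    {"url": "/p/2", "leftover_stock": True, "bot_hits": 4, "first_crawl_days_before_deadline": 5},
    {"url": "/p/3", "leftover_stock": False, "bot_hits": 10, "first_crawl_days_before_deadline": 20},
]
print(compare_stock_groups(pages))
```

If both averages are lower for the leftover-stock group, the data is consistent with the idea that those pages were crawled less often and later. With real data, check group sizes before drawing conclusions.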

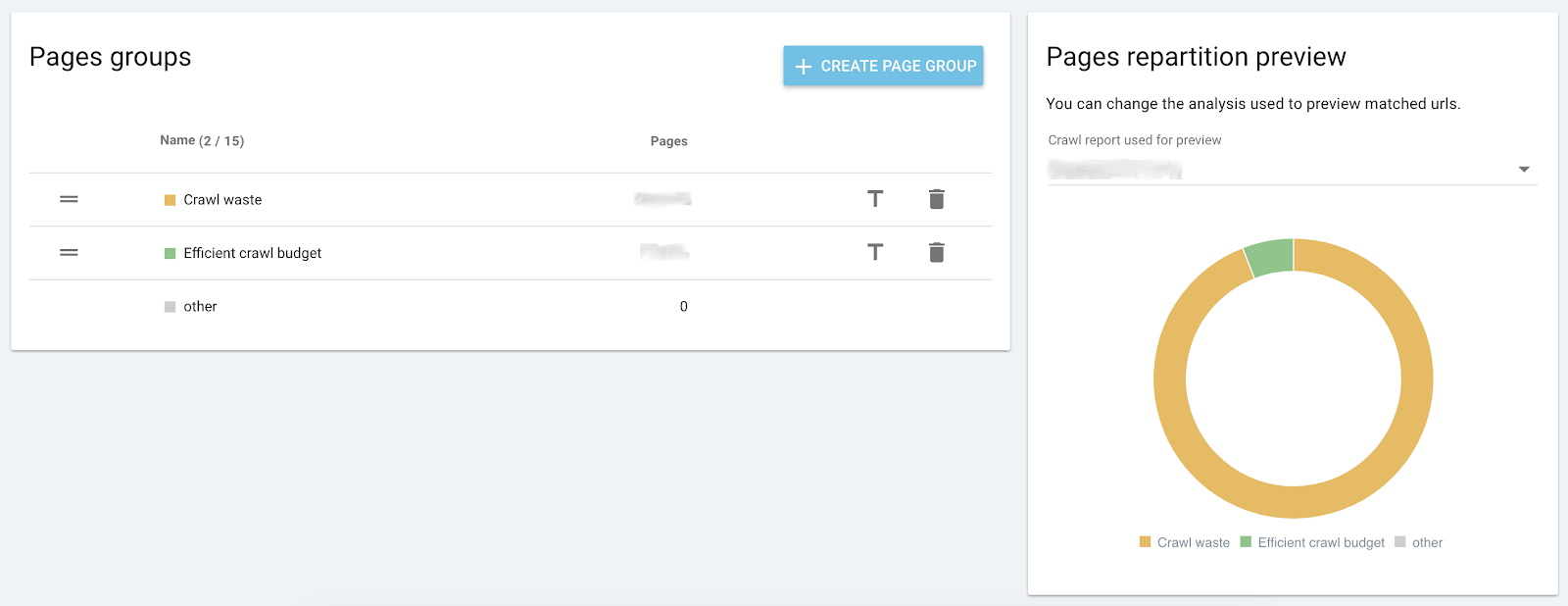

Based on the page types identified as sources of crawl waste, Alice created a custom segmentation that splits the site's pages into two categories:

- Efficient crawl budget: pages that should be crawled

- Crawl waste: pages that shouldn’t be crawled, or should be crawled much less frequently
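In Oncrawl, this segmentation is built in the segmentation editor. Its rule logic can be sketched in Python as a first-match classifier; the URL patterns below are hypothetical stand-ins for whatever page types you identified as crawl waste on your own site.

```python
import re

# Hypothetical rules: each pattern marks a page type treated as crawl waste.
WASTE_PATTERNS = [
    re.compile(r"\?"),                       # any query string (filters, sorting)
    re.compile(r"^/search(/|$)"),            # internal site search results
    re.compile(r"^/(terms|legal|company)"),  # static, low-value pages
]


def crawl_budget_group(path: str) -> str:
    """Place a URL path in exactly one of the two groups."""
    if any(pattern.search(path) for pattern in WASTE_PATTERNS):
        return "Crawl waste"
    return "Efficient crawl budget"


for path in ["/shoes/red-sneaker", "/shoes?color=red", "/search/boots", "/terms-and-conditions"]:
    print(path, "->", crawl_budget_group(path))
```

Because the two groups are mutually exclusive, every crawled URL lands in exactly one of them, which is what makes a percentage breakdown of crawl budget meaningful for this segmentation.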

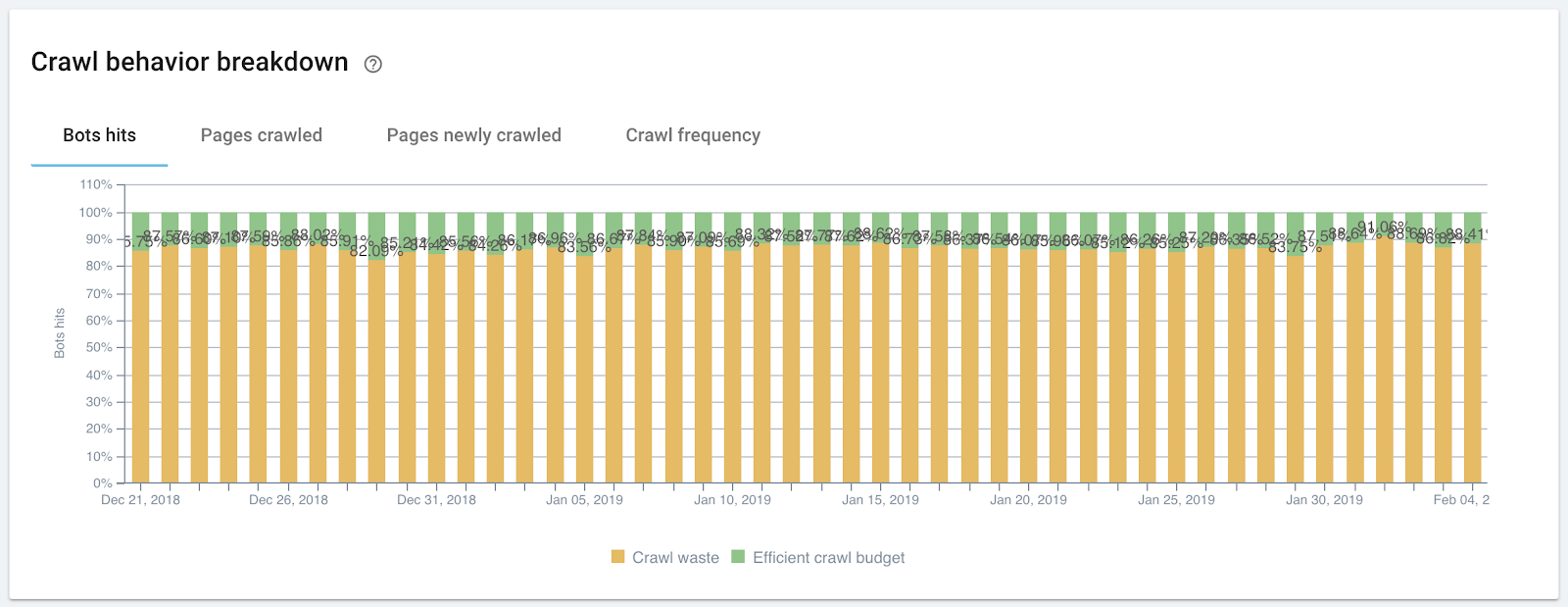

To see where your crawl budget is being spent, use the Crawl behavior breakdown chart on the Crawl behavior dashboard, under Crawl Monitoring.

For this site, approximately 90% of the crawl budget goes to pages we don't want to prioritize. The good news is that this leaves up to 90% of the budget available to redistribute.
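A breakdown like this can be approximated as the share of bot hits each group receives over the analysis period. The sketch below assumes each logged hit has already been labeled with one of the two groups; the function name is hypothetical, and the 900/100 split is toy data chosen to reproduce a 90% share, not this site's actual figures.

```python
from collections import Counter


def crawl_budget_share(hit_groups):
    """Percentage of bot hits per group, given one group label per hit.
    Assumes groups do not overlap, so shares sum to 100."""
    counts = Counter(hit_groups)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {group: 100 * n / total for group, n in counts.items()}


# Toy data: 900 hits on crawl-waste pages, 100 on priority pages
hits = ["Crawl waste"] * 900 + ["Efficient crawl budget"] * 100
print(crawl_budget_share(hits))
# {'Crawl waste': 90.0, 'Efficient crawl budget': 10.0}
```

The crawl-waste share is an upper bound on what can be reclaimed, since some of those pages should still be crawled occasionally, just much less often.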

We can also take a closer look at which types of pages cause crawl waste by using a second custom segmentation, with one group for each type of page that is crawled but doesn't need to be. For example, this might include the following types of pages:

- Duplicate pages with malformed URLs generated by your CMS

- Pages created automatically but not used in your site

- Pages with parameters or query strings, including site search results

- Static, low-value pages (company pages, terms and conditions…)
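Unlike the two-category segmentation, this one works as a set of independent rules, one per waste type, where a single URL can match several rules at once. Here is a hypothetical Python sketch with illustrative patterns for each type in the list above; the patterns and names are assumptions to adapt to your own CMS.

```python
import re

# Hypothetical patterns, one per crawl-waste type; adapt to your own CMS.
WASTE_TYPES = {
    "Malformed duplicates": re.compile(r"//|/index\.(php|html)$"),
    "Unused auto-generated pages": re.compile(r"^/(tag|print)/"),
    "Parameters and site search": re.compile(r"\?|^/search(/|$)"),
    "Static low-value pages": re.compile(r"^/(company|terms|legal)"),
}


def waste_types(path: str) -> list[str]:
    """Return every waste type a URL path matches (possibly several, possibly none)."""
    return [name for name, pattern in WASTE_TYPES.items() if pattern.search(path)]


print(waste_types("/tag/red?page=2"))
# ['Unused auto-generated pages', 'Parameters and site search']
```

A page that matches several types, like the one in the example, is counted in each of those groups.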

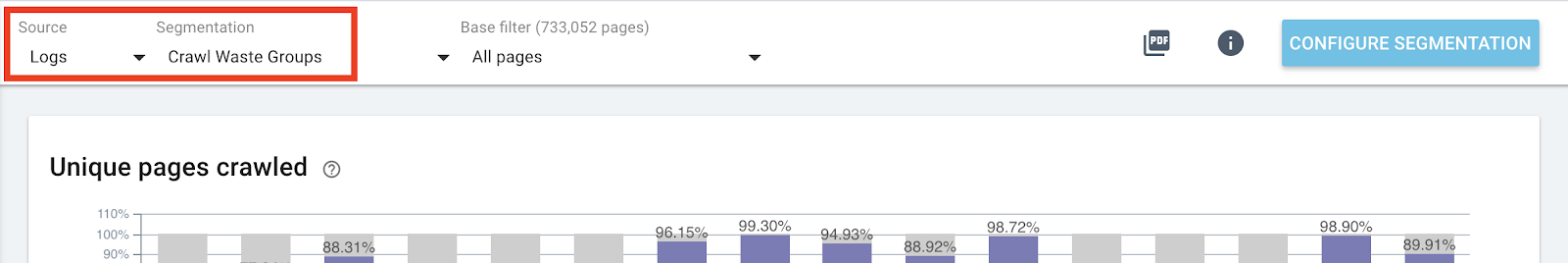

To see how crawl budget is distributed across this segmentation, go to the Pages known by bot > Bots behavior dashboard in the SEO Impact report. Make sure you're viewing this dashboard with "Logs" as the Source and that you've selected the crawl waste type segmentation you created.

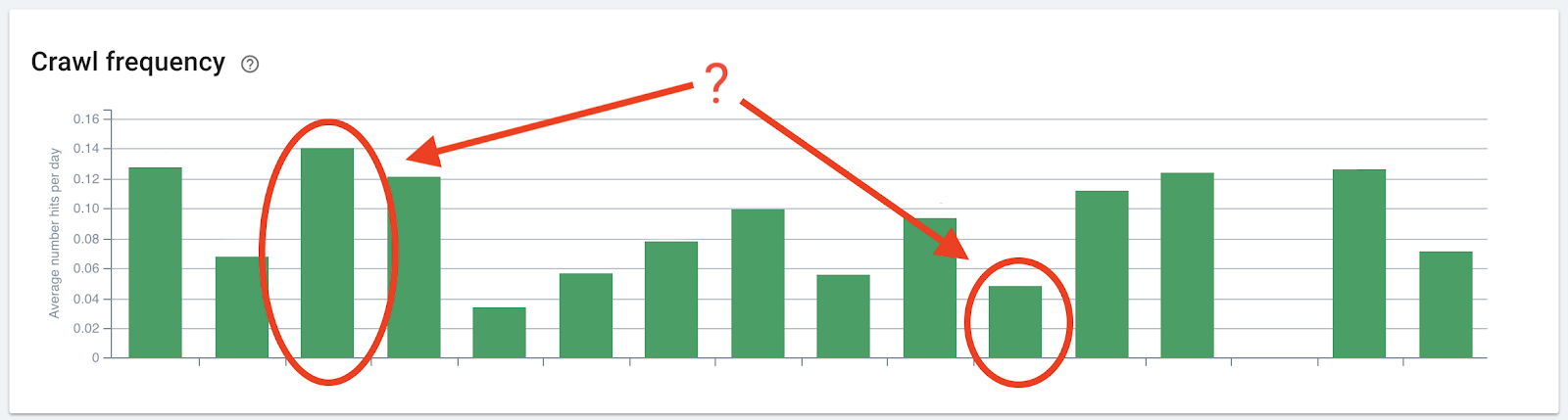

The charts "Unique pages crawled" and "Crawl frequency" show how bots interact with the pages in each group. For example, you might start with wasteful groups that have high crawl frequencies, or with efficient groups (if you included them in your segmentation) that have low crawl frequencies.

Oncrawl Trick: unlike in most segmentations, the groups here may overlap, so you may see a large number of conflicts, with pages counted in several groups. That's okay: we're using this segmentation to see how pages with certain characteristics are crawled, not how each group performs as part of the whole site. Because overlapping groups count the same pages more than once, charts that show a group's percentage of site-wide activity should be disregarded for this segmentation.
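To see why site-wide percentages mislead here, consider how per-group figures behave when groups overlap. The sketch below approximates "Unique pages crawled" as distinct URLs hit per group and "Crawl frequency" as hits per unique page over the log window. These definitions are assumptions for illustration and may not match how Oncrawl computes its charts; the two-group matcher and the four hits are toy data.

```python
from collections import defaultdict


def groups_for(path):
    """Hypothetical overlapping groups: a path can be in both."""
    groups = []
    if "?" in path:
        groups.append("Parameters")
    if path.startswith("/tag/"):
        groups.append("Tag pages")
    return groups


def group_stats(hits):
    """Per group: (unique pages crawled, hits per unique page)."""
    pages = defaultdict(set)
    counts = defaultdict(int)
    for path in hits:
        for group in groups_for(path):
            pages[group].add(path)
            counts[group] += 1
    return {g: (len(pages[g]), counts[g] / len(pages[g])) for g in pages}


def share_of_all_hits(hits):
    """Each group's hits as a percentage of all hits; overlaps are double-counted."""
    counts = defaultdict(int)
    for path in hits:
        for group in groups_for(path):
            counts[group] += 1
    return {g: 100 * c / len(hits) for g, c in counts.items()}


hits = ["/tag/a?p=2", "/tag/a?p=2", "/tag/b", "/sale?id=1"]
print(group_stats(hits))
print(share_of_all_hits(hits))
# The shares sum to 150% because "/tag/a?p=2" counts in both groups.
```

Per-group figures such as unique pages and hits per page stay meaningful with overlapping groups, while shares of the site-wide total do not.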

Results

Once you know where your crawl budget is going, you can redirect it to improve crawl rates on your revenue-generating pages. Knowing which types of URLs receive the most crawl attention lets you focus first on the groups that consume the most budget, which maximizes the effect of your actions.

You can redistribute crawl budget with several strategies, depending on the type of page and your objectives. Here are a few examples:

- Modify internal linking

- Add noindex directives

- Redirect pages

- Use canonical URLs
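Some of these strategies translate directly into page markup. As a hypothetical sketch, the Python below derives a canonical URL by dropping query parameters that don't change page content, then builds the corresponding `<link rel="canonical">` tag and an optional `<meta name="robots" content="noindex">` tag. The parameter list and function names are assumptions to adapt per site.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical: parameters that never change page content on this site.
NON_CANONICAL_PARAMS = {"sort", "sessionid", "utm_source", "utm_medium", "utm_campaign"}


def canonical_url(url: str) -> str:
    """Drop non-canonical query parameters (and any fragment), keeping the rest in order."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in NON_CANONICAL_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def head_tags(url: str, noindex: bool = False) -> list[str]:
    """<head> tags pointing bots at the canonical URL, optionally with noindex."""
    tags = [f'<link rel="canonical" href="{escape(canonical_url(url))}">']
    if noindex:
        tags.append('<meta name="robots" content="noindex">')
    return tags


print(canonical_url("https://shop.example/shoes?sort=price&color=red"))
# https://shop.example/shoes?color=red
print(head_tags("https://shop.example/shoes?sort=price", noindex=True))
```

Keep in mind that Google treats a canonical URL as a hint rather than a directive, and it can only see a noindex tag on pages it crawls.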

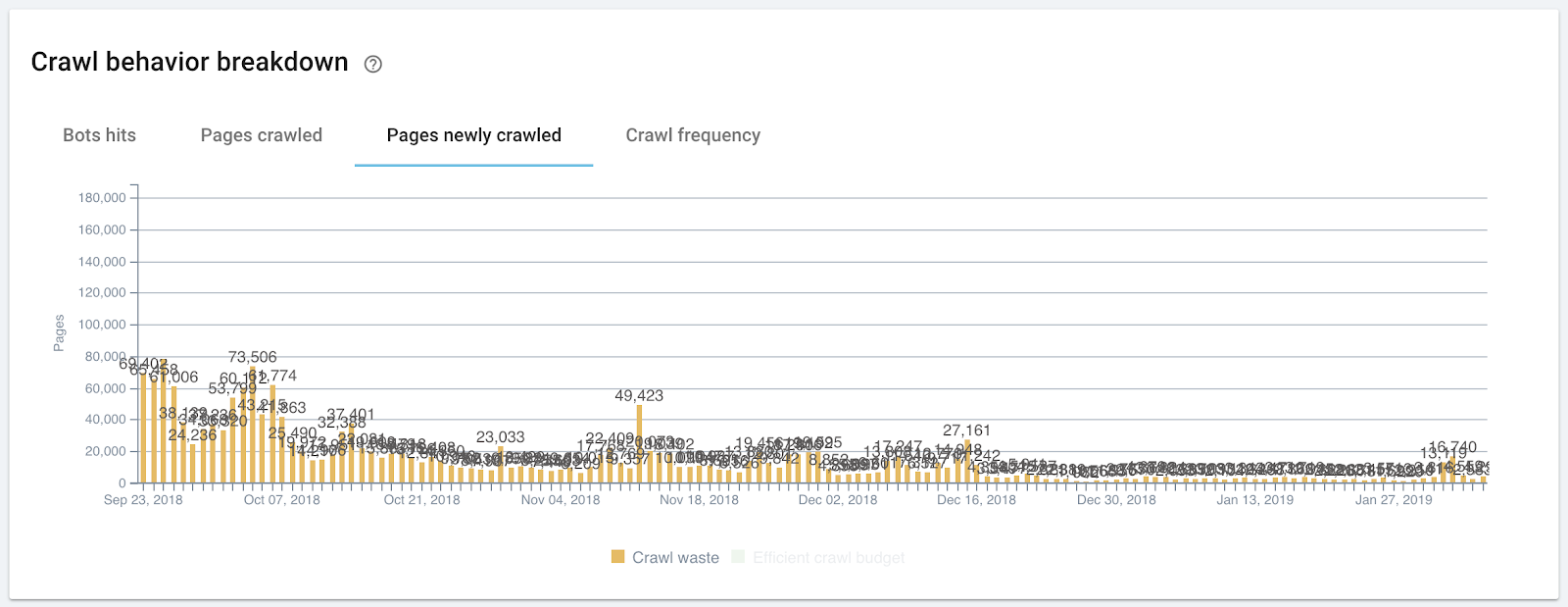

As bots crawl wasteful pages less, that crawl activity is redistributed to other pages on the site. This gives your revenue-generating pages more bandwidth and attention, which can help them get indexed and ranked faster.