In this article I will share three Python scripts that you can use online to analyze the SERPs, from extracting the most-used nouns, adjectives and entities to scraping the most relevant headings for your articles.

I have included the scripts in Google Colab (a hosted notebook service that lets you write and run Python in your browser), so you can use them directly as tools or read the full code to see how they work. It’s up to you!

Ok, let’s start with the first script.

Script 1: Content ideas for your clusters taken directly from Google

With this Python script, you just enter your keyword and hit play: it scrapes the SERPs in a loop, following the related queries.

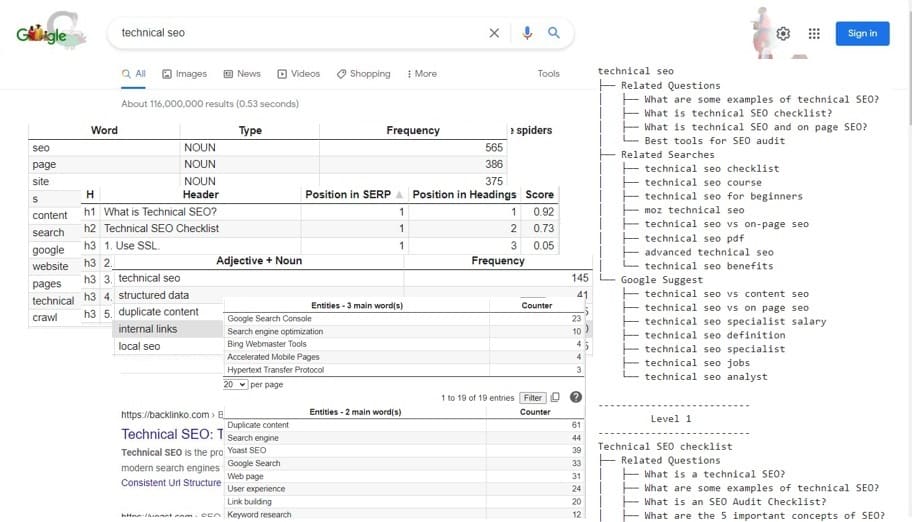

Let’s see, for example, what Google associates with the keyword ‘technical seo’:

As you can see in the image above, for a given keyword the script scrapes:

- People Also Ask

- Related searches

- Google Suggest

Once it has scraped these for the main keyword, it repeats the process in a loop for each related search to gather the same information.

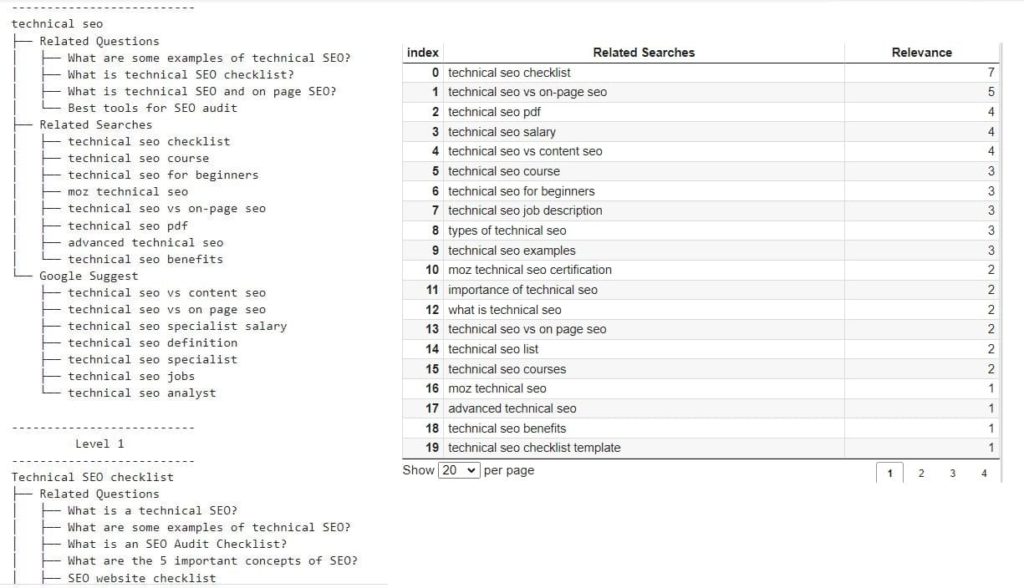
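The loop described above amounts to a breadth-first walk over queries. The sketch below illustrates the idea; it is not the Colab's actual code. `fetch_serp` is a hypothetical stand-in for the scraping step, `scrape_levels` and `loop_paa` are illustrative counterparts of the script's ScrapeLevels and LoopPAA parameters, and it assumes that one level means the seed keyword plus one hop of related searches.

```python
from collections import deque
from typing import Callable, Dict, List

# Hypothetical shape of one scraped SERP: feature name -> list of strings.
Serp = Dict[str, List[str]]


def expand_keyword(
    seed: str,
    fetch_serp: Callable[[str], Serp],
    scrape_levels: int = 1,
    loop_paa: bool = False,
) -> Dict[str, Serp]:
    """Scrape the seed keyword, then follow the queries it points to, level by level."""
    # Which SERP feature feeds the loop (mirrors the LoopPAA idea).
    follow_key = "people_also_ask" if loop_paa else "related_searches"
    results: Dict[str, Serp] = {}
    queue = deque([(seed, 0)])  # (query, level); level 0 is the seed keyword
    while queue:
        query, level = queue.popleft()
        if query in results:
            continue  # already scraped through another branch
        serp = fetch_serp(query)
        results[query] = serp
        if level < scrape_levels:
            for next_query in serp.get(follow_key, []):
                queue.append((next_query, level + 1))
    return results


# Usage with canned data instead of live scraping.
fake_serps = {
    "technical seo": {
        "related_searches": ["technical seo checklist", "what is technical seo"],
        "people_also_ask": ["What is technical SEO?"],
        "suggest": ["technical seo audit"],
    },
    "technical seo checklist": {"related_searches": ["seo audit"]},
}
found = expand_keyword("technical seo", lambda q: fake_serps.get(q, {}))
print(list(found))
# ['technical seo', 'technical seo checklist', 'what is technical seo']
```

Recording each scraped query in `results` also keeps the same query from being scraped twice when two branches lead to it.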

The best part is that you get related queries and questions that Google itself considers relevant to your keyword. In other words, you get a list of ideas for building a topic cluster and its internal linking, courtesy of Google.

Depending on the case, you can turn these ideas into separate articles or use them directly as headings in the main article.

Optional parameters for the script

As you will see in Colab, there are some parameters that you can change for the Google search:

- Keyword, language and country for the search.

- ScrapeLevels: set it to two if you want more results. Beyond that, the number of queries grows quickly and the output becomes hard to manage.

- LoopPAA: use True if you want to follow People Also Ask in the loop instead of related queries.
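To get a feel for why more levels become hard to manage, here is a rough estimate of how many SERPs a run touches. It assumes, for illustration only, that every results page yields about eight new queries to follow and that level 0 is the seed keyword; real pages vary, and duplicate queries reduce the total. The function name is hypothetical.

```python
def estimate_serp_requests(scrape_levels: int, branching: int = 8) -> int:
    """Rough count of SERPs to scrape under a fixed branching factor.

    Level 0 is the seed keyword; every page is assumed to yield `branching`
    new queries, so level k holds branching ** k queries. Duplicate queries
    across branches would make the real number smaller.
    """
    return sum(branching ** level for level in range(scrape_levels + 1))


for levels in (1, 2, 3):
    print(levels, estimate_serp_requests(levels))
# 1 9
# 2 73
# 3 585
```

The count grows geometrically, so each extra level multiplies both the scraping time and the size of the resulting list.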

In the following two scripts we will focus more on analyzing the content for your articles. You’re gonna love the next one.


Script 2: Scrape the results from Google to get semantic content for your articles

The days when it was enough to repeat a keyword over and over again are long gone. Nowadays Google rewards semantically rich content, and analyzing your main competitors with this Colab script will help you create it.

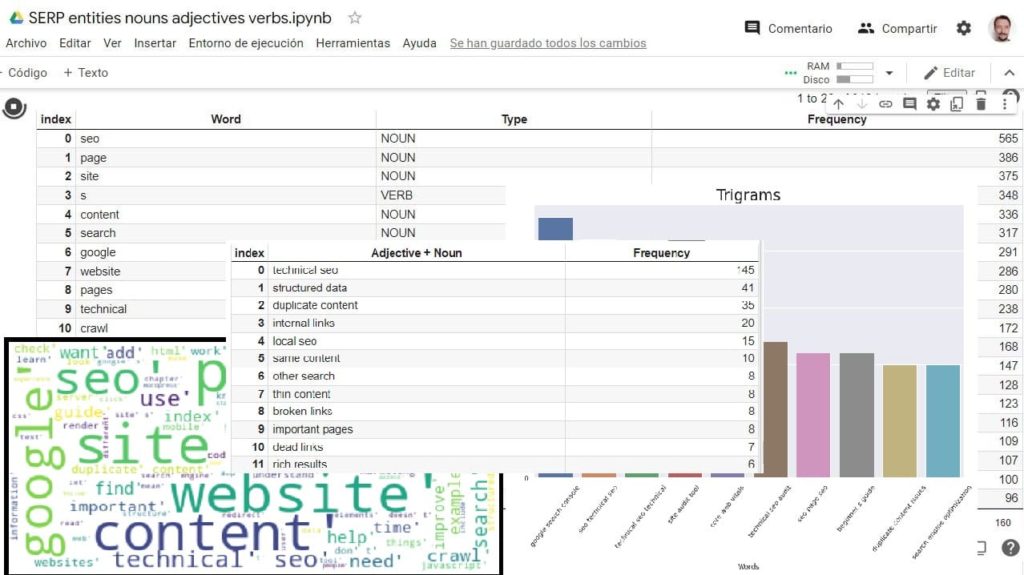

I created this script especially for content creators and copywriters. With it, you can extract the most frequently used words in the first 10 articles of the SERP:

- Nouns

- Verbs

- Adjectives

- Adjective + Noun

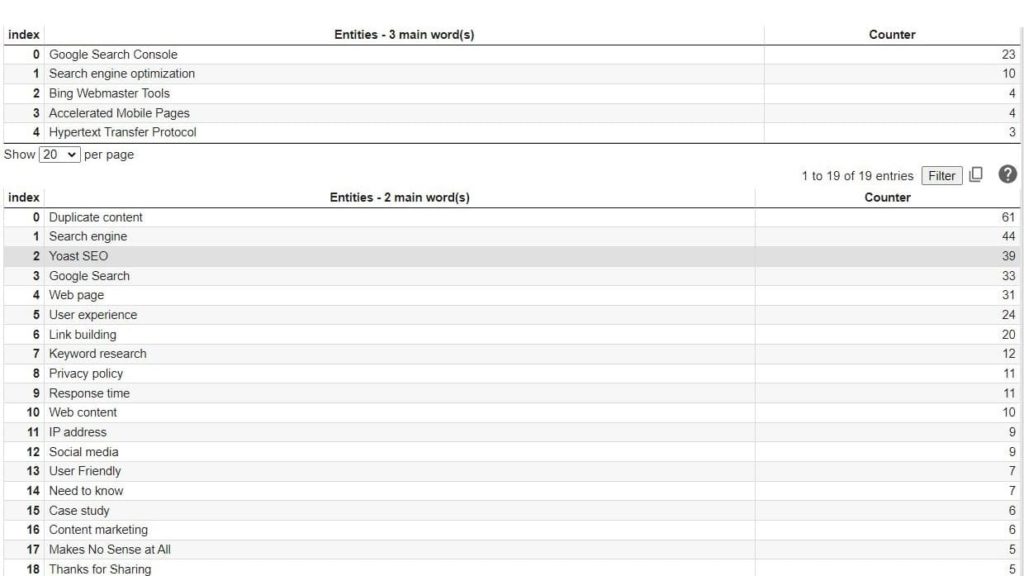
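Counting these word types is straightforward once each word carries a part-of-speech tag. The article doesn't say which NLP library the script uses, so this minimal sketch assumes the text of the articles has already been tokenized and tagged with Universal POS tags (with spaCy, for instance, each pair could come from `(token.text, token.pos_)`). `count_pos` is a hypothetical helper and the tagged sentences are hand-made.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple

# One document = list of (word, Universal POS tag) pairs, e.g. from a tagger.
TaggedDoc = List[Tuple[str, str]]


def count_pos(docs: Iterable[TaggedDoc]) -> Dict[str, Counter]:
    """Count nouns, verbs, adjectives and adjective+noun pairs across documents."""
    counts = {key: Counter() for key in ("NOUN", "VERB", "ADJ", "ADJ+NOUN")}
    for doc in docs:
        tokens = [(word.lower(), pos) for word, pos in doc]
        for word, pos in tokens:
            if pos in ("NOUN", "VERB", "ADJ"):
                counts[pos][word] += 1
        # An adjective immediately followed by a noun, e.g. "technical seo".
        for (w1, p1), (w2, p2) in zip(tokens, tokens[1:]):
            if p1 == "ADJ" and p2 == "NOUN":
                counts["ADJ+NOUN"][f"{w1} {w2}"] += 1
    return counts


docs = [
    [("Technical", "ADJ"), ("SEO", "NOUN"), ("improves", "VERB"), ("crawling", "NOUN")],
    [("A", "DET"), ("technical", "ADJ"), ("audit", "NOUN"), ("finds", "VERB"), ("errors", "NOUN")],
]
counts = count_pos(docs)
print(counts["ADJ"].most_common(1))         # [('technical', 2)]
print(counts["ADJ+NOUN"]["technical seo"])  # 1
```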

Do you want more data from the SERPs? Then the script will give you the most used entities (sourced from Wikipedia).

All this data is displayed in tables that you can filter or download as CSV, so you can work with the information in Excel or analyze it directly in Colab.
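If you want to reproduce the CSV export outside the notebook, Python's standard `csv` module is enough. A small sketch, assuming the frequencies are stored in a `Counter` (the function name is hypothetical):

```python
import csv
import io
from collections import Counter


def counter_to_csv(counter: Counter, header=("term", "count")) -> str:
    """Serialize a word-frequency Counter as CSV text, most frequent first."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(header)
    for term, count in counter.most_common():
        writer.writerow([term, count])
    return buffer.getvalue()


csv_text = counter_to_csv(Counter({"crawling": 3, "indexing": 7}))
print(csv_text)
```

Write `csv_text` to a file opened with `newline=""` to keep the line endings intact; in Colab, `google.colab.files.download` can then save that file to your computer. With pandas, `DataFrame.to_csv` does the same job.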

To use the script, just enter your keyword (e.g. keyword = "technical SEO") and hit play.

Optional parameters for the script

- Language and country: to simulate the corresponding search in Google.

- You can also choose whether to search for entities in the SERP articles (searchEntities = True or False).

Looking for entities takes around three extra minutes, but the results are worth it.

Script 3: Get the most relevant headers for your keyword

With this script you will be able to:

- Scrape the headers from Google’s top organic results.

- Score each heading by its semantic similarity to the keyword.

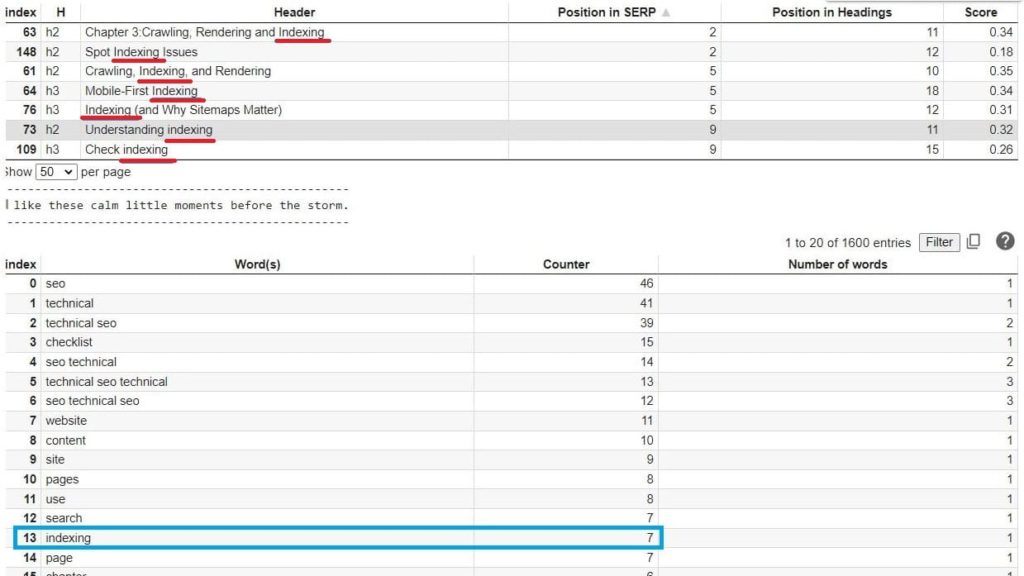

- Get one table with the scored headings and another with the most frequent words in the headers.

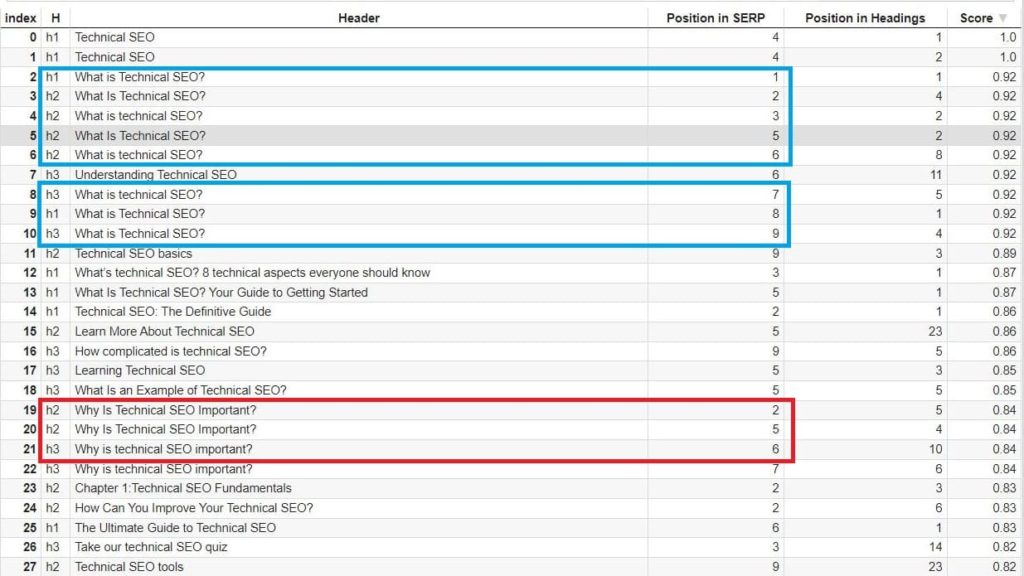
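The article doesn't specify which model computes the semantic score. To show the mechanics, this sketch swaps in plain bag-of-words cosine similarity: it only rewards shared words, not shared meaning, so treat it as a lexical proxy. A semantic version would apply the same cosine formula to embedding vectors from a language model. All function names are hypothetical.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def bag_of_words(text: str) -> Counter:
    """Lowercased word counts; a crude stand-in for a semantic embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def score_headings(keyword: str, headings: List[str]) -> List[Tuple[str, float]]:
    """Score each heading against the keyword and sort from most to least similar."""
    kw_vec = bag_of_words(keyword)
    scored = [(h, round(cosine_similarity(kw_vec, bag_of_words(h)), 3)) for h in headings]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


print(score_headings("technical SEO", [
    "How to fix crawl errors",
    "What is technical SEO?",
    "Why is technical SEO important?",
]))
# [('What is technical SEO?', 0.707), ('Why is technical SEO important?', 0.632), ('How to fix crawl errors', 0.0)]
```

Shorter headings that contain the keyword score higher here because extra words dilute the vector; an embedding-based score behaves differently, which is why this is only an illustration.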

The image below shows the headers for the keyword “technical SEO”, sorted by their similarity score to the keyword.

At first glance, it seems we should include “What is technical SEO?” as an h2, and we might also consider “Why is technical SEO important?” as another h2 or even an h3.

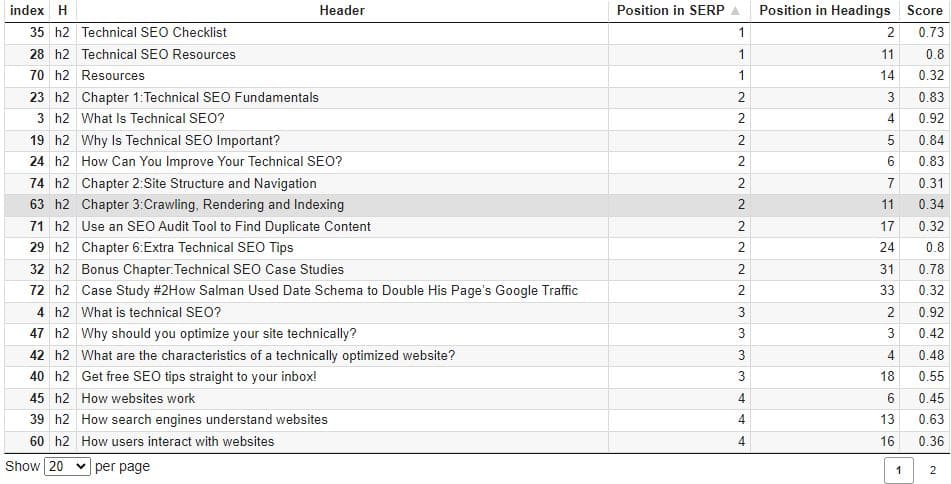

Now that we have a table with all the headings, we can play with the data in order to analyze our main competitors’ headers. To do so, you could:

- Filter by h2.

- Keep only headings with a score above 0.3.

- Sort the results by position in the SERP and position within the article.
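Those three steps map directly onto a filter and a two-key sort. A minimal sketch with a hypothetical `Heading` record (the field names and sample scores are assumptions, not the script's actual columns or output):

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Heading:
    text: str
    tag: str               # "h1", "h2", "h3", ...
    score: float           # similarity to the keyword
    serp_position: int     # rank of the page in the SERP (1 = top result)
    article_position: int  # order of the heading within its page


def filter_headings(headings: List[Heading], tag: str = "h2", min_score: float = 0.3) -> List[Heading]:
    """Keep one heading level above a score threshold, ordered by SERP rank, then page order."""
    selected = [h for h in headings if h.tag == tag and h.score > min_score]
    return sorted(selected, key=lambda h: (h.serp_position, h.article_position))


rows = [
    Heading("Why is technical SEO important?", "h2", 0.63, 2, 3),
    Heading("Contact us", "h2", 0.0, 1, 5),
    Heading("What is technical SEO?", "h2", 0.71, 1, 1),
    Heading("Technical SEO basics", "h3", 0.82, 1, 2),
]
for h in filter_headings(rows):
    print(h.serp_position, h.article_position, h.text)
# 1 1 What is technical SEO?
# 2 3 Why is technical SEO important?
```

If the table is a pandas DataFrame with equivalently named columns, the same query is `df[(df["tag"] == "h2") & (df["score"] > 0.3)].sort_values(["serp_position", "article_position"])`.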

It can also be interesting to look at the most-used words in the headings. For example, for “technical SEO” we see that “indexing” appears seven times.

Filtering by “indexing”, we can see that it may be worth pairing this word with “crawling”, “rendering” or even “sitemap”.
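Both steps (counting words across headings, then checking which words share a heading with “indexing”) fit in a few lines. This sketch uses a tiny hand-written stopword list and made-up headings, purely for illustration; the helper names are hypothetical.

```python
import re
from collections import Counter
from typing import Iterable, List

# Tiny illustrative stopword list; a real analysis would use a fuller one.
STOPWORDS = {"a", "an", "and", "the", "is", "of", "to", "for", "in", "how", "what", "why"}


def words(text: str) -> List[str]:
    return [w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS]


def heading_word_counts(headings: Iterable[str]) -> Counter:
    """How often each non-stopword appears across all headings."""
    counts = Counter()
    for heading in headings:
        counts.update(words(heading))
    return counts


def co_occurring(headings: Iterable[str], target: str) -> Counter:
    """Words that share a heading with `target` (the "filter by word" step)."""
    counts = Counter()
    for heading in headings:
        tokens = words(heading)
        if target in tokens:
            counts.update(t for t in tokens if t != target)
    return counts


headings = [
    "Crawling and indexing",
    "How to check indexing in Google",
    "Rendering and indexing JavaScript",
    "XML sitemap best practices",
]
print(heading_word_counts(headings)["indexing"])  # 3
print(sorted(co_occurring(headings, "indexing")))
# ['check', 'crawling', 'google', 'javascript', 'rendering']
```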

You can carry out your SERP analysis online in Colab, or download the data as a CSV file and analyze it in Excel or Google Sheets. The choice is yours.

Optional parameters for this script

- Language and country for the search in Google.

- OptionalHeaders = ['h1', 'h2', 'h3']. Feel free to remove h3 or add h4.
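The article doesn't show how the script parses each page, so here is one way to collect the headings with Python's built-in `html.parser`, assuming you already have the page HTML (fetching is not shown). The `optional_headers` argument is a hypothetical counterpart of OptionalHeaders: add "h4" or drop "h3" to change which levels are collected.

```python
from html.parser import HTMLParser
from typing import Iterable, List, Optional, Tuple


class HeadingParser(HTMLParser):
    """Collects (tag, text) pairs for the requested heading tags, in page order."""

    def __init__(self, tags: Iterable[str]):
        super().__init__()
        self.tags = {t.lower() for t in tags}
        self.headings: List[Tuple[str, str]] = []
        self._current: Optional[str] = None  # heading tag we are inside, if any
        self._buffer: List[str] = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "H2" and "h2" both match.
        if tag in self.tags and self._current is None:
            self._current = tag
            self._buffer = []

    def handle_endtag(self, tag):
        if tag == self._current:
            text = " ".join("".join(self._buffer).split())  # collapse whitespace
            if text:
                self.headings.append((tag, text))
            self._current = None

    def handle_data(self, data):
        if self._current is not None:
            self._buffer.append(data)


def extract_headings(html: str, optional_headers=("h1", "h2", "h3")) -> List[Tuple[str, str]]:
    parser = HeadingParser(optional_headers)
    parser.feed(html)
    parser.close()
    return parser.headings


page = "<h1>Technical SEO</h1><p>Intro</p><h2>What is <em>technical</em> SEO?</h2><h4>Extra</h4>"
print(extract_headings(page))
# [('h1', 'Technical SEO'), ('h2', 'What is technical SEO?')]
print(extract_headings(page, ("h1", "h2", "h3", "h4"))[-1])
# ('h4', 'Extra')
```

Text inside nested inline tags such as `<em>` is kept, so the heading reads as it does on the page.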

Conclusion

Google has already done the hard work of analyzing millions of articles and showing us the best results on the first page. We just have to take the time to analyze what’s there.

If you are not familiar with Python, I recommend you learn it. Coding in Python is like playing an instrument. First you have to learn the basics and later you can use your imagination to create whatever you want.

Combined with SERP analysis, Python is one of the best weapons we have as SEOs: together they give us superpowers.

All these Python tools can help you rank better in Google, but don’t forget to examine the SERPs manually; otherwise you may miss relevant data such as user intent, featured snippets and rich results.

If the above scripts are useful to you, consider sharing this article. I will try to publish new scripts/tools soon.

Greetings from Mallorca! 😎