At SMX Paris 2026, Maxime Guernion (Head of SEO at Havas Market) and I discussed AI crawling from the perspective of understanding what AI does with your content, noting that your data isn’t really yours anymore.

I’ve been seeing this trend emerge in log files over the past few months. For over 15 years, I’ve been analyzing server logs: billions of lines. Googlebot, Bingbot, and all their cousins. Every visit to a URL leaves a footprint on the server, revealing what search engines actually do on a site, far from what they claim to do.

Today, new players are showing up in the logs. GPTBot, ClaudeBot, PerplexityBot, OAI-SearchBot, and a dozen others. They’re crawling on a massive scale, and most SEO teams don’t know exactly what they’re doing on their sites.

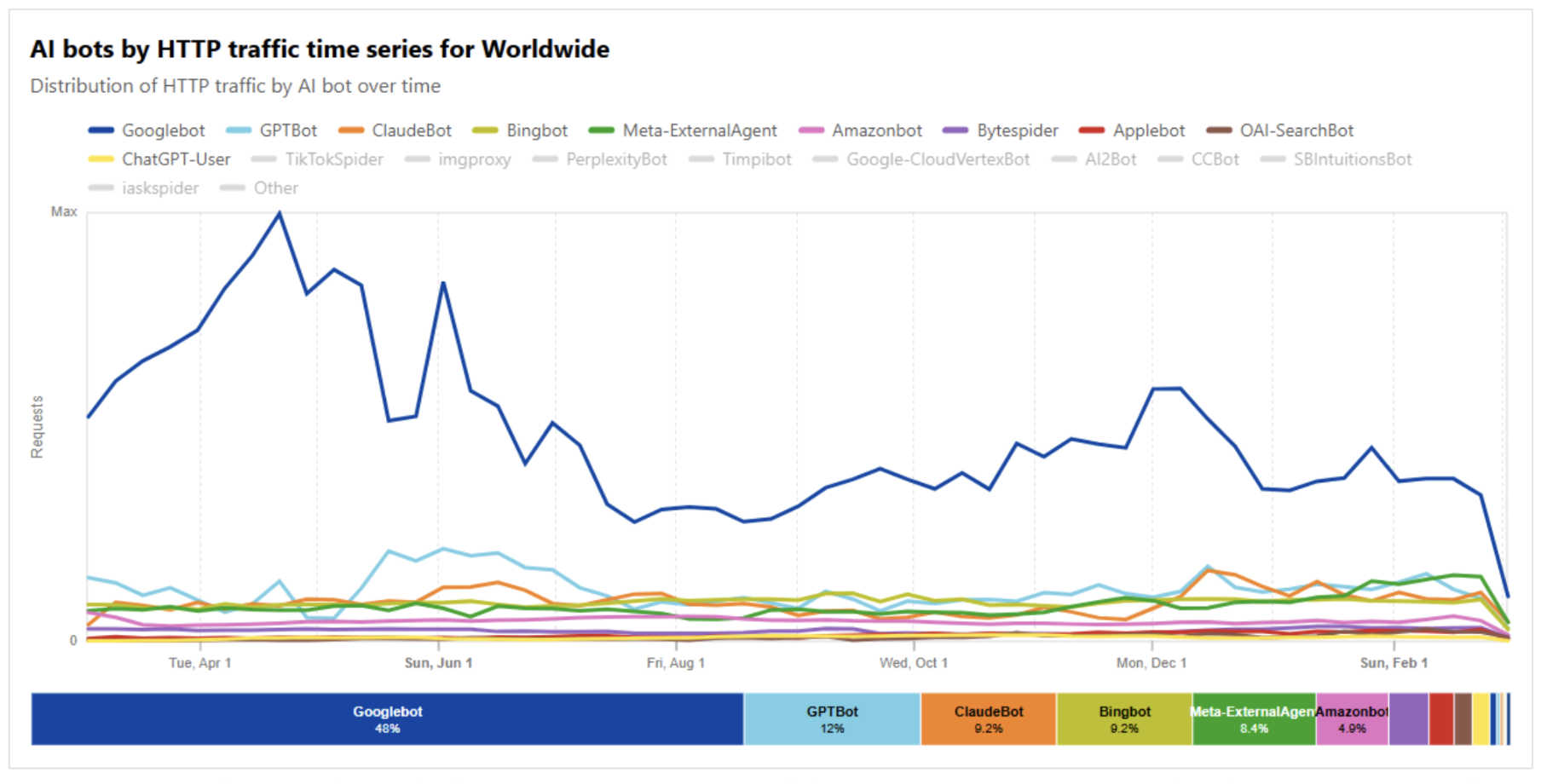

The numbers speak for themselves: according to Cloudflare Radar data over the last 12 months (March 2025 to March 2026), GPTBot and ClaudeBot account for 12% and 9.2% of global bot traffic, respectively. ClaudeBot is now on par with Bingbot (9.2%), and all three trail only Googlebot (48%). In the space of a year, AI bots have gone from being minor signals to major players in web crawling.

Figure 1 — Distribution of bot traffic over 12 months: Googlebot 48%, GPTBot 12%, ClaudeBot 9.2%, Bingbot 9.2%. Source: Cloudflare Radar, worldwide.

However, when I talk to SEO teams, even those with senior-level professionals, the conclusion is often the same: we know that AI crawlers are out there, but we don’t really know what they’re doing with the data. And above all, we don’t know what to make of it.

This article is an attempt to clarify things: I share what I’ve observed in the field, with my clients, in logs, in robots.txt files, and in the sometimes erratic behavior of these new crawlers. The goal isn’t to scare anyone or sell a fantasy: it’s to lay the groundwork for understanding what’s actually happening.

Mapping the landscape: Who crawls what, and why

When people talk about “AI bots,” they often lump them all together, but that’s a mistake. Not all AI bots do the same thing, and confusing them can lead to poor decisions.

Having analyzed the documentation from the major market players and reviewed the logs of dozens of websites over the past 12 months, I see four main types of LLM bots, each with a very different function.

Training bots

Their mission: to crawl web content to improve their models. GPTBot (OpenAI) and ClaudeBot (Anthropic) are the most well-known.

They crawl on a large scale, often without any apparent logic for prioritizing content based on your site’s structure. OpenAI makes this clear in its documentation:

“GPTBot is used to crawl content that may be used in training our generative AI foundation models.”

This is a harvest crawl, not an indexing crawl.

Search bots

These bots are more similar to what Googlebot does: they crawl to index and identify potential sources of answers for AI search engines.

OAI-SearchBot (OpenAI), Claude-SearchBot (Anthropic), and PerplexityBot are among them. This is a fundamental distinction: blocking OAI-SearchBot in your robots.txt file could potentially cause your site to disappear from ChatGPT Search results. Blocking GPTBot means refusing to be used for training. These are not the same strategic choices.

Moreover, Claude-SearchBot is fairly new, and many media sites that restrict these types of bots do not yet block it. It’s important to stay vigilant in order to detect these new agents and decide whether to restrict them (or not) early on.
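To make the distinction concrete, here is a minimal sketch in Python using the standard library’s `urllib.robotparser`. The robots.txt content and the `example.com` URL are hypothetical; the point is only to show that refusing training crawls and staying open to AI search crawls are two separate rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: refuse training crawls, keep AI search crawls open.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: Claude-SearchBot
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(bot: str, url: str = "https://example.com/article") -> bool:
    """Return True if the rules above let `bot` fetch `url`."""
    return parser.can_fetch(bot, url)


for bot in ("GPTBot", "ClaudeBot", "OAI-SearchBot", "Claude-SearchBot"):
    print(bot, "allowed" if allowed(bot) else "blocked")
```

This only tells you what your file asks for. Whether a given bot honors it is a separate question that only logs can answer.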


Fetch bots (on-demand)

ChatGPT-User, Claude-User, Perplexity-User: these bots retrieve data in real time, at a user’s request. When someone asks ChatGPT a question and the model needs up-to-date information, it sends ChatGPT-User to fetch the page.

Important note: as OpenAI points out,

“Because these actions are initiated by a user, robots.txt rules may not apply.”

In other words, these bots don’t necessarily follow your robots.txt directives.

Agentic bots

This is the newest and least-documented category. These bots behave like human users: they click, scroll, submit forms, and navigate interfaces.

We’re seeing them more and more in logs, and they raise new challenges in terms of detection and control. This is an area to watch very closely.

Today, when I conduct an audit, I no longer view “AI bot traffic” as a monolithic block. I segment it by type because the answer to the question “Should we block AI bots?” is never simply yes or no. It’s: which ones, to do what, and with what impact.
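As an illustration of that segmentation, the sketch below maps the bot names mentioned in this article to their type and counts hits per type. The mapping is not exhaustive, the sample user-agent strings are simplified, and agentic bots are left out because they rarely announce themselves with a stable token.

```python
from collections import Counter

# Bot tokens taken from the categories described above (illustrative, not exhaustive).
BOT_TYPES = {
    "GPTBot": "training",
    "ClaudeBot": "training",
    "OAI-SearchBot": "search",
    "Claude-SearchBot": "search",
    "PerplexityBot": "search",
    "ChatGPT-User": "fetch",
    "Claude-User": "fetch",
    "Perplexity-User": "fetch",
}


def classify(user_agent: str) -> str:
    """Return the bot type for a user-agent string, or 'other' if no token matches."""
    ua = user_agent.lower()
    for token, bot_type in BOT_TYPES.items():
        if token.lower() in ua:
            return bot_type
    return "other"


def segment(user_agents: list[str]) -> Counter:
    """Count hits per bot type instead of treating AI traffic as one block."""
    return Counter(classify(ua) for ua in user_agents)


# Simplified, hypothetical user-agent strings as they might appear in a log file.
sample = [
    "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)",
    "Mozilla/5.0 (compatible; ChatGPT-User/1.0; +https://openai.com/bot)",
    "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
]
print(segment(sample))
```

User-agent strings can be spoofed, so a serious audit would also verify the source IP ranges published by each vendor.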

The myth of control: robots.txt, firewalls, and reality

Many SEO teams think they’ve solved the problem by adding a few lines to their robots.txt file. If only it were that simple.

The robots.txt file only tells part of the story

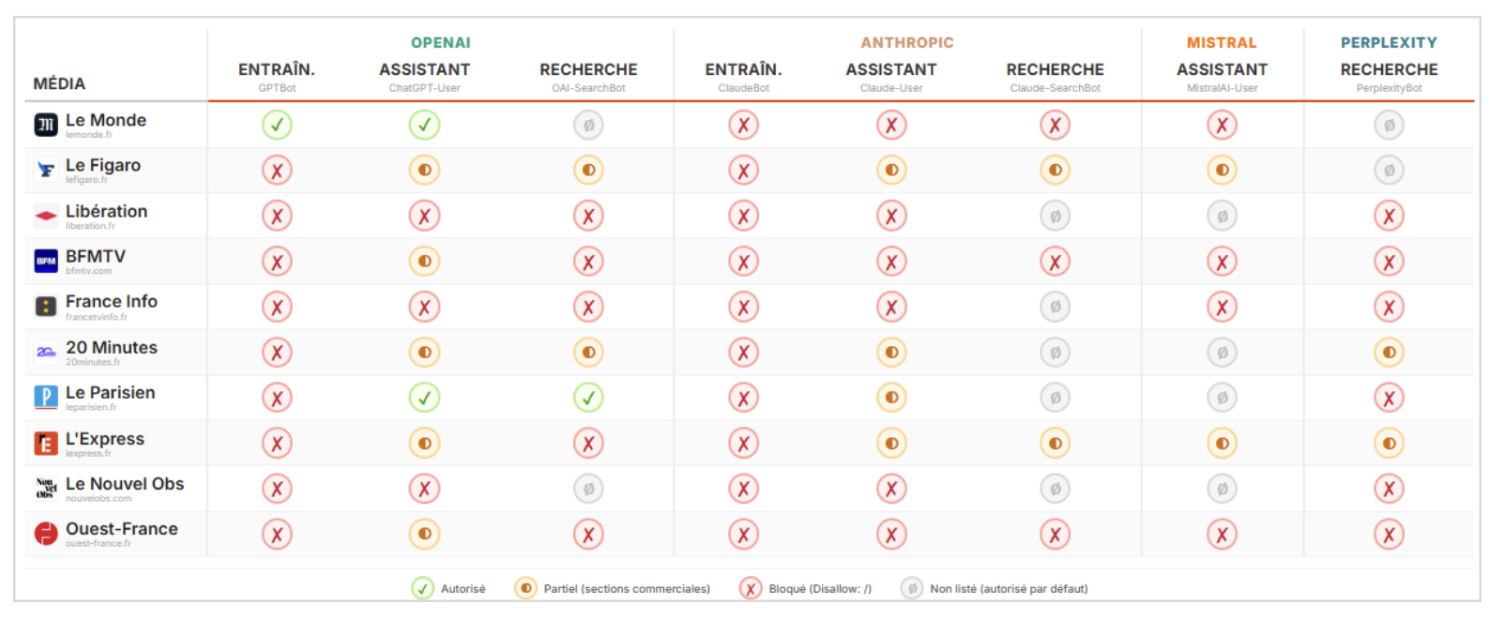

I analyzed the robots.txt files of a selection of French media and e-commerce sites. The conclusion is clear: there is no consensus.

Some block everything, others allow everything (such is the case for Le Monde as part of a partnership), and many haven’t implemented anything at all. Furthermore, those that do block bots don’t all block the same ones.

Figure 2 — Matrix showing the blocking policies of major AI bots on leading French media sites. Le Monde allows OpenAI as part of a partnership; most others block all bots or leave them unchecked.

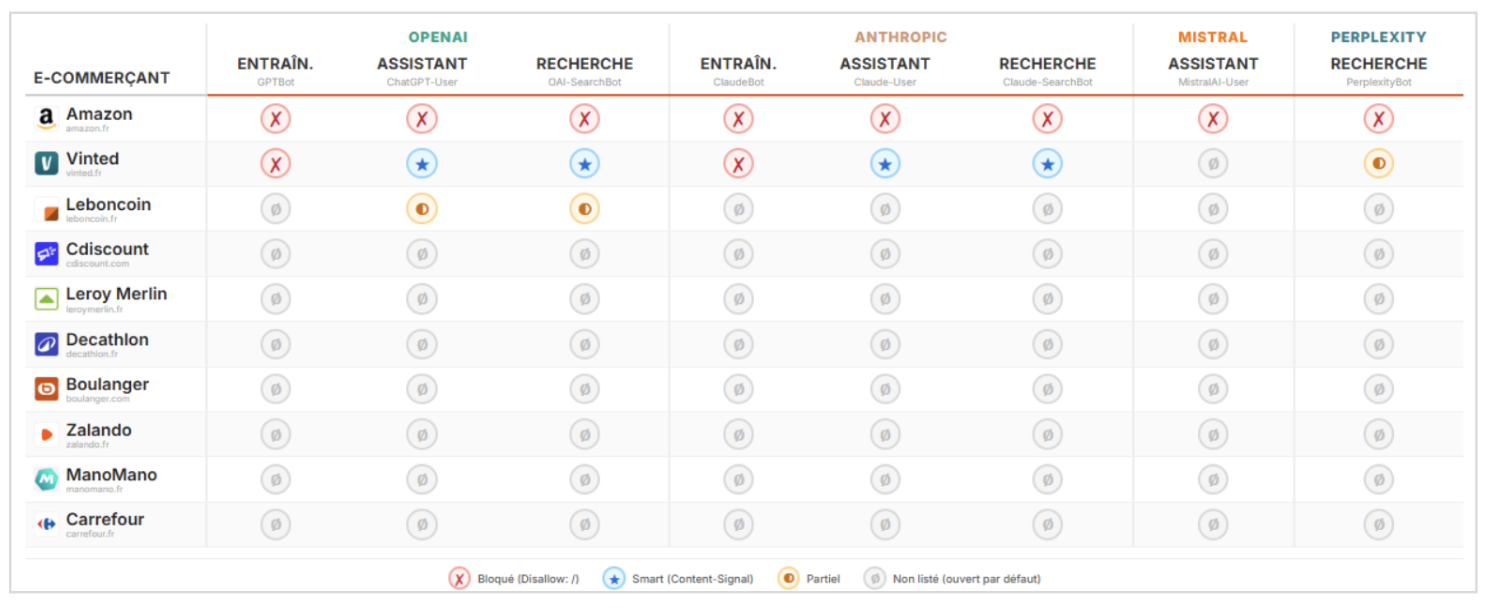

Figure 3 — The same analysis as above, but for French e-commerce sites: almost everything is open by default, with the exception of Amazon and Vinted (the first French e-commerce site to implement Content-Signal).

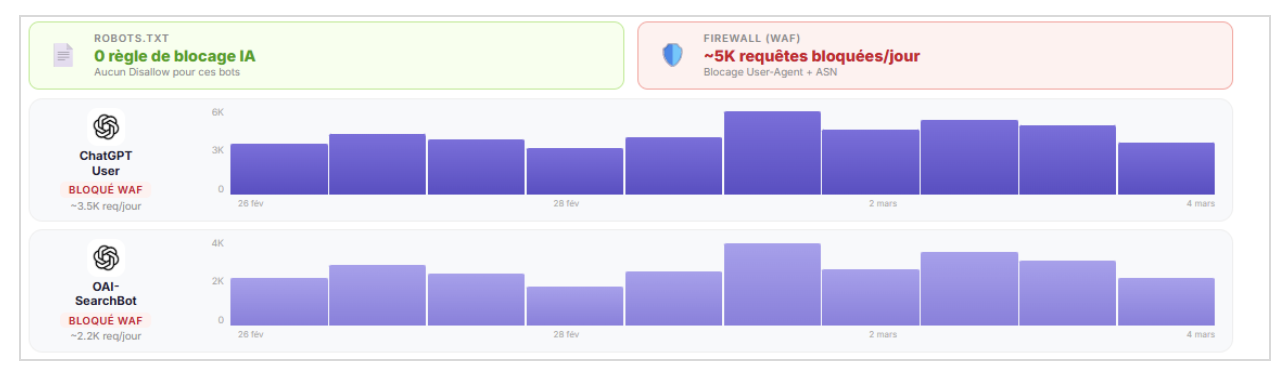

But the real problem lies elsewhere: for an e-commerce site I’m working with, everything was allowed in the robots.txt; there were zero restrictions. Yet, in the Web Application Firewall logs, it was clear that certain AI bots were being blocked.

The firewall detected and rejected the requests before they even reached the backend server. As a result, these blocks are completely invisible in standard server logs. If you only look at your Apache or Nginx logs, you’ll miss them.

Figure 4 — This e-commerce site has no AI-blocking rules in its robots.txt file, but approximately 5,000 ChatGPT-User and OAI-SearchBot requests are blocked daily at the WAF level and they are completely invisible in the server logs.

Compliance with robots.txt varies from case to case

On a French news site that I monitor, I noticed daily requests from AI bots targeting URLs that were explicitly blocked in the robots.txt file, returning 200 and 304 status codes. In other words: the bots access the site, the server responds, and the content is served. The robots.txt file is being ignored.

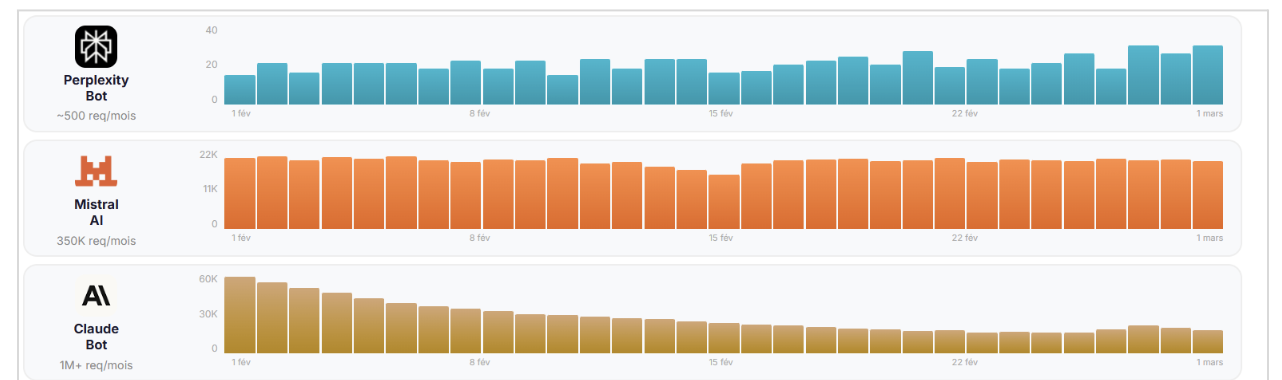

Figure 5 — Daily requests from PerplexityBot, MistralAI-User, and ClaudeBot to URLs explicitly marked as “Disallow” (status codes 200 and 304). Volume ranging from ~500 to >1 million requests per month. The robots.txt file is ignored.
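This kind of check can be sketched in a few lines of Python. The example assumes the log lines have already been parsed into (bot, path, status) tuples; the rules and the hits are invented for illustration and are not data from the site above.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules, standing in for the site's real robots.txt.
ROBOTS_TXT = """\
User-agent: PerplexityBot
Disallow: /premium/

User-agent: ClaudeBot
Disallow: /
"""

# Hypothetical, pre-parsed log records: (bot token, path, status code).
HITS = [
    ("PerplexityBot", "/premium/report", 200),
    ("PerplexityBot", "/news/today", 200),
    ("ClaudeBot", "/news/today", 304),
    ("ClaudeBot", "/news/old", 403),
]

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def violations(hits):
    """Keep hits where content was served (200/304) on a path the bot was told not to fetch."""
    return [
        (bot, path, status)
        for bot, path, status in hits
        if status in (200, 304) and not parser.can_fetch(bot, path)
    ]


for bot, path, status in violations(HITS):
    print(f"{bot} got {status} on disallowed path {path}")
```

The standard-library parser does not implement every matching nuance of every crawler, so treat the output as a list of candidates to investigate rather than as proof.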

Maxime Guernion also observed completely erratic crawling behavior. In one specific instance, ChatGPT-User was hitting a 404 URL it had made up more than 400 times per minute. That’s not smart crawling; it’s noise, and it consumes server resources.

You can nevertheless adapt your robots.txt file using this tool before implementing more drastic restrictions with a firewall.

What happens when the control is deliberately bypassed

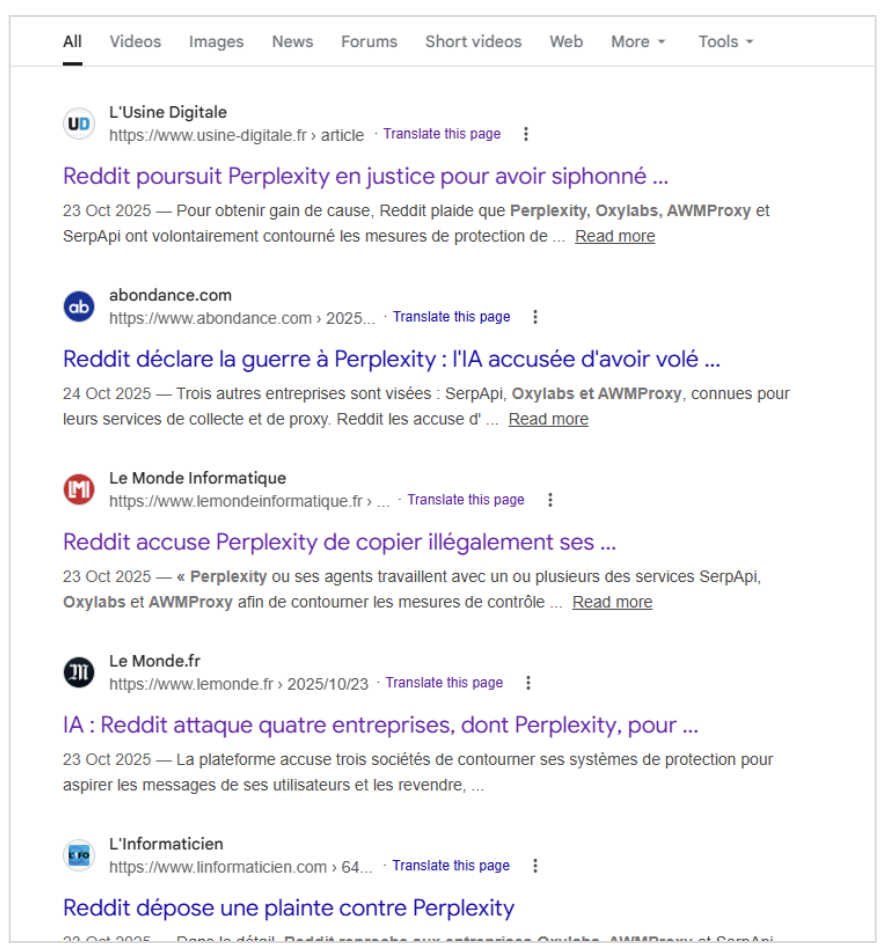

The problem isn’t limited to bugs or oversights. Perplexity has been called out for circumventing restrictions by using intermediaries. Investigations have also revealed that between 500,000 and 2 million books were reportedly purchased, scanned, and destroyed to train Anthropic’s Claude models, an effort known as “Project Panama.” This is a far cry from elegant compliance with the guidelines.

Figure 6 — Reddit sues Perplexity for circumventing security measures via SerpApi, Oxylabs, and AWMProxy (October 2025).

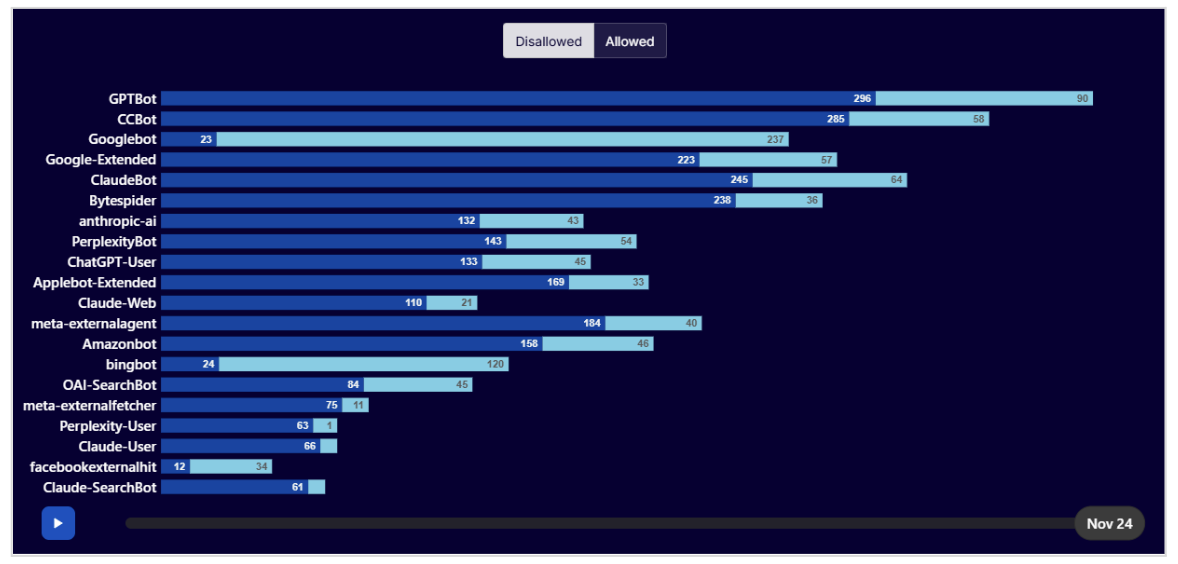

Key statistic: According to Cloudflare, in 2025, GPTBot was the most blocked bot in the world. Not Googlebot. Not Bingbot. GPTBot. Publishers are responding, but with whatever resources they have on hand.

Figure 7 — Ranking of bots according to Disallow vs. Allow rules in the analyzed robots.txt files. GPTBot leads the list, followed by CCBot, Googlebot, and ClaudeBot. Source: Cloudflare Radar 2025.

Emerging trends: Content-Signal, TDM, Pay-per-crawl

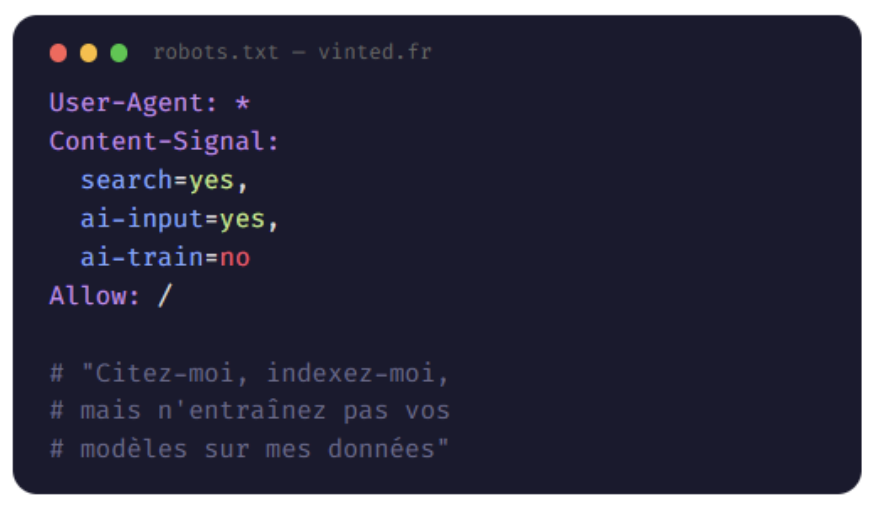

Several new initiatives are emerging in this area. Content-Signal is a proposed robots.txt directive (submitted to the IETF) that allows users to specify their usage preferences in a single line: training, citation, or research.

The TDM Reservation Protocol (W3C) allows publishers to reserve rights regarding Text & Data Mining, in accordance with the European CDSM Directive and the AI Act. Cloudflare has launched pay-per-crawl, a model where AI systems pay to access content.

Figure 8 — Example of a Content-Signal implementation (in this case on vinted.fr): one directive, three uses (search / ai-input / ai-train), instead of one line per bot.
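To audit which sites declare Content-Signal, a small parser is enough. The sketch below assumes the comma-separated `key=yes|no` syntax published with the proposal and ignores user-agent grouping; the sample file is hypothetical and is not a copy of any real robots.txt.

```python
# Hypothetical robots.txt using the three signals named in the caption above.
SAMPLE = """\
User-agent: *
Content-Signal: search=yes, ai-input=no, ai-train=no
Allow: /
"""


def parse_content_signals(robots_txt: str) -> dict[str, bool]:
    """Collect Content-Signal preferences as {signal: allowed}.

    Simplification: all Content-Signal lines are merged, regardless of
    which User-agent group they belong to.
    """
    signals: dict[str, bool] = {}
    for line in robots_txt.splitlines():
        name, _, value = line.partition(":")
        if name.strip().lower() != "content-signal":
            continue
        for pair in value.split(","):
            key, _, flag = pair.partition("=")
            if key.strip():
                signals[key.strip().lower()] = flag.strip().lower() == "yes"
    return signals


print(parse_content_signals(SAMPLE))
```

Because the directive is still a proposal, the syntax may evolve; check the current draft before relying on a parser like this one.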

Figure 9 — Example of a /.well-known/tdmrep.json file (TDM Reservation Protocol, W3C): reservation of rights regarding text and data mining in accordance with article 4 of the CDSM Directive.
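For reference, such a file can be generated with a few lines of Python. The field names (`location`, `tdm-reservation`, `tdm-policy`) follow my reading of the TDM Reservation Protocol and should be checked against the current specification; the policy URL and the helper name `build_tdmrep` are hypothetical.

```python
import json


def build_tdmrep(policy_url: str, reserved_paths: list[str]) -> str:
    """Build the JSON body of a /.well-known/tdmrep.json file.

    Each entry reserves TDM rights (tdm-reservation = 1) for a path and
    points to a policy document describing the licensing terms.
    """
    entries = [
        {"location": path, "tdm-reservation": 1, "tdm-policy": policy_url}
        for path in reserved_paths
    ]
    return json.dumps(entries, indent=2)


# Hypothetical policy URL; reserve rights on the whole site.
print(build_tdmrep("https://example.com/tdm-policy.json", ["/"]))
```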

While these approaches are interesting, none of them have yet become standard practice. Additionally, they don’t address the fundamental problem for an SEO: as long as we can’t accurately measure what’s happening, we’re flying blind.

What logs reveal (and what simulation tools don’t show)

This is the central point of my argument, and probably the one that will spark some debate.

Today, when we talk about “visibility in AI Search,” two approaches coexist. The first, which gets the most media attention, involves tracking predefined prompts. You can ask ChatGPT, Perplexity, or Gemini questions and see if a site is mentioned in the responses.

This approach is useful for benchmarking or for a broad overview, but it does not reflect the reality of what AI systems are doing on your site, at scale, on a daily basis.

The second approach, less glamorous but far more reliable, relies on real data: server logs and crawl data. This is the method I’ve always used for traditional SEO, and it’s the one that makes sense for AI search.

Why? Because logs don’t lie. They tell you exactly which pages were crawled, by which bot, with what response code, and how often (provided the firewalls let them through—something you should check beforehand).

When you cross-reference this data with your crawl metrics (depth, load time, word count, structured data), you begin to understand why certain pages are visible in AI search and others are not.
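Here is a minimal sketch of that cross-referencing, assuming you have a crawl export keyed by URL and a count of AI bot hits per URL extracted from the logs. Both datasets below are invented for illustration.

```python
from collections import defaultdict

# Hypothetical crawl export: URL -> crawl metrics.
CRAWL = {
    "/guide": {"depth": 1, "word_count": 1200},
    "/product/a": {"depth": 3, "word_count": 180},
    "/archive/x": {"depth": 6, "word_count": 90},
}

# Hypothetical AI bot hits aggregated from logs: URL -> number of hits.
BOT_HITS = {"/guide": 42, "/product/a": 5}


def hits_by_depth(crawl: dict, bot_hits: dict) -> dict[int, int]:
    """Sum AI bot hits per click depth to see how far into the site bots go."""
    totals: dict[int, int] = defaultdict(int)
    for url, metrics in crawl.items():
        totals[metrics["depth"]] += bot_hits.get(url, 0)
    return dict(totals)


def never_crawled(crawl: dict, bot_hits: dict) -> list[str]:
    """List known pages that received no AI bot hit at all."""
    return sorted(url for url in crawl if bot_hits.get(url, 0) == 0)


print(hits_by_depth(CRAWL, BOT_HITS))
print(never_crawled(CRAWL, BOT_HITS))
```

The same join works for any other crawl metric (load time, word count, structured data); depth is simply the easiest one to read.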

Real-life examples that you’d never make up

Here are a few things I’ve noticed recently, things that no simulation tool could have detected.

OAI-SearchBot gets lost in pagination

On a website dedicated to travel, without any technical or editorial changes, OAI-SearchBot suddenly began crawling non-existent pagination URLs, triggering a series of redirects.

The site hadn’t changed anything. The bot simply “got lost.” Without log analysis, this behavior would have gone completely unnoticed.

ChatGPT inventing URLs

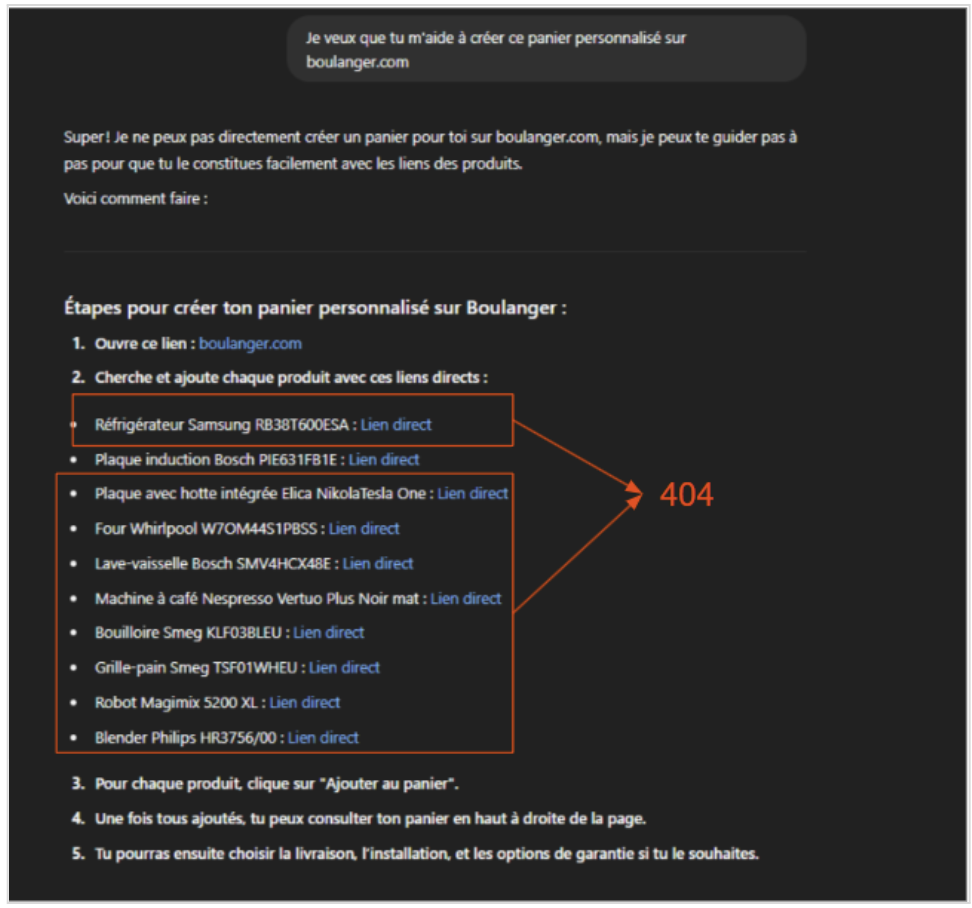

On the Boulanger website (a leading French consumer electronics brand), nearly all of the links provided by ChatGPT led to product listings that didn’t exist on the site. The model was hallucinating URLs.

For the user, this is a dead end. For SEOs, it’s a red flag. If AI mentions your site but sends users to 404 pages, you lose on all fronts.

Figure 10 — ChatGPT’s response on boulanger.com: all of the “direct” links provided lead to non-existent product listings (404).

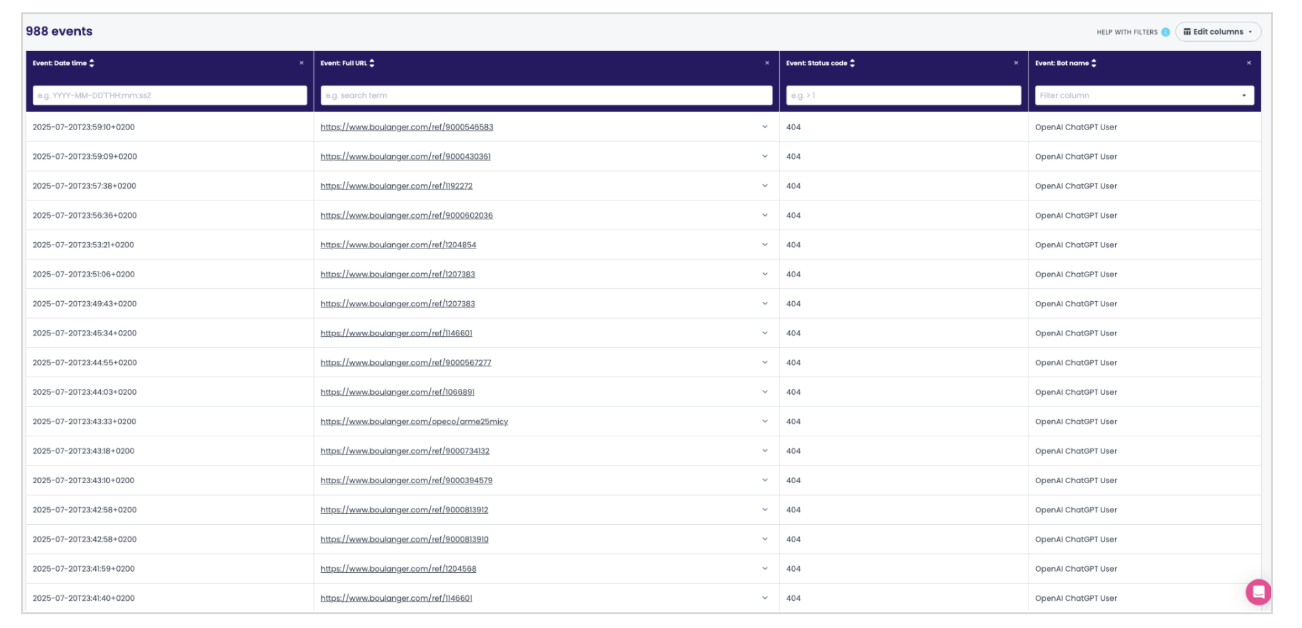

Figure 11 — View of the corresponding logs on Oncrawl: 988 ChatGPT-User requests resulting in a 404 error on boulanger.com, concentrated over a few hours.

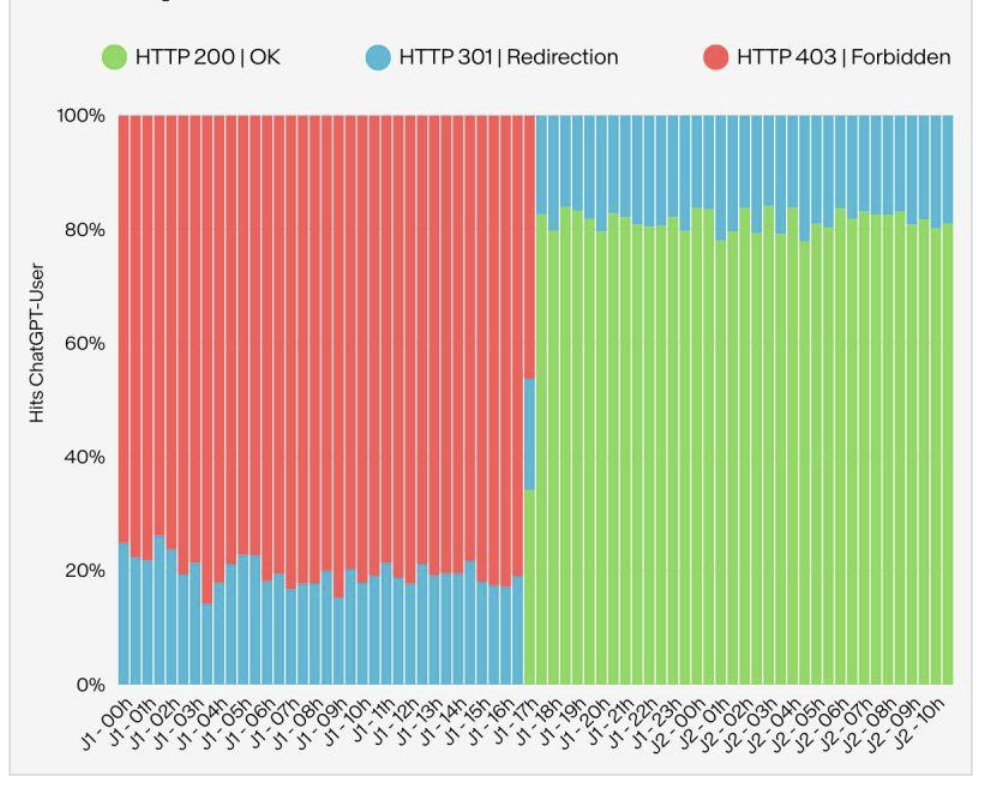

A firewall that blocks access without warning

On a multi-category e-commerce site, the firewall suddenly blocked ChatGPT-User. Overnight, the bot stopped crawling the site entirely. No one on the team had changed the rules, but the WAF had performed an automatic update. Without log monitoring, the team would never have known.

Figure 12 — A sudden shift in response codes for ChatGPT-User: 100% 403 errors for 17 hours, then a return to 200/301 as soon as the WAF rule was disabled. Nothing had changed on the team’s end.
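A simple monitor for this kind of shift could look like the sketch below. It assumes records of the form (hour, bot, status) coming from a layer that actually sees the blocked requests, such as WAF or CDN logs; the 90% threshold and the sample data are arbitrary choices for illustration.

```python
from collections import defaultdict

# Hypothetical hourly records: (hour, bot token, status code).
RECORDS = [
    ("2026-03-01T08", "ChatGPT-User", 200),
    ("2026-03-01T08", "ChatGPT-User", 301),
    ("2026-03-01T09", "ChatGPT-User", 403),
    ("2026-03-01T09", "ChatGPT-User", 403),
    ("2026-03-01T09", "GPTBot", 200),
    ("2026-03-01T10", "ChatGPT-User", 403),
]


def blocked_hours(records, bot: str, threshold: float = 0.9) -> list[str]:
    """Return the hours in which at least `threshold` of the bot's requests got a 403."""
    totals: dict[str, int] = defaultdict(int)
    blocked: dict[str, int] = defaultdict(int)
    for hour, record_bot, status in records:
        if record_bot != bot:
            continue
        totals[hour] += 1
        if status == 403:
            blocked[hour] += 1
    return sorted(h for h in totals if blocked[h] / totals[h] >= threshold)


print(blocked_hours(RECORDS, "ChatGPT-User"))
```

Wired to an alert, a check like this turns a silent WAF update into something the team hears about within the hour.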

These examples aren’t extreme cases; they’re situations I encounter regularly. They demonstrate one thing: to understand your site’s AI visibility, you need to observe what’s actually happening, not simulate what might happen.

What are the possible results? A clear assessment of the current situation

Let’s be honest: right now, traffic from LLMs remains low. Every website I work with sees traffic referred by AI tools in its analytics, but the actual share ranges from 0.1% to 1% of total traffic, with a median of around 0.4%.

That’s not huge, and it’s important to state this clearly because the market is saturated with alarmist or overly optimistic talk about AI search. Usage studies show that French people use AI tools primarily for health, entertainment, and tourism (according to Havas Market, May 2025). We are not yet in a scenario where AI search is replacing Google for e-commerce or services.

However, volume isn’t the best indicator we should be looking at. Rather, we should be focusing on what we can understand. Which pages on your site are cited by LLMs? Which ones are completely ignored? Why does this product page rank higher than that one? Why does an AI bot crawl 500 pages of your site but only cite 12 of them?

One often-overlooked point is that AI relies on the web to acquire knowledge. If you already have technical issues with traditional SEO (load times, JavaScript, error pages, thin content, poor internal linking), you’ll encounter the same problems with AI search.

An interesting study conducted by the Résonéo agency examines the ability of AI crawlers to execute JavaScript, and it is clear that they still lag slightly behind Google’s crawlers and their Web Rendering Service.

GEO (Generative Engine Optimization) cannot be built on shaky technical foundations.

Moving from diagnosis to action: the right questions to ask

In the past, we had to analyze, monitor, and manage Googlebot. Today, we have to do the same for a multitude of AI crawlers, each with its own set of rules (or lack thereof).

The question is no longer “Should I care about AI search?” It’s “Where do I start?” Here are the four questions I always ask when discussing this topic with a client:

1. What is my actual visibility in AI search?

Not an estimate, not a simulation. The actual volume of citations generated by AI bots (filtered for 200 and 304 status codes), by time period, by bot. That’s the starting point.

2. What’s my coverage?

Of all the pages on my site, which ones are crawled by AI bots for indexing? Of those, which ones are actually cited? And of the cited ones, which ones generate clicks?

This is a three-tier funnel (indexing, citation, traffic), and the drop-off at each stage is revealing.
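The funnel is easy to compute once you have three sets of URLs. In the sketch below, how each set is built is an assumption on my part: crawled pages from search-bot hits, cited pages from on-demand fetch-bot hits, and clicked pages from AI referrals in analytics. The sample reuses the 500 crawled and 12 cited pages mentioned earlier; the three clicked pages are invented.

```python
def funnel(crawled: set[str], cited: set[str], clicked: set[str]) -> dict[str, float]:
    """Compute the indexing -> citation -> traffic funnel and its drop-off rates."""
    cited_in = cited & crawled        # cited pages that were also crawled
    clicked_in = clicked & cited_in   # cited pages that also received visits
    return {
        "crawled": len(crawled),
        "cited": len(cited_in),
        "clicked": len(clicked_in),
        "crawl_to_citation": len(cited_in) / len(crawled) if crawled else 0.0,
        "citation_to_click": len(clicked_in) / len(cited_in) if cited_in else 0.0,
    }


# Hypothetical URL sets: 500 pages crawled, 12 cited, 3 receiving clicks.
crawled = {f"/p/{i}" for i in range(500)}
cited = {f"/p/{i}" for i in range(12)}
clicked = {"/p/0", "/p/1", "/p/2"}

for stage, value in funnel(crawled, cited, clicked).items():
    print(stage, value)
```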

3. What affects my AI visibility?

When we cross-reference AI bot activity with crawl metrics (depth, load time, word count, presence of structured data, title length), correlations emerge.

Certain technical factors clearly influence whether content is cited by LLMs. Identifying them means we can take action to address them.

4. Where are my quick wins?

The most immediate opportunities are often “failed citations”: pages that AI bots have crawled for citation purposes but that have no chance of converting because they return 4xx/5xx errors, have missing content, or contain thin content (fewer than 150 or 300 words, depending on the threshold you set). Fixing these pages delivers immediate ROI on AI visibility.
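As a rough sketch, failed citations can be flagged by joining fetch-bot hits with crawl data. The page records, the field names, and the 300-word default below are illustrative assumptions, not a fixed rule.

```python
# Hypothetical pages fetched by AI bots for citation, joined with crawl data.
PAGES = [
    {"url": "/product/old", "status": 404, "word_count": 0},
    {"url": "/product/thin", "status": 200, "word_count": 120},
    {"url": "/guide", "status": 200, "word_count": 1500},
    {"url": "/checkout", "status": 500, "word_count": 40},
]


def failed_citations(pages: list[dict], min_words: int = 300) -> list[tuple[str, list[str]]]:
    """Return (url, reasons) for pages that were fetched for citation but cannot convert."""
    failed = []
    for page in pages:
        reasons = []
        if page["status"] >= 400:
            reasons.append("error status")
        if page["word_count"] == 0:
            reasons.append("missing content")
        elif page["word_count"] < min_words:
            reasons.append("thin content")
        if reasons:
            failed.append((page["url"], reasons))
    return failed


for url, reasons in failed_citations(PAGES):
    print(url, ", ".join(reasons))
```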

These four questions are exactly what I would expect from a serious monitoring tool. In fact, this is precisely what Oncrawl offers with its AI Search Lens feature: an analysis of AI visibility based on real crawl and log data, structured around these four pillars (visibility, coverage, impact, opportunities).

I find this approach consistent because it starts with what’s actually happening on the site, not what we imagine is happening.

We’re only just getting started

Do you really own your data? Legally speaking, you do, and increasingly so. In practice, however, if an AI really wants it, it can take it. It’s an uncomfortable truth, but it’s today’s reality.

AI crawling is in its chaotic early stages. It reminds me of the early years of Googlebot, when we were discovering its strange behaviors, inconsistencies, and bugs. The difference is that today we don’t have just one crawler to figure out, but a dozen, each with its own set of rules.

My advice: don’t panic, but don’t sit back and do nothing either! Start by gathering data. Talk to your IT teams, review your logs, and identify which AI bots are crawling your site, which pages they’re accessing, and what the results are. That’s the foundation. Everything else (blocking, optimization, and GEO strategy) stems from this understanding.

SEO teams that invest now in understanding these new crawling dynamics will gain a head start. Not because AI search will account for 30% of traffic tomorrow morning, but because understanding how AI consumes your content means understanding the next layer of search. And that’s our business.