Editor’s note: This article has been updated to reflect developments in Google Search and SEO best practices.

Latent Semantic Indexing (LSI) has long been a cause for debate among search marketers. If you Google the term ‘latent semantic indexing ranking factor,’ you will encounter both advocates and skeptics. However, as of 2025, there is a clearer consensus: while LSI is a real technique in natural language processing, Google has stated that it has never used LSI for search rankings.

The concept remains useful for understanding how language and meaning work, but it should not be mistaken for an active part of Google’s algorithm. If you’re unfamiliar with the concept, this article will summarize the history and debate on LSI, so you can understand what it means for your SEO strategy.

What is Latent Semantic Indexing?

LSI is a process found in Natural Language Processing (NLP). NLP is a subset of linguistics and information engineering, with a focus on how machines interpret human language. A key part of this study is distributional semantics. This model helps us understand and classify words with similar contextual meanings within large data sets.

Developed in the 1980s, LSI uses a mathematical method that makes information retrieval more accurate. This method works by identifying the hidden contextual relationships between words. It may help you to break it down like this:

- Latent → Hidden

- Semantic → Relationships between words

- Indexing → Information retrieval

How does Latent Semantic Indexing work?

LSI works by applying a partial (truncated) Singular Value Decomposition (SVD). SVD is a mathematical operation that factors a matrix into simpler component matrices, which makes calculations simpler and more efficient.

When analyzing a string of words, LSI removes conjunctions, pronouns, and common verbs, also known as stop words. This isolates the words which comprise the main ‘content’ of a phrase. Here’s a quick example of how this might look:

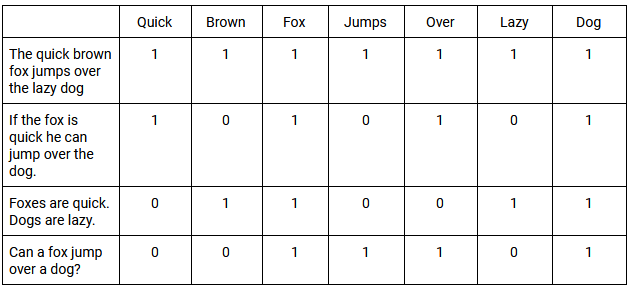
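The stop-word step can also be sketched in a few lines of Python. This is a minimal illustration, not how any search engine does it: the `STOP_WORDS` set and the `content_words` helper are hypothetical names invented for this example, and real stop-word lists are far longer.

```python
# A tiny, illustrative stop-word list; real lists contain hundreds of entries.
STOP_WORDS = {"a", "an", "and", "the", "is", "are", "to", "of", "in", "for", "i", "it", "how", "do"}

def content_words(phrase: str) -> list[str]:
    """Lowercase the phrase, strip surrounding punctuation, and drop stop words."""
    tokens = [word.strip(".,!?'\"").lower() for word in phrase.split()]
    return [token for token in tokens if token and token not in STOP_WORDS]

print(content_words("How do I bake a cake in the oven?"))
# ['bake', 'cake', 'oven']
```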

These words are then placed in a Term Document Matrix (TDM). A TDM is a 2D grid that lists how often each word (or term) occurs in each document within a data set.
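As a rough sketch of what such a grid looks like in practice, the snippet below counts terms across three made-up documents. The documents and the `build_tdm` helper are assumptions for illustration only.

```python
from collections import Counter

# Hypothetical documents, already reduced to their content words.
documents = {
    "doc1": ["apple", "pie", "recipe"],
    "doc2": ["apple", "iphone", "review"],
    "doc3": ["pie", "recipe", "apple", "apple"],
}

def build_tdm(docs):
    """Return (terms, doc_names, matrix), where matrix[i][j] counts term i in document j."""
    doc_names = list(docs)
    counts = [Counter(docs[name]) for name in doc_names]
    terms = sorted({term for words in docs.values() for term in words})
    matrix = [[count[term] for count in counts] for term in terms]
    return terms, doc_names, matrix

terms, doc_names, tdm = build_tdm(documents)
for term, row in zip(terms, tdm):
    print(f"{term:<8}", row)
```

Each row is a term and each column is a document, so the first row here shows that "apple" appears once in the first two documents and twice in the third.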

Weighting functions are then applied to the TDM. A simple example is assigning a value of 1 to every document that contains a given word and a value of 0 to every document that does not. When words occur with the same general frequency in these documents, it is called co-occurrence. Below you will find a basic example of a TDM, and how it assesses co-occurrence across multiple phrases:
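The binary weighting described above, and a simple way to count co-occurrence from it, might look like the following in Python. The term counts are invented, and `shared_documents` is a hypothetical helper that treats co-occurrence as "the number of documents containing both terms", which is one simple reading among several.

```python
# Hypothetical term counts: one row per term, one column per document.
terms = ["apple", "iphone", "pie", "recipe"]
counts = [
    [1, 1, 2],  # apple
    [0, 1, 0],  # iphone
    [1, 0, 1],  # pie
    [1, 0, 1],  # recipe
]

# Binary weighting: 1 if the term appears in the document at all, otherwise 0.
binary = [[1 if count > 0 else 0 for count in row] for row in counts]

def shared_documents(row_a, row_b):
    """Count the documents in which both terms appear."""
    return sum(a * b for a, b in zip(row_a, row_b))

for i in range(len(terms)):
    for j in range(i + 1, len(terms)):
        print(terms[i], terms[j], shared_documents(binary[i], binary[j]))
```

In this toy data, "pie" and "recipe" share two documents while "iphone" and "pie" share none, which is the kind of pattern LSI builds on.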

Using SVD allows us to approximate the patterns in word usage across all documents. The SVD vectors produced by LSI predict meaning more accurately than analyzing individual terms. Ultimately, LSI can use the relationships between words to better understand their sense, or meaning, in a specific context.
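To make this concrete, here is a small sketch using NumPy's `np.linalg.svd`. The five terms and three documents are invented for illustration, and keeping `k = 2` dimensions is an arbitrary choice for such a tiny matrix. In this data, "car" and "automobile" never appear in the same document, but both appear alongside "engine".

```python
import numpy as np

# Hypothetical term-document matrix: rows are terms, columns are documents.
terms = ["car", "automobile", "engine", "recipe", "oven"]
tdm = np.array([
    [1, 0, 0],  # car
    [0, 1, 0],  # automobile
    [1, 1, 0],  # engine
    [0, 0, 1],  # recipe
    [0, 0, 1],  # oven
], dtype=float)

def cosine(a, b):
    """Cosine similarity between two vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

# Full SVD: tdm equals U @ diag(s) @ Vt, with singular values sorted largest first.
U, s, Vt = np.linalg.svd(tdm, full_matrices=False)

# Keep only the k strongest latent dimensions (the truncated SVD).
k = 2
term_vectors = U[:, :k] * s[:k]  # one k-dimensional vector per term

car, automobile, recipe = term_vectors[0], term_vectors[1], term_vectors[3]
print("raw car vs automobile:   ", cosine(tdm[0], tdm[1]))     # 0.0, no shared document
print("latent car vs automobile:", cosine(car, automobile))    # approximately 1.0
print("latent car vs recipe:    ", cosine(car, recipe))        # approximately 0.0
```

Looking only at raw counts, "car" and "automobile" seem unrelated. After the reduction they land on nearly the same vector because they share a context, while "recipe" stays far away.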

How did Latent Semantic Indexing become involved with SEO?

In its formative years, Google found that search engines were ranking websites based on the frequency of a particular keyword. This, however, does not guarantee the most relevant search result. Google instead began ranking websites they considered trusted arbiters of information.

Over time, Google’s algorithms filtered out low-quality and irrelevant websites with greater accuracy. Therefore, marketers began to realize they must understand the meaning behind a search, instead of relying on the exact words being used. This is why Roger Montti once described LSI as “training wheels for search engines” in an article about outdated SEO beliefs, adding that LSI has “little to zero relevance to how search engines rank websites today.”

In the years since, Google representatives such as John Mueller and Gary Illyes have been clear that Google does not use LSI.

The meaning of a search query is closely linked to the intent behind it. Google maintains a document called the Search Quality Evaluator Guidelines. In earlier versions, they introduced four helpful categories for user intent:

- Know Query – This represents seeking information about a topic. A variant on this is the ‘Know Simple’ query, which is when users are searching with a particular answer in mind.

- Do Query – This reflects a desire to engage in a particular activity, such as an online purchase or download. All of these queries can be defined by a sense of ‘interaction.’

- Website Query – This is when users are looking for a specific website or page. These searches indicate a prior awareness of a particular website or brand.

- Visit-in-Person Query – The user is searching for a physical location, such as a brick-and-mortar store or a restaurant.

These categories are still useful, but it is important to note that Google has updated the guidelines several times over the years. Today, evaluators look at more nuanced forms of intent and “needs met” ratings. Marketers should always consult the latest version of the guidelines for current details.

The theory behind LSI – defining a word’s contextual meaning within a phrase – gave Google a competitive edge. However, the idea began to spread that ‘LSI keywords’ were suddenly a golden ticket to SEO success.

Do ‘LSI keywords’ actually exist?

Many publications remain firm advocates of LSI keywords; however, several sources, including Google representatives, have consistently stated that they are a myth. These sources point out several reasons:

- LSI was developed before the World Wide Web and wasn’t intended to be applied to such a large and dynamic dataset.

- The U.S. patent on Latent Semantic Indexing, granted to Bell Communications Research Inc. in 1989, expired in 2008. According to the late Bill Slawski, Google using LSI would be akin to ‘using a smart telegraph device to connect to the mobile web.’

- Google introduced machine learning systems like RankBrain, which transformed volumes of text into ‘vectors’—mathematical entities that help computers understand written language. RankBrain accommodated the web as a constantly expanding dataset, something LSI could not manage. Today, RankBrain is no longer referenced as a distinct system, having been absorbed into newer models.

Ultimately, LSI reveals a truth marketers should adhere to: exploring a word’s unique context helps us understand user intent better than keywords stuffed into content. However, this does not confirm that Google ranks based on LSI. It may be better to think of LSI as a philosophy rather than an exact science.

Returning to Roger Montti’s quote about LSI as “training wheels for search engines,” we can now say that Google has long since taken those training wheels off. In fact, Google has developed far more advanced AI systems.

In 2019, Pandu Nayak, Vice President of Search, announced that Google had begun using an AI system named BERT (Bidirectional Encoder Representations from Transformers). This was one of the biggest algorithm updates at the time, affecting roughly one in ten English-language searches in the U.S. at launch.

When analyzing a search query, BERT considers a single word in relation to all of the other words in that particular phrase. This analysis is bidirectional, in that it considers the words both before and after a specific word. The removal of a single word could drastically impact how BERT understands a phrase’s unique context.

This marked a contrast from LSI, which omits any stop words from its analysis. The example below shows how removing stop words can alter how we understand a phrase:

Despite being a stop word, ‘find’ is the crux of the search, which we would define as a ‘visit-in-person’ query.

Since BERT, Google has advanced further with the introduction of MUM (Multitask Unified Model) in 2021, which helps search understand and connect information across languages and formats, and AI Overviews in 2024, which generate contextual summaries directly in search results.

These updates highlight that Google’s focus is no longer on keywords alone, but on a deeper semantic and contextual understanding of information.

So what should marketers do?

Initially, LSI was thought to be able to help Google match content with relevant queries. However, we now know that Google does not use LSI and that the debate surrounding “LSI keywords” is based on a misunderstanding. Despite this, marketers can still take many steps to ensure their work remains strategically relevant.

Articles, web copy and campaigns should be optimized to include synonyms and variants. This accounts for the ways people with similar intent use language differently.

But more importantly, marketers must continue to write with authority and clarity. This is an absolute must if they want their content to solve a specific problem. This problem could be a lack of information or the need for a certain product or service. Once marketers do this, it shows that they truly understand user intent.

They should also make frequent use of structured data. Whether it’s a website, a recipe or an FAQ, structured data provides the context for Google to make sense of what it is crawling and is increasingly important for rich results and for the new AI Overviews.
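As one possible illustration, the sketch below generates FAQ markup in JSON-LD using the schema.org `FAQPage`, `Question` and `Answer` types. The `faq_jsonld` helper and the sample question are hypothetical, and adding markup like this does not guarantee that Google will show a rich result.

```python
import json

def faq_jsonld(questions_and_answers):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in questions_and_answers
        ],
    }

markup = faq_jsonld([
    ("Does Google use LSI?", "No. Google representatives have said it does not."),
])

# The printed JSON would be embedded in the page inside a
# <script type="application/ld+json"> tag.
print(json.dumps(markup, indent=2))
```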

Finally, content strategies today must also take into account Google’s Helpful Content guidelines, which stress the importance of people-first content that demonstrates expertise, experience, authoritativeness and trustworthiness.

In short, while LSI may still be a useful academic concept for understanding language, it is not part of Google’s ranking systems. In the end, that doesn’t really matter: marketers should focus on semantic SEO, intent-driven content, and modern AI-driven developments in search.