SEO means, for the most part, working with large amounts of data. A single website can have thousands of subpages, keywords, and internal links to juggle. If that site operates in a competitive industry, it will also need to attract external links from thousands of domains.

Think about what happens if you’re optimizing more than one website. The quantity of data multiplies with every site you add, and you end up with some real Big Data.

Now add Google’s algorithm changes, the unforeseen actions of your competitors, and disappearing backlinks to the mix. How do you manage all of that data?

As the industry evolves and there is more information to keep track of, regular full site audits are becoming a thing of the past.

With limited time and resources, there has been a distinct shift from frequent audits towards site monitoring, a process that is now integrated into a more automated workflow. The difference between auditing and monitoring is this: when auditing, you have to sort through the information by hand to determine where your site is underperforming or where there are errors that need to be fixed. With monitoring, that information is delivered directly to you, which saves time and makes the process more efficient.

Photo by Luke Chesser from Unsplash

Monitoring

The market doesn’t like a standstill, and the SEO industry is no different. All features and indicators relevant to SEO can be monitored with specialized tools.

Unfortunately, there’s no single tool that monitors everything. But with the help of just a few apps, you can keep track of what’s important. You can also combine the data from several sources (more on that later). Essentially, monitoring tools ensure that you receive the most important information via reports and alerts.


Reports

Reports allow you to analyze how individual values vary over time, and they enable you to demonstrate the value of your SEO efforts. As important as they may be, your added value as an SEO lies in developing and implementing a strategy, not in creating reports.

Tools like Oncrawl can help you produce customized reports to keep track of your site’s health, manage site migrations or monitor search engine bot traffic and behavior. Below are some of the specific reports you can find in Oncrawl:

Trends for a given metric or KPI

Track and report the metrics that matter to your business and how they vary over time, be it backlink growth, organic traffic, status codes or duplicate content. You can select the metrics you wish to track and customize what your dashboard reports.

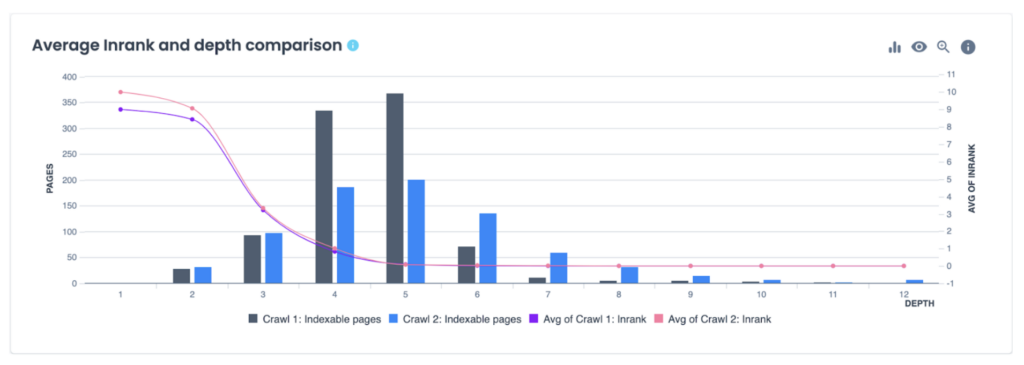

Comparison at two points in time

A crawl-over-crawl comparison lets you compare the results of two crawls to show the impact of any changes to your site. It is extremely useful for tracking changes over time, managing site migrations, or monitoring site modifications.
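To illustrate the idea behind a crawl-over-crawl comparison, here is a minimal Python sketch. It assumes each crawl has been exported as a simple mapping from URL to HTTP status code; the function name `compare_crawls` and this data format are hypothetical and do not reflect Oncrawl’s actual export format.

```python
from typing import Dict, List, Tuple

def compare_crawls(before: Dict[str, int], after: Dict[str, int]) -> dict:
    """Compare two crawls given as {url: status_code} mappings (hypothetical format)."""
    # URLs that only appear in one of the two crawls
    new_urls = sorted(after.keys() - before.keys())
    removed_urls = sorted(before.keys() - after.keys())
    # URLs present in both crawls whose status code changed
    status_changes: List[Tuple[str, int, int]] = [
        (url, before[url], after[url])
        for url in sorted(before.keys() & after.keys())
        if before[url] != after[url]
    ]
    return {"new": new_urls, "removed": removed_urls, "status_changes": status_changes}

crawl_1 = {"/": 200, "/shop": 200, "/old-page": 200}
crawl_2 = {"/": 200, "/shop": 404, "/new-page": 200}
report = compare_crawls(crawl_1, crawl_2)
for key, value in report.items():
    print(key, value)
```

A real comparison would cover many more fields per URL (titles, depth, canonicals, internal links), but the principle of set differences plus per-URL diffs stays the same.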

Aggregate reports

In order to fully monitor on-page and off-page SEO as well as your site’s infrastructure, it’s beneficial to use multiple tools (we examine these in more detail below). Configuring alerts in each of your chosen tools ensures that you don’t miss any important changes on your website.

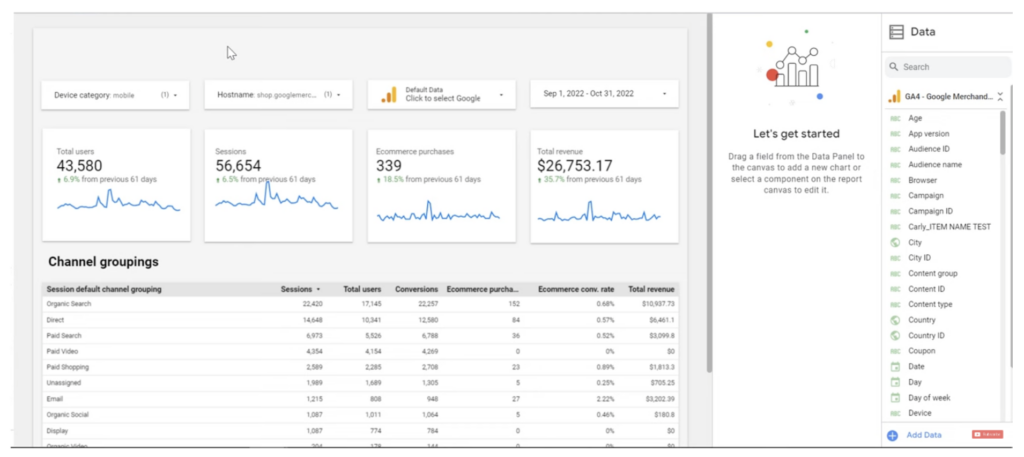

But what about the reports? Logging into several different applications every day and comparing the data in separate windows can be extremely tedious. Fortunately, most monitoring applications share their data via API, so you can combine, or aggregate, several data sources in a single dashboard.

One common place to aggregate data is in Google Looker Studio.
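Dashboard tools handle the blending for you, but the underlying idea is simple: line up each source’s values on a shared key such as the date. The sketch below assumes the data has already been pulled from each tool’s API into `{date: value}` dictionaries; the source names `gsc_clicks` and `ga_sessions` are hypothetical labels, and the API calls themselves are out of scope.

```python
from collections import defaultdict
from typing import Dict, Mapping

def aggregate(sources: Mapping[str, Mapping[str, float]]) -> Dict[str, Dict[str, float]]:
    """Combine {source: {date: value}} into {date: {source: value}} rows, sorted by date."""
    rows: Dict[str, Dict[str, float]] = defaultdict(dict)
    for source, series in sources.items():
        for date, value in series.items():
            rows[date][source] = value
    # ISO dates (YYYY-MM-DD) sort correctly as strings
    return dict(sorted(rows.items()))

data = {
    "gsc_clicks": {"2024-05-01": 120, "2024-05-02": 135},
    "ga_sessions": {"2024-05-02": 310},
}
for date, values in aggregate(data).items():
    print(date, values)
```

Dates missing from a source simply have no entry for it, which keeps gaps visible instead of filling them with invented zeros.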

Alerts

Alerts are important for monitoring because they immediately inform you of any significant changes to your selected features and indicators. Staying up to date on changes that may impact your SERP rankings or customer experience is crucial to your SEO success.

The importance of alerting thresholds

As your business and website are unique, so too will be the metrics you choose to monitor – and the sensitivity of the alert. Thresholds help you adjust the sensitivity of an alert. For example, you might want to be alerted if more than a certain number of your money pages have page speed issues, but just one page loading more slowly than usual might not be cause for concern.

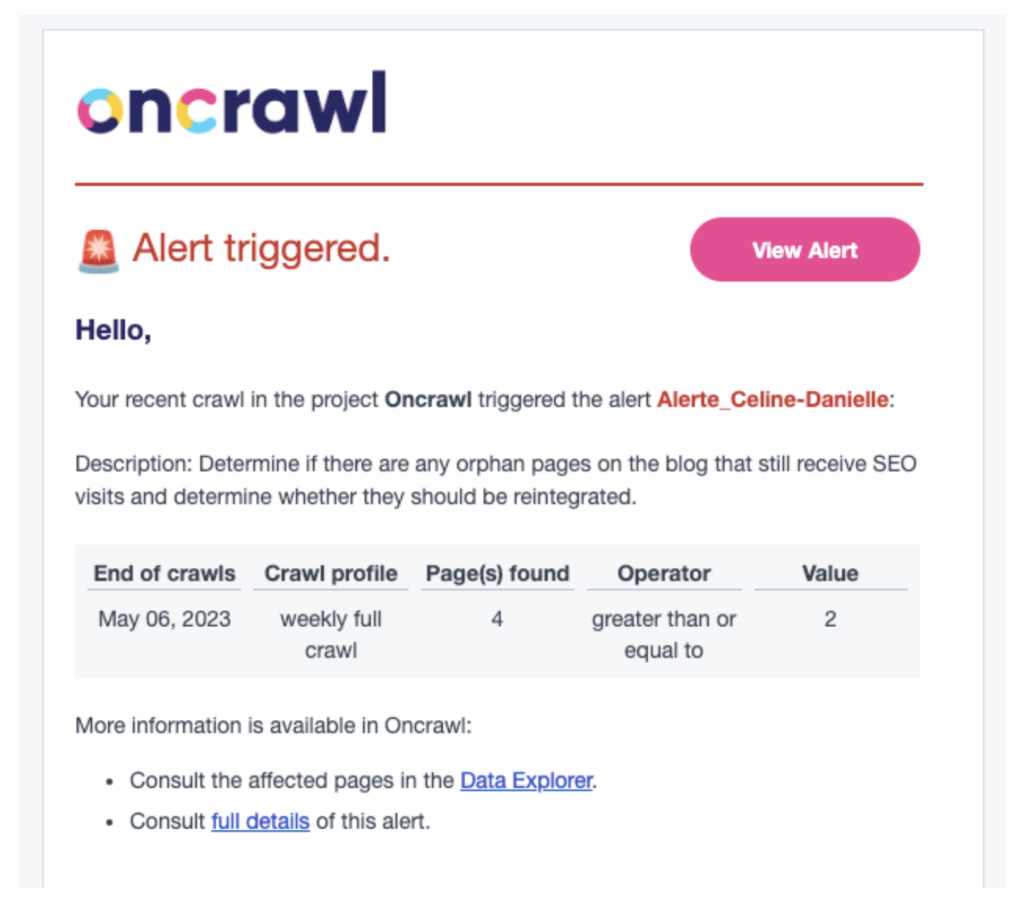

If you’re using Oncrawl, alerts in the Oncrawl app trigger based on fully customizable conditions. You’re in charge of choosing what you monitor and setting a threshold that adjusts alert sensitivity exactly the way you need it.
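The money-page example above can be expressed as a simple threshold rule. This is a minimal sketch, not how Oncrawl evaluates its alert conditions: it assumes you already have load times in milliseconds per URL, and the 3,000 ms limit and the "more than 3 pages" threshold are arbitrary illustrative values.

```python
from typing import Dict, List, Set

def slow_money_pages(load_times_ms: Dict[str, float], money_pages: Set[str],
                     slow_ms: float) -> List[str]:
    """Money pages whose measured load time exceeds slow_ms (unmeasured pages are skipped)."""
    return sorted(url for url in money_pages
                  if url in load_times_ms and load_times_ms[url] > slow_ms)

def should_alert(load_times_ms: Dict[str, float], money_pages: Set[str],
                 slow_ms: float = 3000.0, max_slow_pages: int = 3) -> bool:
    """Fire only when MORE than max_slow_pages money pages are slow."""
    return len(slow_money_pages(load_times_ms, money_pages, slow_ms)) > max_slow_pages

times = {"/a": 3500, "/b": 1200, "/c": 4100, "/d": 900}
money = {"/a", "/b", "/c", "/d"}
print(slow_money_pages(times, money, 3000), should_alert(times, money))
```

Raising `max_slow_pages` makes the alert less sensitive; setting it to 0 turns it into an alert on any single slow money page.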

Who can receive alerts

Send alerts to the right people, even if there are a handful of them. Regardless of the size of your team, key stakeholders, or even developers, should be kept informed about what’s happening on your website.

Alert channels

Alerts can be sent through multiple channels, including communication tools like Slack or Microsoft Teams, so that a whole group receives a single shared notification.

Alert frequency

The frequency of your alerts can make a big difference in whether you can react in time. Adjust the frequency of any monitoring you set up: twice a day, daily, weekly, or whatever makes sense for the KPI you’re tracking, your team and your objectives.

What can (and should) be monitored in SEO?

Website infrastructure

The fact that you own a website (or your customer owns it) and have access to the CMS and all of its configurations doesn’t mean that you know everything there is to know about it. It’s very easy to miss important problems that affect more than just SEO.

Availability (uptime)

If the website doesn’t work, you know what happens – it won’t convert anybody, and it may even discourage a user from choosing a given brand. If the problems persist, it may even result in deindexing.

Correct functioning

A glitch in an important function of a website is a silent killer of conversion. Let’s say for example that your website is operational, but you can’t place an order because the button at the last step of the purchasing process doesn’t work. Sure, you’ll see the effects in the form of a drop in sales, but it’s much better to detect a problem and fix it before you start losing money.

Domain and SSL certificate expiration

As we all know, domain name registrars and SSL certificate issuers are very glad to remind you to renew your subscription. However, things happen, especially if you use multiple email inboxes and aliases, and a notification can slip through. An additional reminder from an external monitoring resource won’t hurt.
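For the SSL side, a basic external check only needs Python’s standard library. This is a minimal sketch: `fetch_not_after` opens a live TLS connection and reads the certificate’s `notAfter` field, and the 21-day warning window is an arbitrary choice. Domain expiry is not covered here, since it requires registry (WHOIS/RDAP) data.

```python
import socket
import ssl
import time

def days_until_expiry(not_after: str, now: float) -> float:
    """Days between `now` (Unix timestamp) and a certificate notAfter date."""
    return (ssl.cert_time_to_seconds(not_after) - now) / 86400

def fetch_not_after(hostname: str, port: int = 443, timeout: float = 10.0) -> str:
    """Open a TLS connection and return the server certificate's notAfter field."""
    context = ssl.create_default_context()
    with socket.create_connection((hostname, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=hostname) as tls:
            return tls.getpeercert()["notAfter"]

def certificate_expires_soon(hostname: str, warn_days: int = 21) -> bool:
    """True if the certificate served by hostname expires within warn_days."""
    return days_until_expiry(fetch_not_after(hostname), time.time()) < warn_days
```

Running `certificate_expires_soon("example.com")` on a schedule and forwarding a `True` result to your alert channel gives you an independent second reminder.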

Presence on blacklists

A red warning screen displayed by the browser instead of the website itself is a situation everybody would prefer to avoid. It most often means that the website has been infected by malware and has become a threat to its users. In that case, you must react immediately so that as few users as possible see the warning.

Robot blockers

If you’ve never experienced this, feel free to cast the first stone. Imagine that the draft version of a website is moved to production along with a robots.txt file that blocks search engine robots. Or perhaps with a noindex directive in the X-Robots-Tag HTTP header, which is invisible at first glance. It’s better to detect such a mistake before Google updates its index at our own “request”.
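Both mistakes can be caught with a check that runs after each deployment. The sketch below assumes you have already fetched the live robots.txt content and the homepage’s response headers (for example with `urllib.request`); the function names are hypothetical. It uses the standard library’s `urllib.robotparser` and a simple pattern match for `noindex`/`none` in X-Robots-Tag.

```python
import re
from urllib.robotparser import RobotFileParser

def robots_txt_blocks(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """True if the robots.txt content forbids user_agent from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)

def x_robots_tag_blocks(headers: dict) -> bool:
    """True if an X-Robots-Tag response header carries a noindex or none directive."""
    for name, value in headers.items():
        # Header names are case-insensitive; values may be prefixed with a bot name
        if name.lower() == "x-robots-tag" and re.search(r"\b(noindex|none)\b", value.lower()):
            return True
    return False

print(robots_txt_blocks("User-agent: *\nDisallow: /", "https://example.com/"))
```

A production check would also handle several X-Robots-Tag headers on one response; a plain dictionary keeps only one value per name.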

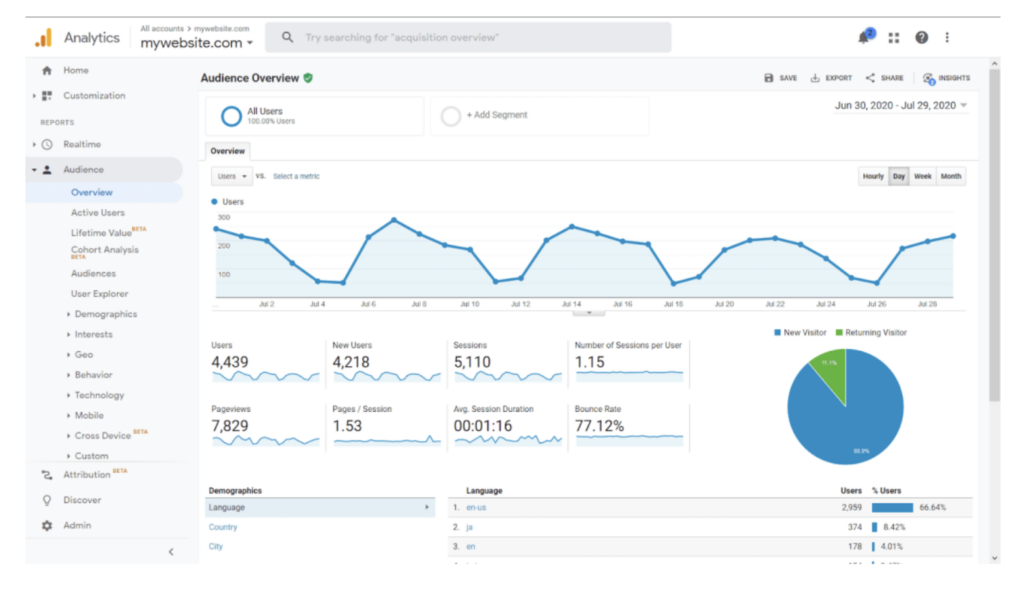

Google Analytics

On-site technical SEO monitoring

Technical SEO should be a collaborative effort: spot red flags when other people work on your website and prevent regressions.

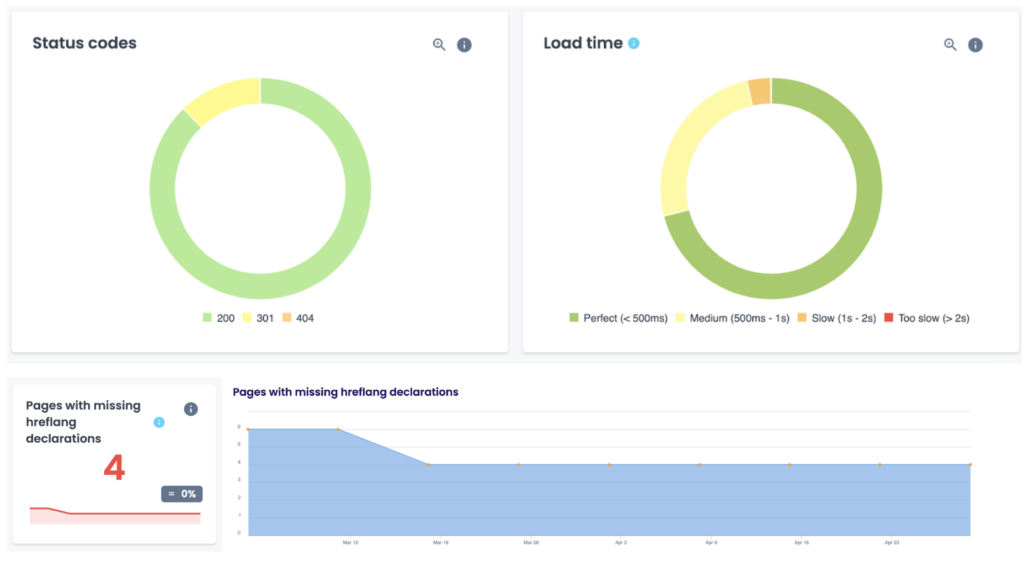

Pages returning a 404 status code

A 404 error indicates that the server could not find anything matching the URL the user requested. This typically happens when content has been removed or moved to another URL without a redirect. Broken links hurt user experience, and too many of them create a high overall error rate for your site.

5xx errors

A 5xx status code indicates that the server failed to fulfill a request. Different 5xx codes identify specific issues, e.g. 500 Internal Server Error or 503 Service Unavailable. It’s essential to fix 5xx errors quickly, as they can lower a page’s ranking or drop it from the index altogether.
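Both status-code checks can share one alert rule with different tolerances. This minimal sketch assumes a crawl exported as a hypothetical `{url: status_code}` mapping; the defaults (alert on more than 10 pages returning 404, and on any 5xx at all) are illustrative choices, not recommendations from a specific tool.

```python
from typing import Dict, List

def status_alerts(statuses: Dict[str, int], max_404: int = 10,
                  max_5xx: int = 0) -> List[str]:
    """Build alert messages from a {url: status_code} crawl export."""
    not_found = sorted(url for url, code in statuses.items() if code == 404)
    server_errors = sorted(url for url, code in statuses.items() if 500 <= code <= 599)
    alerts = []
    # A few 404s can be tolerated; max_404 sets how many
    if len(not_found) > max_404:
        alerts.append(f"404 errors on {len(not_found)} page(s)")
    # With the default max_5xx=0, any server error triggers an alert
    if len(server_errors) > max_5xx:
        alerts.append(f"5xx errors on {len(server_errors)} page(s): {', '.join(server_errors)}")
    return alerts

crawl = {"/": 200, "/a": 404, "/b": 503, "/c": 500}
print(status_alerts(crawl))
```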

A noindex home page

A noindex tag is an on-page directive that instructs search engines not to index a particular page. If search engines can’t index your homepage, it won’t rank in the SERPs, and if no one can find your site in the search results, that’s not good for business.
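A homepage noindex check can be as small as parsing the robots meta tags in the page’s HTML. This is a minimal sketch using the standard library’s `html.parser`; it assumes the homepage HTML has already been downloaded, and the class and function names are hypothetical. The same directive can also be sent in the X-Robots-Tag HTTP header, so a complete check would look there too.

```python
from html.parser import HTMLParser

class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() in ("robots", "googlebot"):
            content = attributes.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]

def has_noindex(html: str) -> bool:
    """True if the page carries a noindex (or none) robots meta directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives or "none" in parser.directives

print(has_noindex('<head><meta name="robots" content="noindex, follow"></head>'))
```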

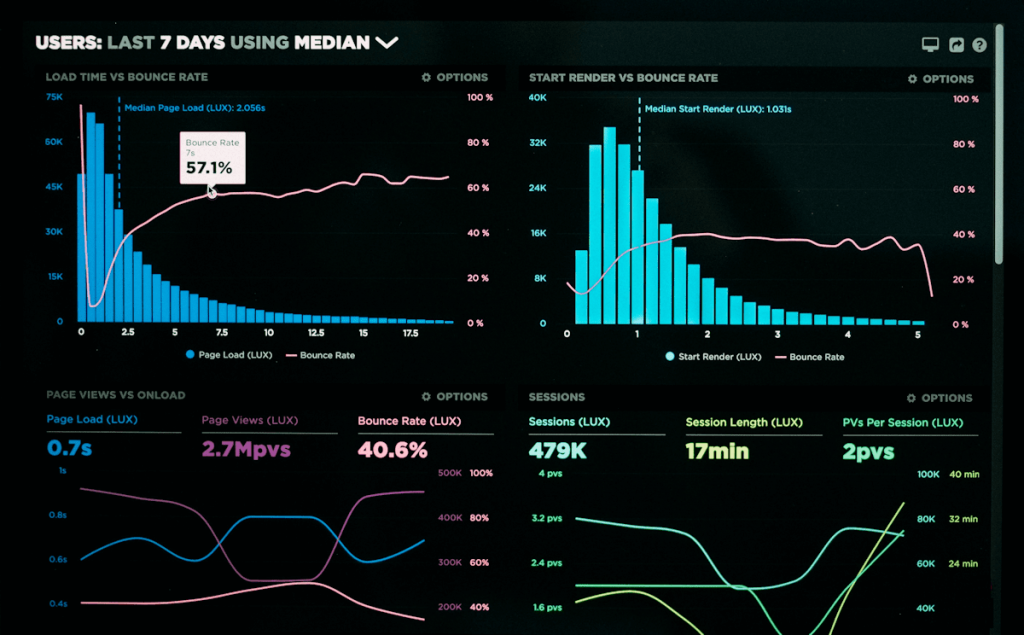

Loading speed

After a website’s been optimized for speed, you can’t just forget about it and move on. After all, it would only take one person publishing a huge bitmap on the main page to ruin all your work. You should detect such situations quickly in order to rectify them as soon as possible.
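One way to catch that oversized image is a page-weight budget. This minimal sketch assumes you can obtain resource sizes in bytes per page (for example from a crawler or browser tooling); the 500 KB per-resource budget and the 20% tolerance against a baseline are arbitrary illustrative values.

```python
from typing import Dict, List

def oversized_resources(resource_sizes: Dict[str, int], budget_bytes: int = 500_000) -> List[str]:
    """Resources heavier than the budget, largest first."""
    heavy = [(url, size) for url, size in resource_sizes.items() if size > budget_bytes]
    return [url for url, _ in sorted(heavy, key=lambda item: item[1], reverse=True)]

def page_weight_regressed(current_bytes: int, baseline_bytes: int, tolerance: float = 0.2) -> bool:
    """True if the page is more than `tolerance` (20% by default) heavier than its baseline."""
    return current_bytes > baseline_bytes * (1 + tolerance)

sizes = {"/img/hero.bmp": 6_000_000, "/img/logo.png": 20_000, "/img/banner.jpg": 700_000}
print(oversized_resources(sizes), page_weight_regressed(sum(sizes.values()), 1_000_000))
```

Page weight is only a proxy for loading speed, but it is cheap to measure on every crawl and flags exactly the kind of accidental upload described above.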

The organic traffic on the website

Keeping an eye on the flow of organic traffic, particularly the bounce rate and the conversion rate, is something you probably already pay attention to. However, do you monitor it regularly?
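Monitoring organic traffic regularly can start with a simple drop detector. This sketch assumes a list of daily organic sessions exported from your analytics package (hypothetical input) and flags the latest day when it falls more than 30% below the mean of the previous seven days; both numbers are arbitrary. Because traffic often follows a weekly pattern, comparing against the same weekday would be more robust.

```python
from statistics import mean
from typing import List

def traffic_drop(daily_sessions: List[int], window: int = 7, max_drop: float = 0.3) -> bool:
    """True if the latest day is more than max_drop below the mean of the previous `window` days."""
    if len(daily_sessions) < window + 1:
        return False  # not enough history to compare against
    baseline = mean(daily_sessions[-window - 1:-1])
    latest = daily_sessions[-1]
    return baseline > 0 and latest < baseline * (1 - max_drop)

history = [1000, 980, 1020, 1010, 990, 1000, 1000, 600]
print(traffic_drop(history))
```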

Orphan pages

Pages that exist, or are indexed, but aren’t linked within the website’s structure send conflicting signals to search engines about their importance. As a result, they often miss out on ranking and traffic opportunities. The existence or creation of orphan pages is a key element to monitor for many SEOs.
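A common way to detect orphan pages is to compare the URLs a crawler reaches by following links with URLs known from other sources, such as sitemaps, server logs or analytics. The sketch below assumes both lists are already available and applies a deliberately simple normalization (lowercase host, no trailing slash, no fragment); the function names are hypothetical.

```python
from typing import Iterable, List
from urllib.parse import urlsplit, urlunsplit

def normalize(url: str) -> str:
    """Lowercase the host, drop the fragment and any trailing slash (except for the root)."""
    parts = urlsplit(url)
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme, parts.netloc.lower(), path, parts.query, ""))

def find_orphan_pages(crawled_urls: Iterable[str], known_urls: Iterable[str]) -> List[str]:
    """URLs known from other sources that the link-following crawl never reached."""
    crawled = {normalize(u) for u in crawled_urls}
    return sorted({normalize(u) for u in known_urls} - crawled)

crawled = ["https://example.com/", "https://example.com/shop/"]
known = ["https://example.com/shop", "https://example.com/old-promo", "https://Example.com/"]
print(find_orphan_pages(crawled, known))
```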

Off-site monitoring

You likely observe the external results of your actions, but observing isn’t the same as monitoring. It’s good practice to regularly check the reports and to receive alerts at key moments – instead of looking at the results all the time. What are some metrics you should be monitoring?

SERP position

SERP positions are the basis for measuring the effects of SEO activities and often for determining how much SEO services are paid. They are very difficult to track on your own, not so much because Google blocks many repeated queries, but because of the extensive personalization of search results and the constant appearance of new snippets that change how the SERPs look.

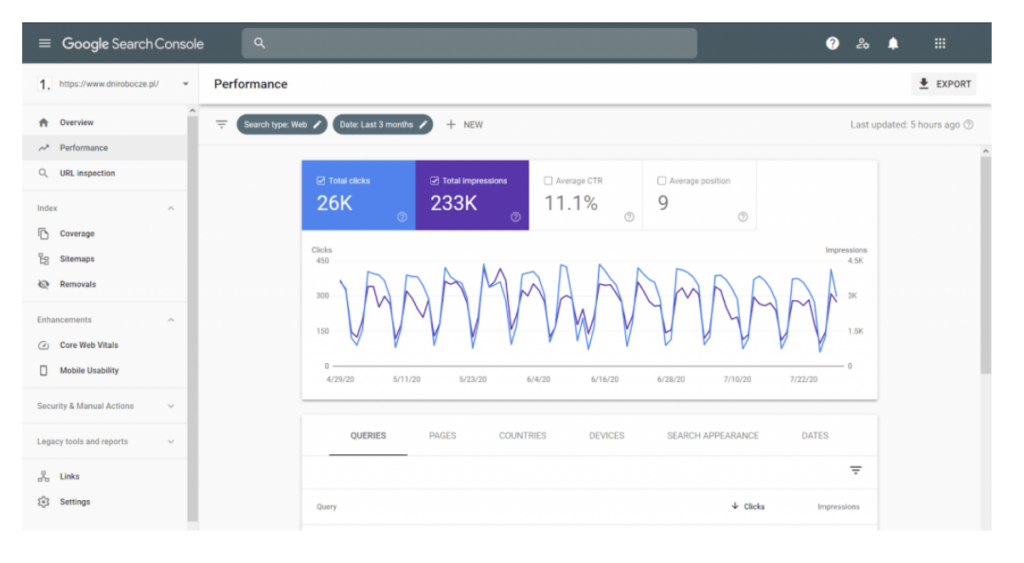

Google efficiency

Impressions in the SERPs, CTR and clicks, as well as crawl activity and crawl errors. This is first-hand data, coming directly from Google via Google Search Console.

Other indicators

Metrics such as Trust Flow (TF) or Citation Flow (CF) are used mainly to assess the value of websites as potential link sources. However, it’s also worthwhile to monitor them for the website you are optimizing.

Backlinks

Backlinks are the SEO “currency” and the direct result of content marketing and many other activities. Some are worth their weight in gold; others are worth much less. Backlinks need to be monitored on two levels:

- The general level: the number and overall quality of links.

- The detailed level: tracking specific acquired links to check that they haven’t been deleted or edited (e.g. by adding the “nofollow” attribute).
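To make the detailed level concrete, here is a minimal sketch that checks whether a linking page still contains a link to your site and whether that link is followed. It assumes the linking page’s HTML has already been downloaded; the names are hypothetical, and the plain `startswith` prefix match is a simplification (it would also match a host such as `example.com.other.net`).

```python
from html.parser import HTMLParser

class BacklinkParser(HTMLParser):
    """Record the rel tokens of every <a> whose href starts with target_prefix."""

    def __init__(self, target_prefix: str):
        super().__init__()
        self.target_prefix = target_prefix
        self.rel_values = []  # one list of rel tokens per matching link

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        if (attributes.get("href") or "").startswith(self.target_prefix):
            self.rel_values.append((attributes.get("rel") or "").lower().split())

def backlink_status(html: str, target_prefix: str) -> str:
    """Return "missing", "nofollow", or "followed" for links pointing to target_prefix."""
    parser = BacklinkParser(target_prefix)
    parser.feed(html)
    if not parser.rel_values:
        return "missing"
    # One followed link is enough for the page to pass a followed backlink
    if any("nofollow" not in rel for rel in parser.rel_values):
        return "followed"
    return "nofollow"

print(backlink_status('<a rel="nofollow" href="https://example.com/">x</a>', "https://example.com"))
```

Storing the result for each tracked link and alerting when it changes from "followed" to "nofollow" or "missing" covers both cases described above.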

Google Search Console

The tools

The most important tool for tracking the effects of SEO activities is the above-mentioned Google Search Console. Unfortunately, it doesn’t offer configurable alerts, such as notifications about a decline in SERP positions. GSC does send various types of alerts, but Google decides what triggers them; you can’t define your own.

Google Analytics, or any other package you use to track traffic and user behavior on your website, is also very important. Here you can define your own alerts and get notified when something unusual happens to your website’s traffic.

Majestic is a source of very valuable data about an optimized website, including the links pointing to it. It maintains its own index of the web, built by crawling links much as a search engine does.

Some of the advanced SEO platforms – like Oncrawl for example – offer continuous monitoring of the overall health and technical SEO of a website. The service tracks various metrics collected by crawling and log analysis. Oncrawl’s alerting system offers a lot of flexibility and customization around defining precise or complex metrics, thresholds, notifications, and frequency of alerts.

Monitoring: a necessity for effective SEO?

With so much data to track, monitoring has become an essential part of any SEO strategy. Monitoring is the most effective way to help you figure out what is currently working for you and what you need to improve in order to attain higher rankings and optimize the user’s experience.

It’s important to take the time to find the right mix of tools for your site, but whatever you choose, you should be monitoring and setting alerts for the main elements of your site’s infrastructure, technical SEO performance, and off-site SEO. Work smarter and have the information delivered right to you.