SEO is a universal language. Whether you’re working on a US domain or a Danish one, the technical quick wins will have the same influence on site performance.

It’s all about having no errors and being as user friendly as possible. Search engines like Google keep putting more weight on technical SEO and the overall page experience as ranking factors.

So when my company begins a new roadmap for a client, the first thing we do is make sure that the technical parts of the website work.

What is the Health score, and how do we improve it when it needs improving?

A Health score can reveal serious errors. This was the case when I began working for a Danish client earlier this year.

But with some quick-win optimizations I boosted performance across the whole site, which has increased both rankings and traffic (a lot).

And this is what this case study is all about – increasing health score and rankings by focusing on the technical quick wins. Quick wins that you can do yourself without even being a web developer.

…And I’m going to show you how you can succeed in every niche with some go-to steps.

I’ll walk you through:

- The case

- The results

- What we did

- Why we did as we did

- Key takeaways for you to remember

The case: SEO challenges in the affiliate industry

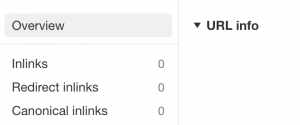

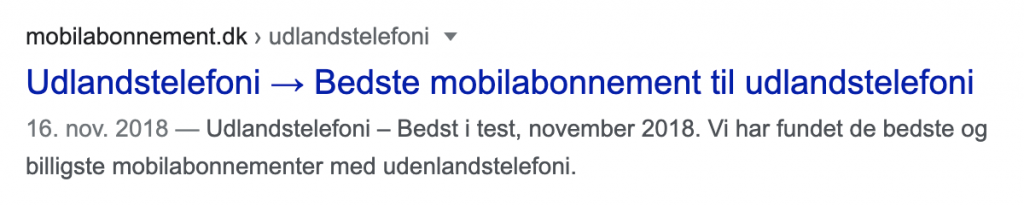

Our client, Mobilabonnement.dk, works in the affiliate industry comparing mobile providers.

In the affiliate industry, a lot of tactics are in use all the time, some more black hat than others, and this can have a really negative impact on rankings.

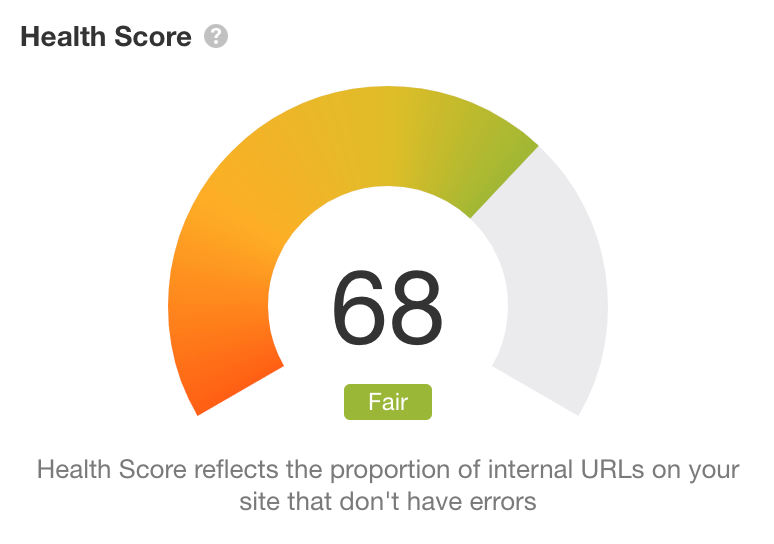

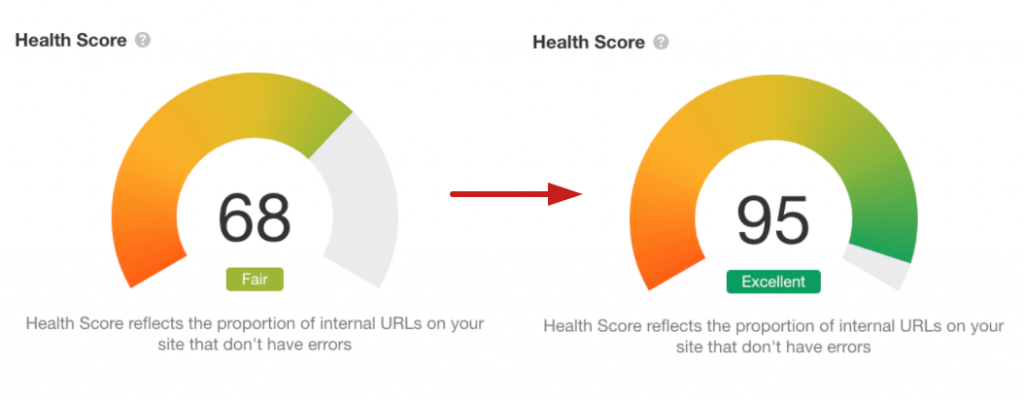

Because of this, by the time we began our work our client had lost a lot of important rankings and traffic, and the Health score was down to 68.

68 is not that bad, but it’s definitely not good either if you want to be at the very top of the industry. I think that’s why an SEO tool such as Ahrefs uses “fair” to describe the number.
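To put a number like 68 in context: Ahrefs describes the Health score as the share of crawled internal URLs that have no errors. The sketch below assumes that definition, and the URL counts in it are made up for illustration, since the audit figures in this case study count issues rather than URLs.

```python
def health_score(total_internal_urls, urls_with_errors):
    """Percentage of crawled internal URLs without errors (assumed definition)."""
    if total_internal_urls <= 0:
        raise ValueError("total_internal_urls must be positive")
    clean_urls = total_internal_urls - urls_with_errors
    return round(100 * clean_urls / total_internal_urls)

# Hypothetical crawl of 1,000 internal URLs.
print(health_score(1000, 320))  # 320 URLs with errors
print(health_score(1000, 50))   # 50 URLs with errors
```

Under this definition the score only moves when URLs become error-free, which is why fixing errors changes it and fixing warnings or notices does not.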

So the plan was clear: we needed to fix these issues before thinking about content and link building. Because without a strong technical foundation there is really nothing to grow on.

The results of tackling technical issues for site health

During this campaign we have seen really great results. The most important ones include:

- Going from 701 errors to only 172.

- Health score going from 68 to 95.

- +366 keywords increased in the month after the technical improvements.

- Organic traffic has increased by 209% overall, which wouldn’t have been possible with a Health score of 68.

What we did to address technical challenges

So before I begin, let me say that everything we did was based on this particular website and its technical challenges. Nevertheless, these issues are very common, which is why I want to share them to help with your own future work.

Later in this case study I’ll show you data from the SEO tool Ahrefs, which is the tool I used to obtain these results. You can use a different tool, such as Oncrawl, for these steps, but whichever tool you choose, I recommend using only one at a time.

Ready? Here we go.

The site audit

So the very first thing we do with every website we optimize is a technical site audit. In order to rank we need to look at the Health score and see the numbers – even before content and link building begins.

When analyzing Mobilabonnement.dk we saw a Health score of 68:

So we immediately knew that something was wrong and it needed to be fixed.

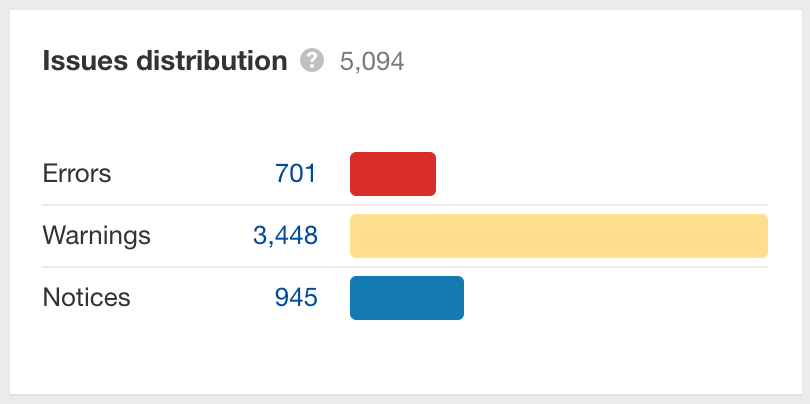

In Ahrefs you’ll find 3 types of issues. These include:

- Errors

- Warnings

- Notices

At this point Mobilabonnement.dk had 701 errors, 3,448 warnings and 945 notices.

As you probably know, errors are the most serious issues and therefore the biggest threats, so fixing them was our priority.

And since we SEOs don’t have all the time in the world, we of course started with the low-hanging fruit: the errors.

So in front of us we had errors concerning:

- 404 pages

- HTTPS pages having internal links to HTTP

- Pages that had no outgoing links

- Orphan pages

- Redirect chains

- Missing meta descriptions

- Duplicate pages without canonical

- Image files that were too large

By the end of the optimization I had fixed almost all of the above without the help of a web developer.

So here is how I did it.

Fixing 404 issues

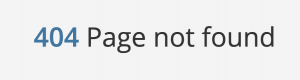

A 404 (‘not found’) is also known as a ‘broken page’. It’s one of the most common 4xx errors and indicates that a URL does not exist.

So if a link on your website points to a 404, the link is broken and becomes a “dead end” for your users. This damages the user experience, which is why it matters for technical SEO.

The goal is to have no 404 issues on your site.

To fix the issue, we listed all the 404 URLs on the website and found every internal link pointing at them, which we could do by exporting the site audit output.

We then reviewed each of those internal links and either removed it or pointed it at a live page that was relevant to the context of the linking page.

If you can’t do either of the above, you can create 301 redirects from the 404 URLs to relevant live pages.
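If you want to work through an exported link list yourself, the lookup can be sketched in a few lines of Python. This is only an illustration: the function name, the URLs and the shape of the data (source page, target URL, status code) are assumptions, not the format any particular tool exports.

```python
from collections import defaultdict

def links_to_broken_pages(links, status_by_url):
    """Map each 404 URL to the sorted list of pages that link to it."""
    broken = defaultdict(list)
    for source, target in links:
        if status_by_url.get(target) == 404:
            broken[target].append(source)
    return {url: sorted(sources) for url, sources in broken.items()}

# Hypothetical rows standing in for an exported site audit.
links = [
    ("/", "/old-offer"),
    ("/guides", "/old-offer"),
    ("/", "/providers"),
]
status_by_url = {"/old-offer": 404, "/providers": 200}

print(links_to_broken_pages(links, status_by_url))
```

Each key in the result is a broken URL, and each value is the list of pages where a link needs to be removed or replaced.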

HTTPS pages having internal links to HTTP

This issue accounted for the most errors, so it was a top priority.

HTTP URLs indicate an insecure page. We found 489 internal links pointing to HTTP URLs, something that can harm both the authority and the trustworthiness of a site.

The reason behind all these errors was a lot of content that hadn’t been updated for years, which left a huge number of internal HTTP links in place.

To fix this issue, we listed all the internal outlinks that went to HTTP URLs. From here we corrected the HTTP URLs to HTTPS versions. This solved the problem.
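If you have access to the page HTML, the same correction can be sketched as a search and replace. This is a minimal illustration rather than the way the fix was carried out here; `example.com` and the function name are placeholders, and it assumes the HTTPS version of every linked page exists.

```python
import re

def upgrade_internal_links(html, domain):
    """Rewrite http:// links to the given domain (with or without www.) to https://."""
    pattern = re.compile(
        r"http://((?:www\.)?" + re.escape(domain) + r")(?![\w.-])",
        re.IGNORECASE,
    )
    return pattern.sub(r"https://\1", html)

# Hypothetical snippet: one internal HTTP link and one external link.
html = '<a href="http://example.com/plans">Plans</a> <a href="http://other.org/">Other</a>'
print(upgrade_internal_links(html, "example.com"))
```

Only links to the named domain are touched, so external HTTP links stay as they are and can be reviewed separately.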

Pages that had no outgoing links

This is one of the really low-hanging fruits.

If a page has no outgoing links, it will be a ‘dead end’ for both your users and the search engine crawlers.

We created a list of every page having no outgoing links and made sure that the pages had at least one outgoing link that leads users through to another important page on the website.

Fixing orphan pages

Just as some pages are ‘dead ends’, others have no internal links pointing at them. This is an issue because it can then be difficult for both users and crawlers to discover that these pages even exist.

Therefore we revised the website navigation and made sure that every important page had at least one internal link pointing to it, making it accessible to users and crawlers.
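Dead-end pages and orphan pages are two sides of the same check: one looks for pages with no links going out, the other for pages with no links coming in. A rough sketch, assuming you have a list of pages and a list of internal links as (source, target) pairs; the names and URLs are hypothetical.

```python
def find_dead_ends_and_orphans(pages, links, home="/"):
    """Return (pages with no outgoing links, pages with no incoming links)."""
    has_outgoing = {source for source, _ in links}
    has_incoming = {target for _, target in links}
    dead_ends = sorted(p for p in pages if p not in has_outgoing)
    # The homepage is the crawl entry point, so it is never reported as an orphan.
    orphans = sorted(p for p in pages if p not in has_incoming and p != home)
    return dead_ends, orphans

# Hypothetical site with four pages.
pages = ["/", "/plans", "/guides", "/old-landing"]
links = [
    ("/", "/plans"),
    ("/", "/guides"),
    ("/plans", "/"),
    ("/old-landing", "/plans"),
]

dead_ends, orphans = find_dead_ends_and_orphans(pages, links)
print("Dead ends:", dead_ends)
print("Orphans:", orphans)
```

In this made-up example `/guides` links nowhere and `/old-landing` is linked from nowhere, so each one needs a link added in the missing direction.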

Redirect chains

When doing the site audit we found some redirect chains, which could slow down the page loading speed. A redirect chain is a series of redirects that pass from one URL to the next. This forces users and search engines such as Google to wait until there are no more redirects to follow.

We looked at the internal links pointing into redirect chains to find the affected pages. Where possible, we replaced the redirecting URLs with a direct link to the final destination URL. That way, search engines and users didn’t have to wait, which improved the user experience on the page.
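Finding the final destination of a chain is easy to sketch once the redirects are in a simple lookup table. The mapping below is invented for illustration, and `max_hops` is an arbitrary safety limit, not a value taken from any tool.

```python
def resolve_redirect(url, redirects, max_hops=10):
    """Follow a redirect map until a URL no longer redirects; return (final_url, hops)."""
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            raise ValueError("redirect loop or chain too long")
        seen.add(url)
    return url, hops

# Hypothetical chain: /a redirects to /b, which redirects to /c.
redirects = {"/a": "/b", "/b": "/c"}

final_url, hops = resolve_redirect("/a", redirects)
print(final_url, hops)
```

Any internal link whose target resolves with more than one hop is a candidate for being pointed straight at the final URL.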

Missing meta descriptions

Fixing missing meta descriptions is SEO 101. Meta descriptions are not a direct ranking factor (or at least not a very big one), but they can indirectly influence rankings since they influence the click-through rate (CTR) of a page.

None of the pages we found with missing meta descriptions was fulfilling its potential on the search engine results page (SERP). Without meta descriptions, we were relying on Google to write its own summary of what each page was about, and competitors would be more likely to have a higher CTR.

Working from a list of pages missing meta descriptions, we provided meta descriptions for every indexable page on Mobilabonnement.dk. This helps users understand what the pages are about.
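If you ever need to check a page by hand, the test an audit tool runs can be approximated with Python’s built-in HTML parser. This is a simplified sketch with hypothetical names; it treats an empty description as missing.

```python
from html.parser import HTMLParser

class MetaDescriptionParser(HTMLParser):
    """Remember the content of <meta name="description"> if the page has one."""

    def __init__(self):
        super().__init__()
        self.description = None

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        if (attributes.get("name") or "").lower() == "description":
            self.description = (attributes.get("content") or "").strip()

def has_meta_description(html):
    """True when the page has a non-empty meta description."""
    parser = MetaDescriptionParser()
    parser.feed(html)
    return bool(parser.description)

# Hypothetical pages: one with a description, one without.
with_description = '<head><meta name="description" content="Compare mobile plans."></head>'
without_description = "<head><title>Mobile plans</title></head>"

print(has_meta_description(with_description))
print(has_meta_description(without_description))
```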

Duplicate pages without canonical

This one is about pages with either duplicate content or very similar content. This can confuse search engines, which can’t know which page to rank for a given keyword.

If you have pages that are very similar, it can help to add a “rel=canonical” link tag that points to the page you want the search engines to rank.

Tools like Ahrefs and Oncrawl provide a report with this information. You will get an exact overview of the pages having duplicate issues.

This is exactly what we did: working from the report, we added canonical tags that pointed to the most important pages. This makes it clear to search engines which version they should rank.
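For reference, a canonical is a `<link>` element placed in the `<head>` of the duplicate page. The small sketch below builds that tag for a group of duplicates; the URLs are placeholders, and how you insert the tag depends on your CMS.

```python
from html import escape

def canonical_tag(preferred_url):
    """Build the <link> element that belongs in the <head> of a duplicate page."""
    return f'<link rel="canonical" href="{escape(preferred_url, quote=True)}">'

# Hypothetical duplicate group: URL variants that all show the same content.
preferred = "https://example.com/plans"
duplicates = [
    "https://example.com/plans?sort=price",
    "https://example.com/plans/index.html",
]

for url in duplicates:
    print(url, "->", canonical_tag(preferred))
```

Every duplicate in the group gets the same tag, pointing at the one URL you want to rank.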

Too large image files

The last error showed image files that were too large. This is very common and should be fixed, because oversized image files slow down page speed.

To fix this problem, we exported the images and compressed the files using an online compression tool. This reduced the image file sizes significantly and therefore improved overall page speed by reducing the amount of content that had to be downloaded and rendered to display each page.
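If you have the images in a local folder, a short script can tell you which ones to compress first. This is a sketch under assumptions: the 100 KB threshold is an arbitrary example rather than the limit any audit tool uses, and the file names are invented.

```python
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".gif", ".webp"}

def image_sizes(folder):
    """Return {relative path: size in bytes} for every image file under folder."""
    root = Path(folder)
    return {
        str(path.relative_to(root)): path.stat().st_size
        for path in root.rglob("*")
        if path.is_file() and path.suffix.lower() in IMAGE_SUFFIXES
    }

def oversized(sizes, max_bytes=100_000):
    """List (name, size) pairs above max_bytes, largest first."""
    large = [(name, size) for name, size in sizes.items() if size > max_bytes]
    return sorted(large, key=lambda item: item[1], reverse=True)

# Hypothetical sizes; on a real export you would pass image_sizes("your-folder").
sizes = {"hero.png": 850_000, "logo.png": 12_000, "banner.jpg": 240_000}
print(oversized(sizes))
```

Sorting largest first means the files with the biggest potential saving are handled before the rest.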

This was the last error I fixed in this campaign.

In the end I had spent hours on this work. But having an SEO tool for the site audit helped me automate a process that could otherwise have turned into days of work.

And the results speak for themselves. Within one week the Health score went from 68 to 98:

Why we did what we did: prioritizing SEO issues

This process could perhaps have been done more quickly if we had had access to a web developer, but that is not the point in this case.

Today, a lot of companies can’t afford to hire their own developers because of the economy. I wanted to show you that these quick wins can be a way to improve site performance for a startup company.

But since anyone in SEO is working on a lot of different projects, you obviously need to prioritize your time. Therefore I always recommend fixing the errors first, before warnings and notices. The errors that harm your rankings the most are also the ones that benefit performance the most once they are fixed.

The optimizations I discussed in this article concern the errors I saw for our client. You may see something different when it’s your turn to fix a website, but at least you have some inspiration for fixing the issues.

Take my advice and fix the errors. Only afterwards should you focus on the other issues.

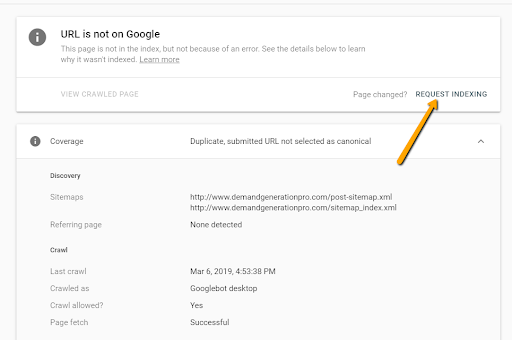

As a last hack, remember that if you have made any important changes to a page, it can be a good idea to request indexing in Google Search Console (out of service at the moment but will soon be back).

Key takeaways

In the world of technical SEO, the issues can differ for each site. Nevertheless the errors shown above include some of the most common issues.

By using a technical SEO tool that helps you run a technical site audit, you’ll gain insight into the exact pages that need technical optimization.

I know technical SEO is only a part of your SEO efforts, but it should be the first thing you optimize. Because without technical health you won’t be able to rank on search engines like Google.

If you’re going to do the technical optimization yourself, I recommend keeping your eyes on the quick wins to begin with. You’ll gain quick results without doing a lot of work (though of course it depends on how many issues you have).

Furthermore, make sure to export all of your findings to a sheet for each project. This gives you an overview and keeps your findings accessible both now and in the future.